Dynamic programming

A branch of mathematics studying the theory and the methods of solution of multi-step problems of optimal control.

In dynamic programming of controlled processes the objective is to find among all possible controls a control that gives the extremal (maximal or minimal) value of the objective function — some numerical characteristic of the process. A multi-stage process is one the structure of which consists of several steps, or else a process in which the control is subdivided into a sequence of successive stages (steps), which usually correspond to different moments in time. In a number of problems the multi-stage nature is dictated by the process itself (for example, in the determination of the optimal dimensions of the steps of a multi-stage rocket or in finding the most economical way of flying an aircraft), but it may also be artificially introduced in order to be able to apply methods of dynamic programming. Thus, the meaning of "programming" in dynamic programming is making decisions and planning, while the word "dynamic" indicates the importance of time and of the sequence of execution of the operations in the process and methods under study.

The methods of dynamic programming are a part of the methods of operations research, and are utilized in optimal planning problems (e.g. in problems of the optimal distribution of available resources, in the theory of inventory management, replacement of equipment, etc.), and in solving numerous technical problems (e.g. control of successive chemical processes, optimal design of structural highway layers, etc.).

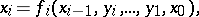

The basic idea will now be illustrated. Let the process of controlling a given system  consist of

consist of  stages; at the

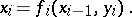

stages; at the  -th step let the control

-th step let the control  convert the system from the state

convert the system from the state  attained during the

attained during the  -th step to the new state

-th step to the new state  . This transition process is realized by a given function

. This transition process is realized by a given function  , and the new state is determined by the values

, and the new state is determined by the values  ,

,  :

:

|

Thus, the control operations  convert the system from its initial state

convert the system from its initial state  into the final state

into the final state  , where

, where  and

and  are the sets of feasible initial and final states of

are the sets of feasible initial and final states of  . A possible way of stating the extremal problem is given below. The initial state

. A possible way of stating the extremal problem is given below. The initial state  is known. The task is to select controls

is known. The task is to select controls  so that the system

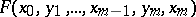

so that the system  is converted to a feasible final state and, at the same time, the objective function

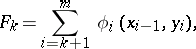

is converted to a feasible final state and, at the same time, the objective function  attains its maximal value

attains its maximal value  , i.e.

, i.e.

|

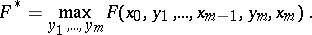

An important feature of dynamic programming is that it is applicable to additive objective functions only. In the example above, this means that

|

Another requirement of the method is the absence of "after-effects" in the problem, i.e. the solutions (controls) selected for a given step may only affect the state  of the system at the moment

of the system at the moment  . In other words, processes of the type

. In other words, processes of the type

|

are not considered. Both these restrictions may be weakened, but only by unduly complicating the method.

Conventional methods of mathematical analysis are altogether inapplicable to problems of dynamic programming or else involve very laborious computations. The method of dynamic programming is based on the optimality principle formulated by R. Bellman: Assume that, in controlling a discrete system  , a certain control on the discrete system

, a certain control on the discrete system  , and hence the trajectory of states

, and hence the trajectory of states  , have already been selected, and suppose it is required to terminate the process, i.e. to select

, have already been selected, and suppose it is required to terminate the process, i.e. to select  (and hence

(and hence  ); then, if the terminal part of the process is not optimal, i.e. if the maximum of the function

); then, if the terminal part of the process is not optimal, i.e. if the maximum of the function

|

is not attained by it, the process as a whole will not be optimal.

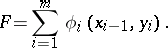

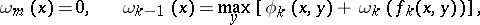

By using the optimality principle, the basic functional relationship is readily obtained. One determines the sequence of functions of the variable  :

:

|

. Here, the maximum is taken over all the control operations feasible at step

. Here, the maximum is taken over all the control operations feasible at step  . The relation defining the dependence of

. The relation defining the dependence of  on

on  is known as the Bellman equation. The meaning of the functions

is known as the Bellman equation. The meaning of the functions  is clear: If, in step

is clear: If, in step  , the system appears to be in state

, the system appears to be in state  , then

, then  is the maximum possible value of the function

is the maximum possible value of the function  . Simultaneously with the construction of the functions

. Simultaneously with the construction of the functions  one also finds the conditional optimal controls

one also finds the conditional optimal controls  at each step (i.e. the values of the optimal controls under the assumption that the system is in the state

at each step (i.e. the values of the optimal controls under the assumption that the system is in the state  at the step

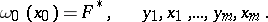

at the step  ). The final optimal controls are found by successively computing the values

). The final optimal controls are found by successively computing the values

|

The following property of the method of dynamic programming is evident from the above: It solves not merely one specific problem for a given  , but all problems of this type, for all initial states. Since the numerical realization of the method of dynamic programming is laborious, i.e. requires a large storage capacity of the computer, it is expediently employed whenever certain typical problems have to be solved a large number of times (e.g. the optimum flight conditions for an aircraft under changing weather conditions). Even though dynamic programming problems are formulated for discrete processes, the method may often be successfully employed in solving problems with continuous parameters.

, but all problems of this type, for all initial states. Since the numerical realization of the method of dynamic programming is laborious, i.e. requires a large storage capacity of the computer, it is expediently employed whenever certain typical problems have to be solved a large number of times (e.g. the optimum flight conditions for an aircraft under changing weather conditions). Even though dynamic programming problems are formulated for discrete processes, the method may often be successfully employed in solving problems with continuous parameters.

Dynamic programming furnished a novel approach to many problems of variational calculus.

An important branch of dynamic programming is constituted by stochastic problems, in which the state of the system and the objective function are affected by random factors. Such problems include, for example, optimal inventory control with allowance of random inventory replenishment. Controlled Markov processes are the most natural domains of application of dynamic programming in such cases.

The method of dynamic programming was first proposed by Bellman. Rigorous foundations of the method were laid by L.S. Pontryagin and his school, who studied the mathematical theory of control process (cf. Optimal control, mathematical theory of).

Even though the method of dynamic programming considerably simplifies the initial problems, its explicit utilization is usually very laborious. Attempts are made to overcome this difficulty by developing approximation methods.

References

| [1] | R. Bellman, "Dynamic programming" , Princeton Univ. Press (1957) |

| [2] | V.G. Boltyanskii, "Mathematical methods of optimal control" , Holt, Rinehart & Winston (1971) (Translated from Russian) |

| [3] | G.F. Hadley, "Nonlinear and dynamic programming" , Addison-Wesley (1964) |

| [4] | G. Hadley, T. Whitin, "Analysis of inventory systems" , Prentice-Hall (1963) |

| [5] | R.A. Howard, "Dynamic programming and Markov processes" , M.I.T. (1960) |

Comments

If the process to be controlled is described by a differential equation instead of a difference equation as considered above, a "continuous" version of the Bellman equation exists, but it is usually referred to as the Hamilton–Jacobi–Bellman equation (the HJB-equation). The HJB-equation is a partial differential equation. Application of the so-called "method of characteristics" solution method to this partial differential equation leads to the same differential equations as involved in the Pontryagin maximum principle. Derivation of this principle via the HJB-equation is only valid under severe restrictions. Another derivation exists, using variational principles, which is valid under weaker conditions. For a comparison of dynamic programming approaches versus the Pontryagin principle see [a1], [a2].

In the terminology of automatic control theory one could say that the dynamic programming approach leads to closed-loop solutions of the optimal control and Pontryagin's principle leads to open-loop solutions.

For some discussion of stochastic dynamic programming cf. the editorial comments to Controlled stochastic process.

References

| [a1] | A.E. Bryson, Y.-C. Ho, "Applied optimal control" , Ginn (1969) |

| [a2] | W.H. Fleming, R.W. Rishel, "Deterministic and stochastic optimal control" , Springer (1975) |

Dynamic programming. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Dynamic_programming&oldid=11658