Optimal synthesis control

A solution of a problem in the mathematical theory of optimal control (cf. Optimal control, mathematical theory of), consisting of a synthesis of an optimal control (a feedback synthesis) in the form of a control strategy (a feedback principle), as a function of the current state (position) of a process (see [1]–[3]). The value of the control is defined not only by the current time, but also by the admissible values of the current parameters. In this way the introduction of a positional strategy makes possible an a posteriori realization of a control $u$, corrected on the basis of supplementary information obtained during the process.

, corrected on the basis of supplementary information obtained during the process.

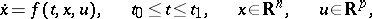

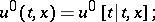

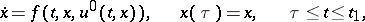

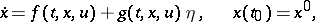

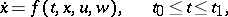

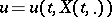

The simplest synthesis problem, for example, for a system

| (1) |

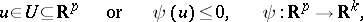

with constraints

| (2) |

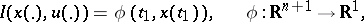

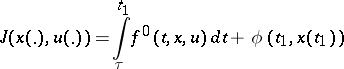

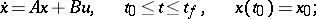

and a given "terminal" criterion

|

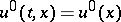

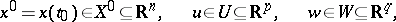

assumes that a solution  is being sought to minimize the functional

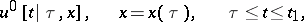

is being sought to minimize the functional  among the functions of the form

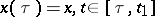

among the functions of the form  for an arbitrary initial position

for an arbitrary initial position  . The natural course is to find for every pair

. The natural course is to find for every pair  a solution of the corresponding problem of constructing an optimal programming control

a solution of the corresponding problem of constructing an optimal programming control

|

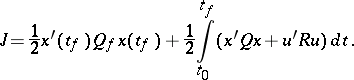

as a minimum of that same functional  and with those same constraints. It is further supposed that

and with those same constraints. It is further supposed that

|

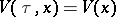

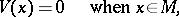

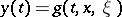

if the function  is correctly defined, while the equation

is correctly defined, while the equation

| (3) |

has a unique solution, then the synthesis problem can be solved; moreover, the optimal values of  found in the classes of programming and synthesis controls coincide (in general, conditions prevail which ensure the existence in a specific sense of the solutions of equation (3), and conditions also prevail which guarantee the optimality of all the trajectories of this equation).

found in the classes of programming and synthesis controls coincide (in general, conditions prevail which ensure the existence in a specific sense of the solutions of equation (3), and conditions also prevail which guarantee the optimality of all the trajectories of this equation).

The synthesized function $u^0(t, x)$, being an optimal synthesis control, leads to an optimal solution for the minimum of the functional $I$ in the problem of optimal control for any initial position $\{t_0, x^0\}$. This is in contrast to an optimal programming control, which in general depends on the fixed starting point $\{t_0, x^0\}$ of the process. The solution of an optimal control problem in the form of an optimal synthesis control has many applications, especially in practical procedures for implementing the optimal control in the presence of limited information or perturbations in the dynamics. In these situations a synthesis control is preferable to a programming control.
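The practical difference between the two kinds of control can be seen in a small numerical sketch. The example below is an illustration chosen here, not taken from the cited sources: it assumes the scalar system $\dot{x} = u + w$ with $|u| \le 1$ on $[0, 1]$, the terminal criterion $x(t_1)^2$, and a constant unmodelled disturbance $w$. The names `simulate`, `programming_control` and `synthesis_control` are hypothetical. For the nominal system ($w = 0$) both controls reach the minimum; with the disturbance, only the feedback law corrects the motion.

```python
def clip(value, low, high):
    """Project a control value onto the admissible interval [low, high]."""
    return max(low, min(high, value))


def simulate(control, x0, w=0.0, t1=1.0, n=100):
    """Euler simulation of dx/dt = u + w on [0, t1]; returns x(t1)."""
    dt = t1 / n
    x = x0
    for k in range(n):
        remaining = (n - k) * dt                            # time left until t1
        u = clip(control(k * dt, x, remaining), -1.0, 1.0)  # constraint |u| <= 1
        x += dt * (u + w)
    return x


X0 = 0.5  # starting point for which the programming control is computed


def programming_control(t, x, remaining):
    """Open-loop control fixed in advance for the start X0; ignores the current x."""
    return -X0


def synthesis_control(t, x, remaining):
    """Feedback control: steer the current state x to zero in the time left."""
    return -x / remaining


results = {
    "program, nominal": simulate(programming_control, X0),
    "synthesis, nominal": simulate(synthesis_control, X0),
    "program, disturbed": simulate(programming_control, X0, w=0.2),
    "synthesis, disturbed": simulate(synthesis_control, X0, w=0.2),
}
for name, x_final in results.items():
    print(f"{name:22s} x(t1) = {x_final:+.4f}")
```

In this sketch the programming control accumulates the whole effect of the disturbance, whereas the feedback law absorbs most of it until the constraint $|u| \le 1$ becomes active near the end of the interval.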

The search for  in the form of a function of the current state is immediately linked to dynamic programming (see [2]). The return function (Bellman function, value function)

in the form of a function of the current state is immediately linked to dynamic programming (see [2]). The return function (Bellman function, value function)  , being introduced as a minimum (maximum) of a quantity to be optimized (for example, the functional

, being introduced as a minimum (maximum) of a quantity to be optimized (for example, the functional

| (4) |

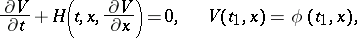

for the system (1) if  ), must satisfy the Bellman equation with boundary conditions depending on the aim of the control and

), must satisfy the Bellman equation with boundary conditions depending on the aim of the control and  . For the system (1), (2) and (4), this equation takes the form

. For the system (1), (2) and (4), this equation takes the form

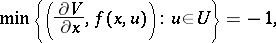

| (5) |

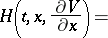

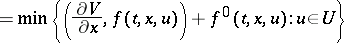

where

| (6) |

|

is the Hamilton function. This equation is connected with the equations figuring in the conditions of the Pontryagin maximum principle, in the same way as the Hamilton–Jacobi equation for a return function is linked in analytical mechanics to the ordinary Hamiltonian differential equations (see Variational principles of classical mechanics).

The derivation of equation (5) for the synthesis problem relies on the optimality principle, which asserts that any section of an optimal trajectory is itself an optimal trajectory (see [2]). The viability of this approach depends on the correct definition of the informational properties of the process, particularly on the concept of position (current state, see [5]).

In the time-optimal control problem, which concerns the minimal time $T(x)$ for a trajectory of an autonomous system (1) to hit a set $M$, starting from a position $x$, the function $T(x)$ can be considered as a particular kind of potential $V(x)$ with respect to $M$. The choice of an optimal control $u^0$ from conditions (5), (6) now has the form

$$\min_{u \in U} \left( \frac{\partial T}{\partial x}, f(x, u) \right) = \left( \frac{\partial T}{\partial x}, f(x, u^0) \right) = -1,$$

which means that $u^0$ realizes the descent of the optimal trajectory $x^0(t)$ relative to the level surfaces of the function $T(x)$ by the fastest method permitted by the condition $u \in U$.
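A one-dimensional worked example may make the "fastest descent" condition concrete. The system, the constraint and the target set below are assumptions chosen for illustration and are not taken from the cited sources.

```latex
\begin{aligned}
&\dot{x} = u, \qquad |u| \le 1, \qquad M = \{0\}, \\
&T(x) = |x|, \qquad \frac{\partial T}{\partial x} = \operatorname{sign} x \quad (x \ne 0), \\
&\min_{|u| \le 1} \frac{\partial T}{\partial x}\, u
   = -\left| \frac{\partial T}{\partial x} \right| = -1,
   \qquad u^0(x) = -\operatorname{sign} x .
\end{aligned}
```

Along the motion generated by $u^0$ one has $dT(x(t))/dt = -1$, so the level sets of $T$ are crossed at unit rate and the target is reached in time $|x|$. Note that $T$ fails to be differentiable exactly at the target point $x = 0$.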

The use of the method of dynamic programming (as a sufficient condition of optimality) is rigorous if the value function $V$ satisfies certain smoothness conditions everywhere (for example, in problems (3)–(6) the function $V(t, x)$ must be continuously differentiable), or if smoothness conditions are satisfied everywhere with the exception of a "special" set $N$. When certain special "conditions of regular synthesis" are fulfilled, the method of dynamic programming is equivalent to Pontryagin's principle, which is then seen to be a necessary and sufficient condition for optimality (see [8]). Difficulties connected with the a priori verification of the applicability of the method of dynamic programming and with the need to solve the Bellman equation complicate the use of this method. The method of dynamic programming has been extended to problems of optimal synthesis control for discrete (multi-stage) systems, where the corresponding Bellman equation is a finite-difference equation (see [2], [9]).
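For a multi-stage system the finite-difference Bellman equation can be solved by a backward recursion. The following is a minimal sketch under assumptions made here for illustration: the integer system $x_{k+1} = x_k + u_k$ with $u_k \in \{-1, 0, 1\}$, stage cost $x_k^2 + u_k^2$, terminal cost $x_N^2$, and $N = 4$ stages. All names (`bellman_recursion`, `best_programming_cost`, and so on) are hypothetical. The brute-force search over open-loop sequences is included only to check that the optimal values in the classes of programming and synthesis controls coincide.

```python
from itertools import product

CONTROLS = (-1, 0, 1)      # admissible control values at every stage
STATES = range(-6, 7)      # integer state grid
N = 4                      # number of stages


def stage_cost(x, u):
    return x * x + u * u


def terminal_cost(x):
    return x * x


def bellman_recursion():
    """Backward recursion V_k(x) = min_u [stage_cost(x, u) + V_{k+1}(x + u)]."""
    V = [dict() for _ in range(N + 1)]
    policy = [dict() for _ in range(N)]
    for x in STATES:
        V[N][x] = terminal_cost(x)
    for k in range(N - 1, -1, -1):
        for x in STATES:
            # Only controls that keep the state on the grid are considered.
            candidates = [(stage_cost(x, u) + V[k + 1][x + u], u)
                          for u in CONTROLS if (x + u) in V[k + 1]]
            V[k][x], policy[k][x] = min(candidates)
    return V, policy


def best_programming_cost(x0):
    """Brute force over all open-loop control sequences starting from x0."""
    best = None
    for seq in product(CONTROLS, repeat=N):
        x, cost = x0, 0
        for u in seq:
            cost += stage_cost(x, u)
            x += u
        cost += terminal_cost(x)
        best = cost if best is None else min(best, cost)
    return best


V, policy = bellman_recursion()
print("V_0(2) by dynamic programming:", V[0][2])
print("best open-loop cost from x0 = 2:", best_programming_cost(2))
print("feedback law at stage 0:", {x: policy[0][x] for x in range(-2, 3)})
```

The recursion yields the feedback law `policy[k][x]` for every grid state at once, whereas the brute-force search must be repeated for each starting point.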

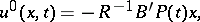

In problems of optimal control with differential constraints, the method of dynamic programming gives an effective solution of the synthesis problem in closed form for a class of problems which embraces linear systems with a quadratic performance criterion (4) (the functions $\varphi$, $f^0$ are positive-definite quadratic forms in $x$ and in $x$, $u$, respectively). This problem, related to the analytic construction of an optimal regulator, becomes a problem of optimal stabilization of the system if $t_1 = \infty$ and $\varphi \equiv 0$ (the property of asymptotic stability of the equilibrium position of a synthesized system follows directly from the existence of an admissible control) (see [10], [4]). The existence of a solution in the given instance is ensured by the property of stabilizability of the system (see [4]). For linear stationary and periodic systems it is equivalent to the property of controllability of the unstable modes of the system (see Optimal programming control).

The solution of the problem of optimal stabilization has shown that the corresponding Bellman function is at the same time the "optimal" Lyapunov function for the initial system closed by the optimal control obtained. Under these circumstances effective conditions of controllability have been obtained, and a complete analogue of Lyapunov's theory of stability (in a first approximation and in critical cases) has been created for problems of stabilization; it embraces ordinary quasi-linear and periodic systems and also systems with delay. In the latter case, the role of the Bellman function is played by "optimal" Lyapunov–Krasovskii functionals, given on the sections of the trajectory that correspond to the value of the delay in the system (see [4], [5]). The theory of linear-quadratic problems of optimal control is also well developed for partial differential equations (see [11]).
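The role of the Bellman function as a Lyapunov function can be checked by hand in the scalar case. The following worked example is a sketch under assumptions chosen here (a scalar linear system with a quadratic integral criterion on an infinite interval); the symbols $a$, $b$, $q$, $r$, $k$ are introduced only for this illustration.

```latex
\begin{aligned}
&\dot{x} = a x + b u, \qquad
  I = \int_0^\infty \left( q x^2 + r u^2 \right) dt, \qquad q > 0,\ r > 0,\ b \ne 0, \\
&V(x) = k x^2, \qquad
  \min_u \left[ 2 k x (a x + b u) + q x^2 + r u^2 \right] = 0
  \ \Longrightarrow\ u^0(x) = -\frac{b k}{r}\, x, \\
&2 a k - \frac{b^2}{r} k^2 + q = 0, \qquad
  k = \frac{r}{b^2} \left( a + \sqrt{a^2 + \frac{b^2 q}{r}} \right) > 0, \\
&\dot{x} = -\sqrt{a^2 + \frac{b^2 q}{r}}\; x, \qquad
  \frac{dV}{dt} = -\left( q x^2 + r (u^0)^2 \right) < 0 \quad (x \ne 0).
\end{aligned}
```

The value function $V(x) = k x^2$ is positive definite and decreases along every non-trivial motion of the closed-loop system, so it serves as a Lyapunov function even when the uncontrolled system is unstable ($a > 0$).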

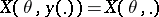

In applied problems of optimal synthesis control it is not always possible to measure all phase coordinates of the system. The following problem of observation therefore arises, permitting numerous generalizations: knowing the realization $y[t]$, on an interval $t_\alpha \le t \le t_\beta$, of an accessible measurement of a function $y = g(t, x)$ of the coordinates of the system (1) (the control $u$ being assumed known), find the vector $x(\vartheta)$ at the given moment $\vartheta$. Systems which, through a unique realization $y[t]$, allow one to establish $x(\vartheta)$, whatever its value, are called completely observable.

The property of complete observability, as well as the construction of the corresponding analytic operations that single out the state vector $x$ and the optimization of these operations, have been well studied for linear systems. Here, a duality principle is known: for every problem of observation, a corresponding equivalent two-point boundary value control problem for the dual system can be established. A consequence of this is that the property of complete observability of a linear system coincides with the property of complete controllability of the dual system with control. Moreover, it turns out that the corresponding dual boundary value problems of optimal observation and optimal control can also be composed in such a way that their solutions coincide (see [3]). The properties of controllability and observability of linear systems have many generalizations to linear infinite-dimensional systems (equations in a Banach space, systems with deviating argument, partial differential equations). There are also a number of results characterizing the corresponding properties. For non-linear systems, only some local theorems on observability are known. Solutions of the problem of observation have found numerous applications in synthesis problems with incomplete information on coordinates, among them problems of optimal stabilization (see [3]–[5], [14], [15]).
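For a stationary linear system $\dot{x} = Ax$, $y = Cx$ the duality can be checked numerically with the well-known Kalman rank criterion. The sketch below is an illustration under assumptions made here: the double integrator is a hypothetical example, and the helper names (`observability_matrix`, `controllability_matrix`, `rank`) are not from the cited sources. It tests observability of the pair $(A, C)$ and controllability of the dual pair $(A^T, C^T)$ using exact rational arithmetic.

```python
from fractions import Fraction


def matmul(X, Y):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def transpose(X):
    return [list(col) for col in zip(*X)]


def rank(M):
    """Rank by Gauss-Jordan elimination over the rationals."""
    M = [[Fraction(v) for v in row] for row in M]
    r = 0
    for c in range(len(M[0])):
        pivot = next((i for i in range(r, len(M)) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(len(M)):
            if i != r and M[i][c] != 0:
                factor = M[i][c] / M[r][c]
                M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
        if r == len(M):
            break
    return r


def observability_matrix(A, C):
    """Stack the blocks C, CA, ..., CA^(n-1) row-wise."""
    rows, block = [], C
    for _ in range(len(A)):
        rows.extend(block)
        block = matmul(block, A)
    return rows


def controllability_matrix(A, B):
    """Place the blocks B, AB, ..., A^(n-1)B side by side."""
    blocks, block = [], B
    for _ in range(len(A)):
        blocks.append(block)
        block = matmul(A, block)
    return [sum((blk[i] for blk in blocks), []) for i in range(len(A))]


A = [[0, 1], [0, 0]]                  # double integrator: position, velocity
C_position = [[1, 0]]                 # only the position is measured
C_velocity = [[0, 1]]                 # only the velocity is measured

for name, C in (("position", C_position), ("velocity", C_velocity)):
    obs = rank(observability_matrix(A, C))
    dual = rank(controllability_matrix(transpose(A), transpose(C)))
    print(f"measure {name}: observability rank {obs}, dual controllability rank {dual}")
```

Measuring the position makes the double integrator completely observable, while measuring only the velocity does not, since the initial position never influences the output; in both cases the rank for the dual control problem is the same.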

The problem of synthesis control becomes especially interesting when information on the controls of the controllable process, the initial conditions and the current parameters is subject to uncertainty (perturbations). If the description of this uncertainty has a statistical character, the problems of optimal control are examined within the framework of the theory of stochastic optimal control. This theory, arising from the solution of stochastic problems [16], has been, to a very large degree, developed for systems of the form

$$\dot{x} = f(t, x, u, \eta) \tag{7}$$

with random perturbations $\eta$ described by Gaussian diffusion processes or by more general classes of Markov processes (the initial vector is also usually taken to be random). In these circumstances, as a rule, it is assumed that certain probability characteristics of the variable $\eta$ are given (for example, information on the moments of the corresponding distributions or on the parameters of the stochastic equations describing the evolution of the process $\eta(t)$).

Generally, the use of programming and synthesis controls gives essentially different values of the optimal performance indices $I^0$ (the roles of these indices can be played, for instance, by average estimates of non-negative functionals defined on trajectories of the process). The synthesis formulation of stochastic optimal control now has clear advantages, since the continuous measurement of coordinates of the system enables one to correct the movement with regard to the actual course of the random process, which could not be predicted earlier. The method of dynamic programming, combined with the theory of generating operators for the Markov semi-groups associated with stochastic processes, has led to sufficient conditions for optimality. This has led to the solution of a number of problems of stochastic optimal control on finite or infinite time intervals, including those with complete and incomplete information on current coordinates, stochastic problems of pursuit, etc. It is essential that, for the principle of optimality to apply, the control $u$ be defined at every moment of time $t$ as a function of "sufficient coordinates" $z$ of the process which are known to have the Markov property (see [5], [6], [17], [18]).

It is in this way, in particular, that the theory of optimal stochastic stabilization has been developed, in conjunction with the corresponding Lyapunov theory of stability, for stochastic systems [19].

For the formulation of an optimal synthesis controller, as well as for other aims of control, it is usual to estimate the state of a stochastic system by means of measurements. The theory of stochastic filtering addresses this question under the condition that the measurement process is disturbed by probabilistic "noises". The most complete solutions known here are for linear systems with quadratic optimality criteria (the so-called Kalman–Bucy filter, see [13]). In applying this theory to the problem of stochastic optimal synthesis control, conditions have been developed which ensure the validity of the separation principle, allowing the problem of control to be solved independently of the problem of estimating current positions on the basis of sufficient coordinates of the process (see [20]; [18] and [21] are dedicated to more general procedures of stochastic filtering, as well as to problems of stochastic optimal control when the control itself is selected from the class of Markov diffusion processes).

A strictly formalized solution of the problem of stochastic optimal control is invariably coupled with the problem of a correct foundation for the existence questions of solutions for the corresponding stochastic differential equations. The latter circumstance generated specific difficulties in the solution of problems of stochastic optimal control when non-classical constraints are applied.

An interesting process of dynamic optimization arises in problems of optimal synthesis control under conditions of uncertainty (see Optimal programming control). Synthesis solutions generally permit improvement of the quality criteria of the process, as compared to programming solutions, which are nonetheless the result of static optimization (carried out, admittedly, in a space of dynamical systems and control functions). The concepts and methods of game theory are now used to obtain a solution of these problems.

Let there be given a system

$$\dot{x} = f(t, x, u, w) \tag{8}$$

with constraints

$$x^0 \in X^0, \quad u \in U, \quad w \in W$$

on the initial vector $x^0$, the control $u$ and the disturbances $w$. In contrast to the control $u$ that is to be determined, which represents the allied player, the disturbances $w$ are in this case treated as controls of an opponent player, and one is allowed to examine any strategies $w$ formed from any admissible information. Moreover, the aims of control can be formulated from the point of view of each one of the players separately. If the stated aims are contradictory, then the problem of conflict control arises. Research into problems of synthesis control under conditions of conflict or uncertainty is the subject of the theory of differential games.

The process of forming an optimal synthesis control under conditions of uncertainty can also be complicated by incomplete information on the current state. Thus, in the system (8), only the results of indirect measurements of the phase vector $x$, namely the realization $y[t]$ of the function

$$y = g(t, x, \xi), \tag{9}$$

can be accessible; here the indefinite parameters $\xi$ are restricted by an a priori known constraint, $\xi \in \Xi$. The values $y[\tau]$, $u[\tau]$ observed up to the current time $t$ (for a given $X^0$) make it possible to construct a region of information $X(t)$ of states of the system (8) in the phase space that are consistent with the realization $y[\tau]$, equation (9) and the restrictions on the indefinite parameters. Among the elements of $X(t)$ there will also be the unknown true state of the system (8), which can be estimated by choosing a point $\hat{x}(t)$ from $X(t)$ (for example, the "centre of gravity" or the "Chebyshev centre" of $X(t)$). The study of the evolution of the regions $X(t)$ and the dynamics of the vectors $\hat{x}(t)$ is the purpose of the theory of minimax filtering. The most complete solutions are known for linear systems and convex constraints (see [22]).
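The construction of an information region can be sketched in the simplest linear, convex setting. The example below is a discrete-time analogue chosen here for illustration, not the continuous-time theory of [22]: it assumes the scalar system $x_{k+1} = a x_k + u_k + w_k$ with $|w_k| \le w_{\max}$ and the measurement $y_k = x_k + \xi_k$ with $|\xi_k| \le \xi_{\max}$, so that every information region is an interval and its Chebyshev centre is the midpoint. All function names and numerical values are hypothetical.

```python
def predict(region, a, u, w_max):
    """Propagate the interval through x_next = a*x + u + w with |w| <= w_max."""
    lo, hi = region
    ends = (a * lo, a * hi)
    return (min(ends) + u - w_max, max(ends) + u + w_max)


def update(region, y, xi_max):
    """Intersect with the states consistent with y = x + xi, |xi| <= xi_max."""
    lo, hi = region
    lo, hi = max(lo, y - xi_max), min(hi, y + xi_max)
    if lo > hi:
        raise ValueError("measurement inconsistent with the assumed bounds")
    return (lo, hi)


def chebyshev_centre(region):
    """For an interval the Chebyshev centre is simply the midpoint."""
    return 0.5 * (region[0] + region[1])


A, W_MAX, XI_MAX = 0.9, 0.1, 0.2
disturbances = [0.08, -0.08, 0.05, -0.05, 0.0]   # hypothetical w_k, within the bound
noises = [0.15, -0.15, 0.1, 0.0, -0.1]           # hypothetical xi_k, within the bound

x_true, region = 0.7, (-1.0, 1.0)                # true state and initial set X^0
history = []
for w, xi in zip(disturbances, noises):
    region = update(region, x_true + xi, XI_MAX)         # use the new measurement
    history.append((region, chebyshev_centre(region), x_true))
    x_true = A * x_true + w                              # the system moves on
    region = predict(region, A, 0.0, W_MAX)              # region follows the dynamics

for (lo, hi), centre, x in history:
    print(f"region = [{lo:+.3f}, {hi:+.3f}]  estimate = {centre:+.3f}  true = {x:+.3f}")
```

The true state stays inside every region by construction, and the midpoint estimate is guaranteed to err by at most half the width of the current interval, which is the minimax property of the Chebyshev centre in this setting.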

In general, the choice of a synthesis strategy of optimal control under conditions of uncertainty (for example, in the form of a functional $u = u(t, X(t))$ of the current information region $X(t)$) must aim at control of the evolution of the domains $X(t)$ (i.e. the alteration of their configuration and their displacement in space), in accordance with prescribed criteria. For this problem, a number of general qualitative results are known, as well as constructive solutions in the class of special linear, convex problems (see [7], [22]). Furthermore, information contained in measurements (for example, of the functions $y$ in the systems (8) and (9)) permits an a posteriori re-evaluation, during the process, of the domain of admissible values of the indefinite parameters, in the direction of narrowing it. In this way, the problem of identification of a mathematical model of a process (for example, of the parameters $w$ in equation (8)) is solved at the same time. All that has been said enables one to treat the solutions of the problem of optimal synthesis control under conditions of uncertainty as a procedure of adaptive optimal control, in which a more precise definition of the properties of the model of the process is interwoven with the choice of the controls. The questions of identification of models of dynamical processes and of the problem of adaptive optimal control are studied in detail under the assumption of existence of a probabilistic description of the indefinite parameters (see [23], [24]).

If in problems of optimal synthesis control under conditions of uncertainty the parameters $w$, $\xi$ are treated as "controls" of a fictitious opponent player, then the aims of the controls $u$ and $\{w, \xi\}$ can be different. The latter instance leads to a non-scalar quality criterion of the process. Consequently, the corresponding problems can be considered within the framework of the concepts of equilibrium situations peculiar to the multi-criterion problems of the theory of cooperative games and their generalizations.

References

| [1] | L.S. Pontryagin, V.G. Boltyanskii, R.V. Gamkrelidze, E.F. Mishchenko, "The mathematical theory of optimal processes" , Wiley (1967) (Translated from Russian) |

| [2] | R. Bellman, "Dynamic programming" , Princeton Univ. Press (1957) |

| [3] | N.N. Krasovskii, "Theory of control by motion" , Moscow (1968) (In Russian) |

| [4] | N.N. Krasovskii, "On the stabilization of dynamic systems by supplementary forces" Diff. Eq. , 1 : 1 (1965) pp. 1–9 Differentsial'nye Uravneniya , 1 : 1 (1963) pp. 5–16 |

| [5] | N.N. Krasovskii, "Theory of optimal control systems" , Mechanics in the USSR during 50 years , 1 , Moscow (1968) pp. 179–244 (In Russian) |

| [6] | N.N. Krasovskii, "On mean-square optimum stabilization at damped random perturbations" J. Appl. Math. Mech. , 25 (1961) pp. 1212–1227 Prikl. Mat. Mekh. , 25 : 5 (1961) pp. 806–817 |

| [7] | N.N. Krasovskii, A.I. Subbotin, "Game-theoretical control problems" , Springer (1988) (Translated from Russian) |

| [8] | V.G. Boltyanskii, "Mathematical methods of optimal control" , Holt, Rinehart & Winston (1971) (Translated from Russian) |

| [9] | V.G. Boltyanskii, "Optimal control of discrete systems" , Wiley (1978) (Translated from Russian) |

| [10] | A.M. Letov, "Mathematical theory of control processes" , Moscow (1981) (In Russian) |

| [11] | J.-L. Lions, "Optimal control of systems governed by partial differential equations" , Springer (1971) (Translated from French) |

| [12] | R.E. Kalman, "On the general theory of control systems" , Proc. 1-st Internat. Congress Internat. Fed. Autom. Control , 2 , Moscow (1960) pp. 521–547 |

| [13] | R. Kalman, R. Bucy, "New results in linear filtering and prediction theory" Proc. Amer. Soc. Mech. Engineers Ser. 1.D , 83 (1961) pp. 95–108 |

| [14] | E.B. Lee, L. Markus, "Foundations of optimal control theory" , Wiley (1967) |

| [15] | A.G. Butkovskii, "Structural theory of distributed systems" , Horwood (1983) (Translated from Russian) |

| [16] | A.N. Kolmogorov, E.F. Mishchenko, L.S. Pontryagin, "A probability problem of optimal control" Soviet Math. Dokl. , 3 : 4 (1962) pp. 1143–1145 Dokl. Akad. Nauk SSSR , 145 : 5 (1962) pp. 993–995 |

| [17] | R.S. Liptser, A.N. Shiryaev, "Statistics of random processes" , 1–2 , Springer (1977–1978) (Translated from Russian) |

| [18] | K.J. Åström, "Introduction to stochastic control theory" , Acad. Press (1970) |

| [19] | I.Ya. Kats, N.N. Krasovskii, "On the stability of systems with random parameters" J. Appl. Math. Mech. , 24 (1960) pp. 1225–1246 Prikl. Mat. Mekh. , 24 : 5 (1960) pp. 809–823 |

| [20] | W.M. Wonham, "On the separation theorem of stochastic control" SIAM J. Control , 6 (1968) pp. 312–326 |

| [21] | N.V. Krylov, "Controlled diffusion processes" , Springer (1980) (Translated from Russian) |

| [22] | A.B. Kurzhanskii, "Control and observability under conditions of uncertainty" , Moscow (1977) (In Russian) |

| [23] | Ya.Z. Tsypkin, "Foundations of the theory of learning systems" , Acad. Press (1973) (Translated from Russian) |

| [24] | P. Eykhoff, "Basics of identification of control systems" , Moscow (1975) (In Russian; translated from English) |

Comments

An optimal synthesis control is usually called an optimal closed-loop control or an optimal feedback control in the Western literature, while an optimal programming control is usually called an optimal open-loop control. See also Optimal control, mathematical theory of.

For a detailed discussion of when optimal open-loop controls can be used to find optimal closed-loop controls see [a11], [a12].

In the formulation of an optimal control problem one distinguishes problems with a terminal index, with an integral index, or with a combination of both, as expressed by equation (4). Instead of "index", and depending on the particular application, one also speaks of a "cost function" (to be minimized) or a "performance index" (usually to be maximized).

In general, analytic solutions to optimal control problems do not exist. A noteable exception is the case where the system is described by a linear equation:

|

and the cost function by a quadratic equation:

|

Here  ,

,  ,

,  ,

,  ,

,  ,

,  , and

, and  are matrices of appropriate sizes. Moreover,

are matrices of appropriate sizes. Moreover,  ,

,  ,

,  , and the transpose is denoted by

, and the transpose is denoted by  . The final time is supposed to be fixed here. The solution to this optimal control problem is

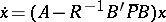

. The final time is supposed to be fixed here. The solution to this optimal control problem is

|

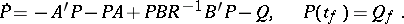

where the  -matrix

-matrix  satisfies the so-called Riccati equation;

satisfies the so-called Riccati equation;

|

If the pair  is controllable and the pair

is controllable and the pair  is observable, where the

is observable, where the  -matrix

-matrix  is defined by

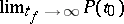

is defined by  , then

, then  exists; it will be denoted by

exists; it will be denoted by  . The

. The  solution of

solution of  is asymptotically stable. The conditions on controllability and observability can be replaced by the weaker conditions on stabilizability and detectability, respectively; see [a13]. The notions of controllability, observability, etc. are properties of the system and as such belong to the field of mathematical system theory.

is asymptotically stable. The conditions on controllability and observability can be replaced by the weaker conditions on stabilizability and detectability, respectively; see [a13]. The notions of controllability, observability, etc. are properties of the system and as such belong to the field of mathematical system theory.
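The convergence of the Riccati solution as the horizon grows can be observed numerically in the scalar case. The sketch below is an illustration under assumptions made here: a scalar system $\dot{x} = a x + b u$ with cost $\frac{1}{2} q_f x(t_f)^2 + \frac{1}{2} \int (q x^2 + r u^2)\, dt$, for which the Riccati equation reads $\dot{k} = -2 a k + (b^2 / r) k^2 - q$, $k(t_f) = q_f$. It is integrated backward from the final time by a plain Euler scheme; the function names and parameter values are hypothetical.

```python
import math


def riccati_backward(a, b, q, r, q_f, horizon, steps):
    """Euler integration of dk/ds = 2*a*k - (b*b/r)*k*k + q, k(0) = q_f, s = t_f - t."""
    ds = horizon / steps
    k = q_f
    for _ in range(steps):
        k += ds * (2.0 * a * k - (b * b / r) * k * k + q)
    return k


def algebraic_riccati_root(a, b, q, r):
    """Positive root of the stationary equation 2*a*k - (b*b/r)*k*k + q = 0."""
    return r * (a + math.sqrt(a * a + b * b * q / r)) / (b * b)


a, b, q, r = 1.0, 1.0, 1.0, 1.0          # unstable open-loop system (a > 0)
k_far = riccati_backward(a, b, q, r, q_f=0.0, horizon=10.0, steps=10000)
k_bar = algebraic_riccati_root(a, b, q, r)
closed_loop = a - b * b * k_bar / r      # coefficient of the closed-loop system

print("k far from the final time:", k_far)
print("stationary value k_bar:   ", k_bar)
print("closed-loop coefficient:  ", closed_loop)
```

Far from the final time the gain is practically stationary, and the stationary feedback $u = -(b \bar{k} / r) x$ renders the closed-loop coefficient negative even though the open-loop system is unstable.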

Another class of problems for which the features of the optimal control function $u$ are well understood is the class of linear time-optimal control problems. The notion of a reachability set helps to visualize the optimal solution; see [a14].

References

| [a1] | W.H. Fleming, R.W. Rishel, "Deterministic and stochastic control" , Springer (1975) |

| [a2] | D.P. Bertsekas, S.E. Shreve, "Stochastic optimal control: the discrete-time case" , Acad. Press (1978) |

| [a3] | D.P. Bertsekas, "Dynamic programming and stochastic control" , Acad. Press (1976) |

| [a4] | M.H.A. Davis, "Martingale methods in stochastic control" , Stochastic Control and Stochastic Differential Systems , Lect. notes in control and inform. sci. , 16 , Springer (1979) pp. 85–117 |

| [a5] | L. Cesari, "Optimization - Theory and applications" , Springer (1983) |

| [a6] | L.W. Neustadt, "Optimization, a theory of necessary conditions" , Princeton Univ. Press (1976) |

| [a7] | V. Barbu, G. Da Prato, "Hamilton–Jacobi equations in Hilbert spaces" , Pitman (1983) |

| [a8] | H.J. Kushner, "Introduction to stochastic control" , Holt (1971) |

| [a9] | P.R. Kumar, P. Varaiya, "Stochastic systems: estimation, identification and adaptive control" , Prentice-Hall (1986) |

| [a10] | L. Ljung, "System identification theory for the user" , Prentice-Hall (1987) |

| [a11] | P. Brunovsky, "On the structure of optimal feedback systems" , Proc. Internat. Congress Mathematicians (Helsinki, 1978) , 2 , Acad. Sci. Fennicae (1980) pp. 841–846 |

| [a12] | H.J. Sussmann, "Analytic stratifications and control theory" , Proc. Internat. Congress Mathematicians (Helsinki, 1978) , 2 , Acad. Sci. Fennicae (1980) pp. 865–871 |

| [a13] | H. Kwakernaak, R. Sivan, "Linear optimal control systems" , Wiley (1972) |

| [a14] | H. Hermes, J.P. LaSalle, "Functional analysis and time optimal control" , Acad. Press (1969) |

| [a15] | A.E. Bryson, Y.-C. Ho, "Applied optimal control" , Ginn (1969) |

Optimal synthesis control. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Optimal_synthesis_control&oldid=16102