Normal distribution

One of the most important probability distributions. The term "normal distribution" is due to K. Pearson (earlier names are Gauss law and Gauss–Laplace distribution). It is used both in relation to probability distributions of random variables (cf. Random variable) and in relation to the joint probability distribution (cf. Joint distribution) of several random variables (that is, to distributions of finite-dimensional random vectors), as well as of random elements and stochastic processes (cf. Random element; Stochastic process). The general definition of a normal distribution reduces to the one-dimensional case.

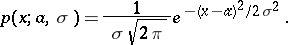

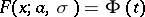

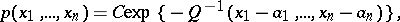

The probability distribution of a random variable  is called normal if it has probability density

is called normal if it has probability density

| (*) |

The family of normal distributions (*) depends, as a rule, on the two parameters  and

and  . Here

. Here  is the mathematical expectation of

is the mathematical expectation of  ,

,  is the variance of

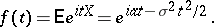

is the variance of  and the characteristic function has the form

and the characteristic function has the form

|

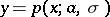
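As a numerical illustration, the closed-form characteristic function can be compared with a direct quadrature of $\mathsf{E}\,e^{itX}$ against the density (*). The sketch below is a minimal check under stated assumptions, not a reference implementation: the function names, the midpoint rule, the integration window of ten standard deviations, and the parameter values are all choices made for this example.

```python
import cmath
import math


def normal_pdf(x, a, sigma):
    """Density (*) with expectation a and standard deviation sigma."""
    z = (x - a) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def char_fn_closed_form(t, a, sigma):
    """Closed form exp(i*a*t - sigma^2 * t^2 / 2)."""
    return cmath.exp(1j * a * t - 0.5 * sigma ** 2 * t ** 2)


def char_fn_numeric(t, a, sigma, half_width=10.0, steps=20000):
    """Midpoint-rule estimate of E[exp(i*t*X)] on a +/- half_width*sigma window."""
    lo = a - half_width * sigma
    h = 2.0 * half_width * sigma / steps
    total = 0j
    for k in range(steps):
        x = lo + (k + 0.5) * h
        total += cmath.exp(1j * t * x) * normal_pdf(x, a, sigma)
    return total * h


a, sigma = 1.5, 0.8  # illustrative values, not taken from the article
for t in (0.0, 0.5, 2.0):
    exact = char_fn_closed_form(t, a, sigma)
    approx = char_fn_numeric(t, a, sigma)
    print(f"t={t}: closed form {exact:.6f}, numeric {approx:.6f}")
```

The two columns should agree to the printed precision; at $t = 0$ both reduce to the total probability 1.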

The normal density curve  is symmetric about the ordinate passing through

is symmetric about the ordinate passing through  and has there its unique maximum

and has there its unique maximum  . As

. As  decreases, the normal distribution curve becomes more and more pointed. A change in

decreases, the normal distribution curve becomes more and more pointed. A change in  with constant

with constant  does not change the shape of the curve and causes only a shift along the

does not change the shape of the curve and causes only a shift along the  -axis. The area under a normal density curve is 1. When

-axis. The area under a normal density curve is 1. When  and

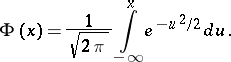

and  , the corresponding distribution function is

, the corresponding distribution function is

|

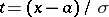
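The geometric properties of the density curve can be checked numerically. The following sketch evaluates the peak height for several values of the standard deviation, estimates the area under the curve with the trapezoidal rule, and expresses the standard normal distribution function through `math.erfc`. The helper names, the integration window, and the sample parameters are assumptions made for illustration.

```python
import math


def normal_pdf(x, a=0.0, sigma=1.0):
    """Normal density with expectation a and standard deviation sigma."""
    z = (x - a) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def std_normal_cdf(x):
    """Phi(x), written through the complementary error function."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))


def area_under_pdf(a, sigma, half_width=10.0, steps=20000):
    """Trapezoidal estimate of the area on a +/- half_width*sigma window."""
    lo = a - half_width * sigma
    h = 2.0 * half_width * sigma / steps
    ys = [normal_pdf(lo + k * h, a, sigma) for k in range(steps + 1)]
    return h * (sum(ys) - 0.5 * (ys[0] + ys[-1]))


a = 2.0  # illustrative location parameter
for sigma in (2.0, 1.0, 0.5):
    peak = normal_pdf(a, a, sigma)
    print(f"sigma={sigma}: peak={peak:.5f}, area={area_under_pdf(a, sigma):.6f}")

# Symmetry about x = a, and Phi(0) = 1/2 in the standard case.
print(math.isclose(normal_pdf(a - 0.7, a, 1.0), normal_pdf(a + 0.7, a, 1.0)))
print(std_normal_cdf(0.0))
```

The peak grows as the standard deviation shrinks while the estimated area stays at 1, which is the "more and more pointed" behaviour described above.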

In general, the distribution function  of (*) can be computed by the formula

of (*) can be computed by the formula  , where

, where  . For

. For  (and several of its derivatives) extensive tables have been compiled (see, for example, [1], [2], and Probability integral). For a normal distribution the probability that

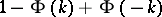

(and several of its derivatives) extensive tables have been compiled (see, for example, [1], [2], and Probability integral). For a normal distribution the probability that  is

is  and it decreases very rapidly with increasing

and it decreases very rapidly with increasing  (see the Table).'

(see the Table).'

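Table. Probability that $|X - a| > k\sigma$.

| $k$ | Probability |
| --- | --- |
| 1 | 0.31731 |
| 2 | 0.04550 |
| 3 | 0.00270 |
| 4 | 0.00006 |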
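The reduction of the general distribution function to the standard one, and the two-sided tail probability $1 - \Phi(k) + \Phi(-k)$, can be evaluated directly instead of being read from printed tables. This is a minimal sketch using only `math.erfc` from the Python standard library; the function names are chosen here for illustration.

```python
import math


def std_normal_cdf(x):
    """Phi(x) for the standard normal distribution (a = 0, sigma = 1)."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))


def normal_cdf(x, a, sigma):
    """F(x; a, sigma) = Phi((x - a) / sigma)."""
    return std_normal_cdf((x - a) / sigma)


def two_sided_tail(k):
    """P(|X - a| > k*sigma) = 1 - Phi(k) + Phi(-k); independent of a and sigma."""
    return 1.0 - std_normal_cdf(k) + std_normal_cdf(-k)


for k in (1, 2, 3, 4):
    print(f"k={k}: P(|X - a| > k*sigma) = {two_sided_tail(k):.5f}")
```

Because the tail probability depends only on $k$, the same numbers apply to every normal distribution regardless of its expectation and variance.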

In many practical problems, when analyzing normal distributions one can, therefore, ignore the possibility of a deviation from $a$ in excess of $3\sigma$ (the three-sigma rule); the corresponding probability, as is clear from the Table, is less than 0.003. The quartile deviation for a normal distribution is $0.67449\sigma$.
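Both constants in this paragraph can be recomputed with `statistics.NormalDist` from the Python standard library (available since Python 3.8). The sketch below is illustrative only; it treats the quartile deviation as the value $q\sigma$ for which $|X - a| < q\sigma$ has probability 1/2, so that $q$ is the 0.75-quantile of the standard normal distribution.

```python
from statistics import NormalDist

std = NormalDist()  # standard normal: expectation 0, standard deviation 1

# Probability of a deviation from the expectation exceeding three sigma.
p_outside_3_sigma = 2.0 * std.cdf(-3.0)

# Quartile deviation in units of sigma: the 0.75-quantile of the
# standard normal distribution.
quartile_factor = std.inv_cdf(0.75)

print(f"P(|X - a| > 3*sigma) = {p_outside_3_sigma:.5f}")
print(f"quartile deviation   = {quartile_factor:.5f} * sigma")
```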

Normal distributions occur in a large number of applications. There are some notable attempts at explaining this fact. A theoretical basis for the exceptional role of the normal distribution is given by the limit theorems of probability theory (see also Laplace theorem; Lyapunov theorem). Qualitatively, the result can be stated in the following manner: A normal distribution is a good approximation whenever the relevant random variable is the sum of a large number of independent random variables the largest of which is small in comparison with the whole sum (see Central limit theorem).
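The qualitative statement can be illustrated by simulation. The sketch below sums independent uniform random variables, standardizes the sum, and compares the empirical probabilities of staying within one, two, and three standard deviations with the normal values. The choice of uniform summands, the number of terms (30), the sample size, and the seed are all assumptions made for this example; it is an illustration, not a proof or a test of normality.

```python
import random
import statistics


def standardized_sum(rng, n_terms):
    """Sum of n_terms independent Uniform(0, 1) variables, centred and scaled.

    Each term has expectation 1/2 and variance 1/12.
    """
    s = sum(rng.random() for _ in range(n_terms))
    mean = n_terms / 2.0
    std = (n_terms / 12.0) ** 0.5
    return (s - mean) / std


rng = random.Random(12345)  # fixed seed so that the run is repeatable
samples = [standardized_sum(rng, 30) for _ in range(20000)]

normal = statistics.NormalDist()
for k in (1.0, 2.0, 3.0):
    empirical = sum(abs(z) <= k for z in samples) / len(samples)
    limit = normal.cdf(k) - normal.cdf(-k)
    print(f"|Z| <= {k}: empirical {empirical:.4f}, normal {limit:.4f}")
```

No single summand dominates the total here, which is the situation described above; the empirical and normal columns should be close but not identical, since the sample is finite.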

A normal distribution can also appear as an exact solution of certain problems (within the framework of an accepted mathematical model of the phenomenon). This is so in the theory of random processes (in one of the basic models of Brownian motion). Classic examples of a normal distribution arising as an exact one are due to C.F. Gauss (the law of distribution of errors of observation) and J. Maxwell (the law of distribution of velocities of molecules) (see also Independence; Characterization theorems).

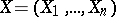

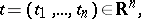

The distribution of a random vector  in

in  , or the joint distribution of random variables

, or the joint distribution of random variables  , is called normal (multivariate normal) if for any fixed

, is called normal (multivariate normal) if for any fixed  the scalar product

the scalar product  either has a normal distribution or is constant (as one sometimes says, has a normal distribution with variance zero). For random elements with values from some vector space

either has a normal distribution or is constant (as one sometimes says, has a normal distribution with variance zero). For random elements with values from some vector space  this definition is retained when

this definition is retained when  is replaced by any element

is replaced by any element  of the adjoint space

of the adjoint space  and the scalar product

and the scalar product  is replaced by a linear functional

is replaced by a linear functional  . The joint distribution of several random variables

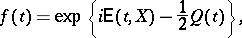

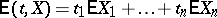

. The joint distribution of several random variables  has characteristic function

has characteristic function

|

|

where

|

is a linear form,

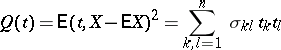

|

is a non-negative definite quadratic form, and  is the covariance matrix of

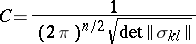

is the covariance matrix of  . In the positive-definite case the corresponding normal distribution has the probability density

. In the positive-definite case the corresponding normal distribution has the probability density

|

where  is the quadratic form inverse to

is the quadratic form inverse to  , the parameters

, the parameters  are the mathematical expectations of

are the mathematical expectations of  , respectively, and

, respectively, and

|

is constant. The total number of parameters specifying the normal distribution is

|

and grows rapidly with  (it is 2 for

(it is 2 for  , 20 for

, 20 for  , and 65 for

, and 65 for  ). A multivariate normal distribution is the basic model of multi-dimensional statistical analysis. It is also used in the theory of stochastic processes (where normal distributions in infinite-dimensional spaces are examined; see Random element, and also Wiener measure; Wiener process; Gaussian process).

). A multivariate normal distribution is the basic model of multi-dimensional statistical analysis. It is also used in the theory of stochastic processes (where normal distributions in infinite-dimensional spaces are examined; see Random element, and also Wiener measure; Wiener process; Gaussian process).
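The parameter count and the density in the positive-definite case can be made concrete for two variables. The sketch below counts the parameters as the expectations plus the distinct entries of the symmetric covariance matrix, and evaluates the two-dimensional density from the normalizing constant and the inverse quadratic form. The function names and the sample covariance values are hypothetical, introduced only for this illustration.

```python
import math


def parameter_count(n):
    """n expectations plus n*(n+1)/2 distinct covariance entries."""
    return n + n * (n + 1) // 2


def bivariate_normal_pdf(x1, x2, a1, a2, s11, s12, s22):
    """Density C * exp(-Q/2) for n = 2, covariance matrix [[s11, s12], [s12, s22]]."""
    det = s11 * s22 - s12 * s12
    if det <= 0.0 or s11 <= 0.0:
        raise ValueError("covariance matrix must be positive definite")
    d1, d2 = x1 - a1, x2 - a2
    # Q is the quadratic form of the inverse covariance matrix.
    q = (s22 * d1 * d1 - 2.0 * s12 * d1 * d2 + s11 * d2 * d2) / det
    c = 1.0 / (2.0 * math.pi * math.sqrt(det))  # (2*pi)^(-n/2) * det^(-1/2), n = 2
    return c * math.exp(-0.5 * q)


for n in (1, 5, 10):
    # Same count written in the closed form (n + 1)(n + 2)/2 - 1.
    assert parameter_count(n) == (n + 1) * (n + 2) // 2 - 1
    print(f"n={n}: {parameter_count(n)} parameters")

# Density at the expectation equals the constant C.
print(bivariate_normal_pdf(0.0, 0.0, 0.0, 0.0, 1.0, 0.5, 2.0))
```

When the off-diagonal covariance is zero, the function reduces to the product of two one-dimensional normal densities, which is a quick sanity check on the formula.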

Of the important properties of normal distributions the following should be mentioned. The sum $X$ of two independent random variables $X_1$ and $X_2$ having normal distributions also has a normal distribution; conversely, if $X = X_1 + X_2$ has a normal distribution and $X_1$ and $X_2$ are independent, then the distributions of $X_1$ and $X_2$ are normal (Cramér's theorem). This property has a certain "stability": if the distribution of $X$ is "close" to normal, then so are the distributions of $X_1$ and $X_2$. Some other important distributions are connected with normal ones (see Logarithmic normal distribution; Non-central "chi-squared" distribution; Student distribution; Wishart distribution; Fisher $F$-distribution; Hotelling $T^2$-distribution; "Chi-squared" distribution). For an approximate representation of distributions close to normal, series like Edgeworth series and Gram–Charlier series are widely used.
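The direct half of the first property (the sum of independent normal variables is normal, with expectations and variances adding) can be illustrated with `statistics.NormalDist`, whose `+` operator for two instances assumes the variables are independent. The parameter values, sample size, and seed below are arbitrary choices for this sketch; it does not illustrate the converse statement (Cramér's theorem).

```python
import random
from statistics import NormalDist

x1 = NormalDist(mu=1.0, sigma=2.0)
x2 = NormalDist(mu=-3.0, sigma=1.5)

# For independent normal variables, expectations add and variances add.
total = x1 + x2
print(f"exact:     mean={total.mean:.4f}, variance={total.variance:.4f}")

# Monte Carlo cross-check: draw the two variables independently and add them.
rng = random.Random(2024)  # fixed seed so that the run is repeatable
draws = [
    rng.gauss(x1.mean, x1.stdev) + rng.gauss(x2.mean, x2.stdev)
    for _ in range(50000)
]
fitted = NormalDist.from_samples(draws)
print(f"simulated: mean={fitted.mean:.4f}, variance={fitted.variance:.4f}")
```

The simulated mean and variance should be close to the exact values, with the remaining difference due to sampling error.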

Concerning problems connected with estimators of parameters of normal distributions using results of observations see Unbiased estimator. Concerning testing the hypothesis of normality see Non-parametric methods in statistics. See also Probability graph paper.

References

| [1] | L.N. Bol'shev, N.V. Smirnov, "Tables of mathematical statistics" , Libr. math. tables , 46 , Nauka (1983) (In Russian) (Processed by L.S. Bark and E.S. Kedrova) |

| [2] | , Tables of the normal probability integral, the normal density, and its normal derivatives , Moscow (1960) (In Russian) |

| [3] | B.V. Gnedenko, "The theory of probability" , Chelsea, reprint (1962) (Translated from Russian) |

| [4] | H. Cramér, "Mathematical methods of statistics" , Princeton Univ. Press (1946) |

| [5] | M.G. Kendall, A. Stuart, "The advanced theory of statistics" , 1. Distribution theory , Griffin (1977) |

| [6] | M.G. Kendall, A. Stuart, "The advanced theory of statistics" , 2. Inference and relationship , Griffin (1979) |

Comments

References

| [a1] | N.L. Johnson, S. Kotz, "Distributions in statistics" , 2. Continuous univariate distributions , Wiley (1970) |

| [a2] | N.L. Johnson, S. Kotz, "Distributions in statistics" , 3. Continuous multivariate distributions , Wiley (1972) |

| [a3] | E.S. Pearson, H.O. Hartley, "Biometrika tables for statisticians" , 1 , Cambridge Univ. Press (1966) |

Normal distribution. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Normal_distribution&oldid=16106