Convergence in probability

From Encyclopedia of Mathematics

Convergence of a sequence of random variables  defined on a probability space

defined on a probability space  , to a random variable

, to a random variable  , defined in the following way:

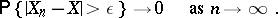

, defined in the following way:  if for any

if for any  ,

,

|
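As a concrete illustration of the definition, take $X_n = S_n / n$, the sample mean of $n$ independent Bernoulli($p$) variables, and the constant limit $X = p$. By the weak law of large numbers, $X_n \to p$ in probability. The sketch below (the function name `deviation_probability` is hypothetical, chosen for this example) computes $\mathsf{P}\{|X_n - p| > \epsilon\}$ exactly from the binomial distribution rather than by simulation, so the decay towards $0$ as $n$ grows can be checked directly. Chebyshev's inequality gives the upper bound $p(1-p)/(n\epsilon^2)$ for comparison.

```python
from math import exp, lgamma, log


def deviation_probability(n: int, p: float, eps: float) -> float:
    """Exact P(|S_n/n - p| > eps) for S_n ~ Binomial(n, p), with 0 < p < 1."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    total = 0.0
    for k in range(n + 1):
        # Only outcomes whose sample mean k/n deviates from p by more than eps count.
        if abs(k / n - p) > eps:
            # Log of the binomial pmf C(n, k) p^k (1-p)^(n-k); lgamma avoids
            # overflow of C(n, k) for large n.
            log_pmf = (lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
                       + k * log(p) + (n - k) * log(1.0 - p))
            total += exp(log_pmf)
    return total


if __name__ == "__main__":
    p, eps = 0.5, 0.1
    for n in (10, 100, 1000):
        prob = deviation_probability(n, p, eps)
        chebyshev = min(1.0, p * (1 - p) / (n * eps ** 2))
        print(f"n={n:5d}  P(|X_n - p| > {eps}) = {prob:.3e}  "
              f"(Chebyshev bound {chebyshev:.3e})")
```

For $n = 10$, $p = 1/2$, $\epsilon = 0.1$ the deviation probability is $352/1024 = 0.34375$; the printed values then shrink rapidly with $n$, as the definition requires.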

In mathematical analysis, this form of convergence is called convergence in measure. Convergence in distribution follows from convergence in probability.
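The implication can be seen from a standard two-sided estimate on the distribution functions $F_{X_n}$ and $F_X$. For any real $x$ and any $\epsilon > 0$, the inclusions $\{X_n \le x\} \subseteq \{X \le x + \epsilon\} \cup \{|X_n - X| > \epsilon\}$ and $\{X \le x - \epsilon\} \subseteq \{X_n \le x\} \cup \{|X_n - X| > \epsilon\}$ give

$$
\begin{aligned}
F_{X_n}(x) &\le F_X(x + \epsilon) + \mathsf{P}\{|X_n - X| > \epsilon\}, \\
F_X(x - \epsilon) &\le F_{X_n}(x) + \mathsf{P}\{|X_n - X| > \epsilon\}.
\end{aligned}
$$

Letting $n \to \infty$ yields $F_X(x - \epsilon) \le \liminf_n F_{X_n}(x) \le \limsup_n F_{X_n}(x) \le F_X(x + \epsilon)$, and letting $\epsilon \downarrow 0$ at a continuity point $x$ of $F_X$ gives $F_{X_n}(x) \to F_X(x)$.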

Comments

See also Weak convergence of probability measures; Convergence, types of; Distributions, convergence of.

How to Cite This Entry:

Convergence in probability. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Convergence_in_probability&oldid=15590

Convergence in probability. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Convergence_in_probability&oldid=15590

This article was adapted from an original article by V.I. Bityutskov (originator), which appeared in Encyclopedia of Mathematics - ISBN 1402006098. See original article