Automatic control theory

The science of methods for determining laws for controlling systems that can be realized by automatic devices. Historically, such methods were first applied to processes of a mainly technical nature [1]. For example, an aircraft in flight is a system whose control laws ensure that it remains on the required trajectory. These laws are realized by a system of transducers (measuring devices) and actuators known as the automatic pilot. This early development had three causes. First, many control systems had already been identified by classical science (to identify a control system means to write down its mathematical model, e.g. relationships such as (1) and (2) below). Second, long before automatic control theory emerged, knowledge of the fundamental laws of nature had produced a well-developed mathematical apparatus of differential equations, in particular the theory of stability of motion [2]. Third, engineers had discovered the idea of a feedback law (see below) and had found means of realizing it.

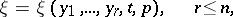

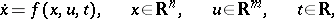

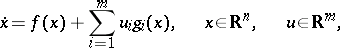

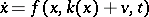

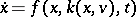

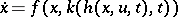

The simplest control systems are described by an ordinary (vector) differential equation

| (1) |

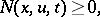

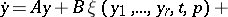

and an inequality

| (2) |

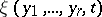

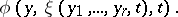

where  is the state vector of the system,

is the state vector of the system,  is the vector of controls which can be suitably chosen, and

is the vector of controls which can be suitably chosen, and  is time. Equation (1) is the mathematical representation of the laws governing the control system, while the inequality (2) establishes its domain of definition.

is time. Equation (1) is the mathematical representation of the laws governing the control system, while the inequality (2) establishes its domain of definition.

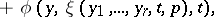

Let  be some given class of functions

be some given class of functions  (e.g. piecewise-continuous functions) whose numerical values satisfy (2). Any function

(e.g. piecewise-continuous functions) whose numerical values satisfy (2). Any function  will be called a permissible control. Equation (1) will be called a mathematical model of the control system if:

will be called a permissible control. Equation (1) will be called a mathematical model of the control system if:

1) A domain  in which the function

in which the function  is defined has been specified;

is defined has been specified;

2) A time interval  (or

(or  , if

, if  ) during which the motion

) during which the motion  is observed, has been specified;

is observed, has been specified;

3) A class of permissible controls has been specified;

4) The domain  and the function

and the function  are such that equation (1) has a unique solution defined for any

are such that equation (1) has a unique solution defined for any  ,

,  , whatever the permissible control

, whatever the permissible control  . Furthermore,

. Furthermore,  in (1) is always assumed to be smooth with respect to all arguments.

in (1) is always assumed to be smooth with respect to all arguments.

Let $x^0 = x(t_0)$ be the initial and $x^1 = x(T)$ the (desired) final state of the control system. The state $x^1$ is known as the target of the control. Automatic control theory must solve two major problems: the problem of programming, i.e. of finding those controls $u(t)$ that allow the target $x^1$ to be reached from $x^0$; and the determination of feedback laws (see below). Both problems are solved under the assumption that (1) is completely controllable.

The system (1) is called completely controllable if, for any  , there is at least one permissible control

, there is at least one permissible control  and one interval

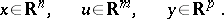

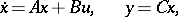

and one interval  for which the control target is attainable. If this condition is not met, one says that the object is incompletely controllable. This gives rise to a preliminary problem: Given the mathematical model (1), find the criteria of controllability. At the time of writing (1977) only insignificant progress has been made towards its solution. If equation (1) is linear

for which the control target is attainable. If this condition is not met, one says that the object is incompletely controllable. This gives rise to a preliminary problem: Given the mathematical model (1), find the criteria of controllability. At the time of writing (1977) only insignificant progress has been made towards its solution. If equation (1) is linear

| (3) |

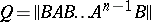

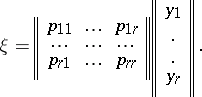

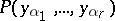

where  are stationary matrices, the criterion of complete controllability is formulated as follows: For (3) to be completely controllable it is necessary and sufficient that the rank of the matrix

are stationary matrices, the criterion of complete controllability is formulated as follows: For (3) to be completely controllable it is necessary and sufficient that the rank of the matrix

| (4) |

be  . The matrix (4) is known as the controllability matrix.

. The matrix (4) is known as the controllability matrix.
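The rank test (4) is easy to mechanize. The following Python sketch assumes that $A$ and $B$ are given as lists of rows with integer or rational entries; it forms the controllability matrix and computes its rank exactly with `fractions.Fraction`, which avoids the tolerance issues of a floating-point rank. The helper names are illustrative only.

```python
from fractions import Fraction

def matmul(P, Q):
    """Product of two matrices stored as lists of rows."""
    return [[sum(P[i][m] * Q[m][j] for m in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def rank(M):
    """Exact rank by Gauss-Jordan elimination over the rationals."""
    rows = [[Fraction(v) for v in row] for row in M]
    r = 0
    for c in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c] / rows[r][c]
                rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r

def controllability_matrix(A, B):
    """The n x (n*r) block matrix ||B, AB, ..., A^(n-1) B|| of (4)."""
    n = len(A)
    blocks, L = [], B
    for _ in range(n):
        blocks.append(L)
        L = matmul(A, L)
    return [sum((blk[i] for blk in blocks), []) for i in range(n)]

def completely_controllable(A, B):
    """Rank test (4): x' = A x + B u is completely controllable iff the rank equals n."""
    return rank(controllability_matrix(A, B)) == len(A)

# Double integrator x1' = x2, x2' = u (controllable) and a diagonal system whose
# control acts only on the first coordinate (not controllable).
print(completely_controllable([[0, 1], [0, 0]], [[0], [1]]))
print(completely_controllable([[1, 0], [0, 2]], [[1], [0]]))
```

In the second example the control cannot influence $x_2$ at all, so every column of the controllability matrix has a zero second entry and the rank drops to 1.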

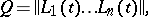

If  are known differentiable functions of

are known differentiable functions of  , the controllability matrix is given by

, the controllability matrix is given by

| (5) |

where

|

The following theorem applies to this case: For (3) to be completely controllable it is sufficient if at at least one point  the rank of the matrix (5) equals

the rank of the matrix (5) equals  [3]. Criteria of controllability for non-linear systems are unknown (up to 1977).

[3]. Criteria of controllability for non-linear systems are unknown (up to 1977).

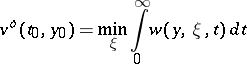
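As a worked illustration (a hypothetical example, using the recursion $L_1 = B$, $L_{k+1} = A L_k - dL_k/dt$), take $n = 2$, $r = 1$ with a constant $A$ and a time-dependent $B$:

$$ A = \begin{pmatrix} 0 & 1 \\ 0 & 0 \end{pmatrix}, \qquad B(t) = \begin{pmatrix} 0 \\ t \end{pmatrix}, $$

$$ L_1 = \begin{pmatrix} 0 \\ t \end{pmatrix}, \qquad L_2 = A L_1 - \frac{dL_1}{dt} = \begin{pmatrix} t \\ 0 \end{pmatrix} - \begin{pmatrix} 0 \\ 1 \end{pmatrix} = \begin{pmatrix} t \\ -1 \end{pmatrix}, $$

$$ \det \| L_1, L_2 \| = \det \begin{pmatrix} 0 & t \\ t & -1 \end{pmatrix} = -t^2 . $$

The rank is 2 at every $t \ne 0$, so the sufficient condition holds on any interval $[t_0, T]$ that contains a non-zero instant, even though $B(0) = 0$.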

The first principal task of automatic control theory is to select the permissible controls that ensure that the target  is attained. There are two methods of solving this problem. In the first method, the chief designer of the system (1) arbitrarily determines a certain type of motion for which the target

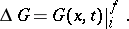

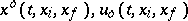

is attained. There are two methods of solving this problem. In the first method, the chief designer of the system (1) arbitrarily determines a certain type of motion for which the target  is attainable and selects a suitable control. This solution of the programming problem is in fact used in many instances. In the second method a permissible control minimizing a given cost of controls is sought. The mathematical formulation of the problem is then as follows. The data are: the mathematical model of the controlled system (1) and (2); the boundary conditions for the vector

is attainable and selects a suitable control. This solution of the programming problem is in fact used in many instances. In the second method a permissible control minimizing a given cost of controls is sought. The mathematical formulation of the problem is then as follows. The data are: the mathematical model of the controlled system (1) and (2); the boundary conditions for the vector  , which will be symbolically written as

, which will be symbolically written as

| (6) |

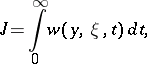

a smooth function  ; and the cost of the controls used

; and the cost of the controls used

| (7) |

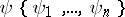

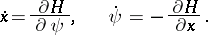

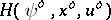

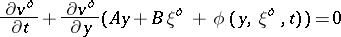

The programming problem is to find, among the permissible controls, a control satisfying conditions (6) and yielding the minimum value of the functional (7). Necessary conditions for a minimum for this non-classical variational problem are given by the L.S. Pontryagin "maximum principle" [4] (cf. Pontryagin maximum principle). An auxiliary vector  and the auxiliary scalar function

and the auxiliary scalar function

| (8) |

are introduced. The function  makes it possible to write equation (1) and an equation for the vector

makes it possible to write equation (1) and an equation for the vector  in the following form:

in the following form:

| (9) |

Equation (9) is linear and homogeneous with respect to  and has a unique continuous solution, which is defined for any initial condition

and has a unique continuous solution, which is defined for any initial condition  and

and  . The vector

. The vector  will be called a non-zero vector if at least one of its components does not vanish for

will be called a non-zero vector if at least one of its components does not vanish for  . The following theorem is true: For the curve

. The following theorem is true: For the curve  to constitute a strong minimum of the functional (7) it is necessary that a non-zero continuous vector

to constitute a strong minimum of the functional (7) it is necessary that a non-zero continuous vector  , as defined by equation (9), exists at which the function

, as defined by equation (9), exists at which the function  has a (pointwise) maximum with respect to

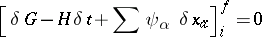

has a (pointwise) maximum with respect to  , and that the transversality condition

, and that the transversality condition

|

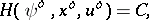

is met. Let  be solutions of the corresponding problem. It has then been shown that for stationary systems the function

be solutions of the corresponding problem. It has then been shown that for stationary systems the function  satisfies the condition

satisfies the condition

| (10) |

where  is a constant, so that (10) is a first integral. The solution

is a constant, so that (10) is a first integral. The solution  is known as a program control.

is known as a program control.
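A minimal worked example (hypothetical, chosen for transparency) shows how (8)–(10) are used. It assumes the Hamiltonian in the form $H = \psi_0 \omega + \sum \psi_i f_i$ with $\psi_0 \le 0$, normalized to $\psi_0 = -1$. Take $n = r = 1$, $\dot x = u$, $x(0) = 0$, $x(1) = 1$, no constraint (2), and $\omega = u^2$:

$$ H = -u^2 + \psi_1 u, \qquad \frac{\partial H}{\partial u} = -2u + \psi_1 = 0 \;\Rightarrow\; u = \tfrac{1}{2}\psi_1, $$

$$ \dot\psi_1 = -\frac{\partial H}{\partial x} = 0 \;\Rightarrow\; \psi_1 = \text{const}, \qquad u^*(t) = \text{const}, \qquad x^*(t) = u^* t, $$

$$ x^*(1) = 1 \;\Rightarrow\; u^*(t) \equiv 1, \quad \psi_1 \equiv 2, \quad H(\psi^*, x^*, u^*) = -1 + 2 = 1, \quad I = \int_0^1 1\, dt = 1 . $$

Since $-u^2$ is concave, $u = \psi_1/2$ is indeed the pointwise maximum of $H$, and the constant value $H \equiv 1$ is the first integral (10) of this stationary problem.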

Let  be a (not necessarily optimal) program control. It was found that the knowledge of only one program control is insufficient to attain the target. This is because the program

be a (not necessarily optimal) program control. It was found that the knowledge of only one program control is insufficient to attain the target. This is because the program  is usually unstable with respect to, however small, changes in the problem, in particular to the most important changes, those in the initial and final values

is usually unstable with respect to, however small, changes in the problem, in particular to the most important changes, those in the initial and final values  or, in other words, the problem is ill-posed. However, this ill-posedness is such that it can be corrected by means of automatic stabilization, based solely on the "feedback principle" . Hence the second main task of control: the determination of feedback laws.

or, in other words, the problem is ill-posed. However, this ill-posedness is such that it can be corrected by means of automatic stabilization, based solely on the "feedback principle" . Hence the second main task of control: the determination of feedback laws.

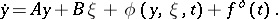

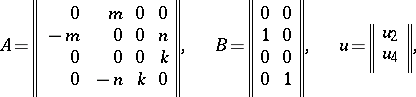

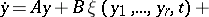
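The instability of an open-loop program can be seen numerically. The Python sketch below uses a hypothetical scalar plant $\dot x = x + u$, a hand-chosen program motion $x^*(t) = e^{-t}$ with program control $u^*(t) = -2e^{-t}$, and an initial error of $10^{-3}$. Without feedback the error grows like $e^{t}$; adding the correction $-k(x - x^*)$ with an illustrative gain $k = 2$ makes it decay like $e^{-t}$. Forward Euler integration is used only for simplicity.

```python
import math

def simulate(x0, control, T=5.0, dt=1e-4):
    """Forward-Euler integration of the hypothetical plant dx/dt = x + u on [0, T]."""
    x, t = x0, 0.0
    for _ in range(int(round(T / dt))):
        x += dt * (x + control(x, t))
        t += dt
    return x

def x_prog(t):
    """Program motion x*(t) = exp(-t), chosen by hand for the nominal start x(0) = 1."""
    return math.exp(-t)

def u_prog(t):
    """Program control u*(t) = dx*/dt - x*(t) = -2 exp(-t)."""
    return -2.0 * math.exp(-t)

def open_loop(x, t):
    # Apply the precomputed program regardless of the actual state.
    return u_prog(t)

def closed_loop(x, t, k=2.0):
    # Program control plus feedback on the deviation; the deviation obeys de/dt = (1 - k) e.
    return u_prog(t) - k * (x - x_prog(t))

delta = 1e-3  # small error in the initial state
err_open = abs(simulate(1.0 + delta, open_loop) - x_prog(5.0))
err_closed = abs(simulate(1.0 + delta, closed_loop) - x_prog(5.0))
print(f"open loop: {err_open:.3g}, with feedback: {err_closed:.3g}")
```

At $t = 5$ the open-loop error is several orders of magnitude larger than the error with feedback, although both runs start from the same perturbed state.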

Let $x$ be the vector of disturbed motion of the system (the deviation from the program motion) and let $u$ be the vector describing the additional deflection of the control device intended to quench the disturbed motion. To realize the deflection $u$, a suitable control source must be provided for in advance. The disturbed motion is described by the equation

$$ \dot x = A x + B u + \varphi(x, u, t) + F(t), \tag{11} $$

where $A$ and $B$ are known matrices determined by the program motion $x^*(t)$, $u^*(t)$ and are assumed to be known functions of time; $\varphi$ is the non-linear part of the expansion of the function $f$; and $F(t)$ is the constantly acting perturbing force, which originates either from an inaccurate determination of the programmed motion or from additional forces neglected in constructing the model (1). Equation (11) is defined in a neighbourhood $\|x\| \le h$, where $h$ is usually quite small, but in certain cases may be any finite positive number or even $\infty$.
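In practice the matrices $A$ and $B$ of the disturbed-motion equation are the Jacobians $\partial f/\partial x$ and $\partial f/\partial u$ evaluated along the program motion, and the non-linear part $\varphi$ is whatever remains of $f$ after subtracting its value and linear terms. A minimal sketch, assuming a hypothetical pendulum model for (1) and central finite differences (rather than symbolic differentiation), computes them at the point $x^* = 0$, $u^* = 0$:

```python
import math

def f(x, u, t):
    """Hypothetical model (1): pendulum, x = (angle, angular rate), scalar torque u."""
    return [x[1], -math.sin(x[0]) + u[0]]

def jacobians(f, x_star, u_star, t, h=1e-6):
    """Central-difference approximations of A = df/dx and B = df/du at (x*, u*, t)."""
    n, r = len(x_star), len(u_star)

    def column(shift_x, shift_u):
        plus = f([a + b for a, b in zip(x_star, shift_x)],
                 [a + b for a, b in zip(u_star, shift_u)], t)
        minus = f([a - b for a, b in zip(x_star, shift_x)],
                  [a - b for a, b in zip(u_star, shift_u)], t)
        return [(p - m) / (2 * h) for p, m in zip(plus, minus)]

    A_cols = [column([h if i == j else 0.0 for i in range(n)], [0.0] * r) for j in range(n)]
    B_cols = [column([0.0] * n, [h if i == j else 0.0 for i in range(r)]) for j in range(r)]
    # Transpose the lists of columns into lists of rows.
    A = [[col[i] for col in A_cols] for i in range(n)]
    B = [[col[i] for col in B_cols] for i in range(n)]
    return A, B

def phi(x, u, t, x_star, u_star, A, B):
    """Non-linear remainder: f(x* + x, u* + u) - f(x*, u*) - A x - B u (uses the model f)."""
    full = f([a + b for a, b in zip(x_star, x)], [a + b for a, b in zip(u_star, u)], t)
    base = f(x_star, u_star, t)
    lin = [sum(A[i][j] * x[j] for j in range(len(x))) +
           sum(B[i][j] * u[j] for j in range(len(u))) for i in range(len(full))]
    return [fv - bv - lv for fv, bv, lv in zip(full, base, lin)]

A, B = jacobians(f, [0.0, 0.0], [0.0], 0.0)
remainder = phi([0.1, 0.0], [0.0], 0.0, [0.0, 0.0], [0.0], A, B)
print(A, B, remainder)
```

For this model $A \approx \begin{pmatrix} 0 & 1 \\ -1 & 0 \end{pmatrix}$, $B \approx (0, 1)^{\mathsf T}$, and the remainder's second component is $x_1 - \sin x_1 \approx x_1^3/6$, i.e. of third order in the deviation.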

It should be noted that, in general, the fact that the system (1) is completely controllable does not mean that the system (11) is completely controllable as well.

One says that (11) is observable along the coordinates $x_{i_1}, \dots, x_{i_k}$, $k \le n$, if one has at one's disposal a set of measuring instruments that continuously determines these coordinates at any moment of time $t$. The significance of this definition can be illustrated by the longitudinal motion of an aircraft: even though aviation is more than 50 years old, no instrument has yet been invented that would measure the disturbance of the angle of attack of the wing or the altitude of flight near the ground. The totality of measured coordinates is called the field of regulation and is denoted by $X_k = (x_{i_1}, \dots, x_{i_k})$, $k \le n$.

Consider the totality of permissible controls $u$ determined over the field $X_k$:

$$ u = u(X_k, \alpha), \tag{12} $$

where $\alpha$ is a vector or matrix parameter. One says that the control (12) represents a feedback law if the closure operation (i.e. substitution of (12) into (11)) yields a system

$$ \dot x = A x + B u(X_k, \alpha) + \varphi\big(x, u(X_k, \alpha), t\big) \tag{13} $$

whose undisturbed motion $x = 0$ is asymptotically stable (cf. Asymptotically-stable solution). The system (13) is said to be asymptotically stable if its undisturbed motion $x = 0$ is asymptotically stable.

Two classes of problems may be formulated in the context of the closed system (13): analytic problems and synthesis problems.

Consider a permissible control (12) given up to the choice of the parameter $\alpha$, e.g. the linear law

$$ u = \alpha X_k . $$

The analytic problem: to determine the domain $S$ of values of the parameter $\alpha$ for which the closed system (13) is asymptotically stable. This domain is constructed by methods developed in the theory of stability of motion (cf. Stability theory), which is extensively employed in the theory of automatic control. In particular, one may mention the methods of frequency analysis; methods based on Lyapunov's first approximation (the theorems of Hurwitz, Routh, etc.), on the direct Lyapunov method of constructing $V$-functions, on the Lyapunov–Poincaré theory of constructing periodic solutions, on the method of harmonic balance, on the method of B.V. Bulgakov, on the method of A.A. Andronov, and on the theory of point transformations of surfaces [5]. The last group of methods makes it possible not only to construct domains $S$ in the space $\{\alpha\}$, but also to analyze the parameters of the stable periodic solutions of equation (13), which describe the self-oscillations of the system (13). All these methods are widely employed in the practice of automatic control and are taught in various engineering programmes at institutions of higher education [5].
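For a linear closed system, the first-approximation methods reduce the analytic problem to a condition on the characteristic polynomial. A minimal sketch of the Hurwitz test in Python with exact rational arithmetic follows; the example plant ($\dot x_1 = x_2$, $\dot x_2 = u$ closed by $u = -\alpha_1 x_1 - \alpha_2 x_2$) and the integer grid of parameter values are hypothetical. Scanning the grid gives a sampled picture of the domain $S$ of stabilizing parameters, here the open quadrant $\alpha_1 > 0$, $\alpha_2 > 0$.

```python
from fractions import Fraction

def det(M):
    """Determinant of a square matrix by exact Gaussian elimination over the rationals."""
    M = [[Fraction(v) for v in row] for row in M]
    n, d = len(M), Fraction(1)
    for c in range(n):
        p = next((i for i in range(c, n) if M[i][c] != 0), None)
        if p is None:
            return Fraction(0)
        if p != c:
            M[c], M[p] = M[p], M[c]
            d = -d
        d *= M[c][c]
        for i in range(c + 1, n):
            f = M[i][c] / M[c][c]
            M[i] = [a - f * b for a, b in zip(M[i], M[c])]
    return d

def hurwitz_stable(coeffs):
    """Hurwitz test for a0*s**n + a1*s**(n-1) + ... + an with a0 > 0.

    All roots have negative real parts iff every leading principal minor of the
    Hurwitz matrix H[i][j] = a[2j - i + 1] (0-based; zero outside 0..n) is positive.
    """
    n = len(coeffs) - 1

    def coef(k):
        return coeffs[k] if 0 <= k <= n else 0

    H = [[coef(2 * j - i + 1) for j in range(n)] for i in range(n)]
    return all(det([row[:m] for row in H[:m]]) > 0 for m in range(1, n + 1))

def closed_loop_poly(alpha1, alpha2):
    # Hypothetical plant x1' = x2, x2' = u closed by u = -alpha1*x1 - alpha2*x2:
    # characteristic polynomial s**2 + alpha2*s + alpha1.
    return [1, alpha2, alpha1]

# Sampled picture of the domain S on a small integer grid.
S = [(a1, a2) for a1 in range(-2, 3) for a2 in range(-2, 3)
     if hurwitz_stable(closed_loop_poly(a1, a2))]
print(S)
```

Exact arithmetic matters on the boundary of $S$: for $s^3 + s^2 + s + 1$ (roots $-1$, $\pm i$) the second Hurwitz minor is exactly zero, so the test correctly reports the absence of asymptotic stability.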

If $S$ is non-empty, a control (12) with $\alpha \in S$ is called a feedback law or regulation law. Its realization, effected by a system of measuring instruments, amplifiers, converters and actuators, is known as a regulator.

Another problem of considerable practical importance, closely connected with the analytic problem, is the construction of the boundary of the domain of attraction [6], [7]. Consider the system (13) with $\alpha \in S$. The set of initial values $x(t_0)$, containing the point $x = 0$, for which the solutions of the closed system (13) tend to $x = 0$ is known as the domain of attraction of the trivial solution $x = 0$. The problem is to determine the boundary of the domain of attraction for a given closed system (13) and a given point $\alpha \in S$.

The scientific literature of the time does not contain effective methods of constructing the boundary of the domain of attraction, except in rare cases in which it is possible to construct unstable periodic solutions of the closed system. However, there are methods which allow one to construct the boundary of a set of values of $x$ that is entirely contained in the domain of attraction. In most cases these methods are based on estimating a domain in phase space in which the Lyapunov function satisfies the conditions $V(x) < c$, $\dot V(x) < 0$ for $x \neq 0$ [7].
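A one-dimensional illustration of this sublevel-set estimate (a hypothetical system, not taken from the references): for $\dot x = -x + x^3$ take $V(x) = x^2$. Then

$$ \dot V = 2x\dot x = -2x^2\,(1 - x^2) < 0 \qquad \text{for } 0 < |x| < 1, $$

so the set $\{V(x) < 1\} = \{|x| < 1\}$ lies in the domain of attraction of $x = 0$. Here the estimate happens to be exact, because $x = \pm 1$ are equilibria and every solution starting at $|x| > 1$ grows without bound; for most systems a sublevel set of $V$ is only an inner approximation of the domain of attraction.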

Any solution $x(t)$ of the closed system (13) represents a so-called transition process. In most cases of practical importance the mere solution of the stability problem is not enough. The development of a project involves supplementary conditions of considerable practical importance, which require the transition process to have certain additional features. The nature of these requirements and the list of these features are closely connected with the physical nature of the controlled object. In analytic problems it is often possible, by a suitable choice of the parameter $\alpha$, to secure the desired properties of the transition process, e.g. a prescribed regulation time. The problem of choosing the parameter $\alpha$ is known as the problem of quality of regulation [5], and methods for solving it rely on constructing estimates for the solutions $x(t)$: either by actual integration of equation (13) or by experimental evaluation of such solutions with the aid of an analogue or digital computer.
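The "actual integration" route can be sketched in a few lines. The Python example below is illustrative only: it assumes a hypothetical closed system ($\dot x_1 = x_2$, $\dot x_2 = u$, $u = -\alpha_1 x_1 - \alpha_2 x_2$ with $\alpha_1 = 1$), forward Euler integration, and an ad hoc notion of settling (the state norm stays below 5% of its initial value). It integrates the closed system for several values of the damping gain $\alpha_2$ and picks the one with the shortest settling time.

```python
import math

def settling_time(alpha1, alpha2, x0=(1.0, 0.0), r_frac=0.05, horizon=30.0, dt=1e-3):
    """Last instant at which ||x(t)|| exceeds r_frac * ||x0||, by forward-Euler integration.

    Hypothetical closed system (13): x1' = x2, x2' = u with u = -alpha1*x1 - alpha2*x2.
    Returns roughly `horizon` if the solution has not settled by then.
    """
    x1, x2 = x0
    r = r_frac * math.hypot(*x0)
    t, last_outside = 0.0, 0.0
    for _ in range(int(round(horizon / dt))):
        u = -alpha1 * x1 - alpha2 * x2
        x1, x2 = x1 + dt * x2, x2 + dt * u
        t += dt
        if math.hypot(x1, x2) > r:
            last_outside = t
    return last_outside

# Fix alpha1 = 1 and scan the damping gain alpha2 over an illustrative grid.
candidates = [0.5, 1.0, 1.5, 2.0, 3.0, 4.0]
times = {a2: settling_time(1.0, a2) for a2 in candidates}
best = min(times, key=times.get)
print(best, round(times[best], 2))
```

With these settings a moderately damped gain comes out best, while both the lightly damped law ($\alpha_2 = 0.5$) and the heavily overdamped one ($\alpha_2 = 4$) settle markedly more slowly. A finer grid or a proper optimizer could replace the scan; the point is only that the quality criterion is evaluated from computed solutions.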

Analytic problems for transition processes admit many other formulations, arising whenever the perturbing force $F(t)$ in (11) is a random function, as in servomechanisms [5], [8]. Other formulations are concerned with the possibility of random alterations of the matrices $A$ and $B$ or even of the function $\varphi$ [5], [8]. This gave rise to the development of methods for studying random processes, methods of adaptation, and learning machines [9]. Transition processes in systems with time delays and with distributed parameters (see [10], [11]) and with variable structure (see [12]) have also been studied.

The synthesis problem: given equation (11), a field of regulation $X_k$, $k \le n$, and a set $U$ of permissible controls, to find the whole set $\Sigma$ of feedback laws [13]. One of the most important variants of this problem is the problem of the structure of minimal fields. A field $X_k$, $k \le n$, is called a minimal field if it contains at least one feedback law and if the dimension $k$ of the field is minimal. The problem is to determine the structure of all minimal fields for a given equation (11) and a given set of permissible controls. The following example illustrates the nature of the problem:

|

|

where the coefficients are given numbers. The permissible controls are the piecewise-continuous functions $u(t)$ that take their values from the domain

|

This problem has two minimal fields, each consisting of a single measured coordinate; the dimension of each field is one and cannot be reduced [13].

So far (1977) only one method is known for the synthesis of feedback laws; it is based on Lyapunov functions [13]. A relevant theorem is that of Barbashin–Krasovskii [6], [10], [15]: for the undisturbed motion $x = 0$ of the closed system

$$ \dot x = g(x), \qquad g(0) = 0, \tag{14} $$

to be asymptotically stable, it is sufficient that there exist a positive-definite function $V(x)$ whose total derivative along the solutions of (14), $W(x) = \dot V(x)$, is negative semi-definite, and such that no complete trajectory of the system (14) other than $x = 0$ lies on the manifold $W(x) = 0$. The problem of the existence and structure of minimal fields is of major practical importance, since these fields determine what the chief designer can require regarding the minimum weight, complexity and cost of the control system and its maximum reliability. The problem is also of scientific and practical interest in connection with infinite-dimensional systems as encountered in technology, biology, medicine, economics and sociology.
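A standard illustration of the Barbashin–Krasovskii theorem (a hypothetical damped oscillator, written as $\dot x = g(x)$): take $g(x) = (x_2,\; -x_1 - x_2)$ and $V = x_1^2 + x_2^2$. Then

$$ W(x) = \dot V = 2x_1 x_2 + 2x_2(-x_1 - x_2) = -2x_2^2 \le 0 . $$

$W$ is only semi-definite, so Lyapunov's basic theorem on asymptotic stability does not apply directly. On the manifold $W = 0$, i.e. $x_2 \equiv 0$, the equations give $\dot x_2 = -x_1 \equiv 0$, so the only complete trajectory lying on this manifold is $x = 0$, and the theorem yields asymptotic stability.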

In designing control systems it is, unfortunately, impracticable to restrict the work to solving problems of synthesis of feedback laws. In most cases the requirements of the chief designer are aimed at securing certain important specific properties of the transition process in the closed system. The importance of such requirements is demonstrated by the control of a nuclear reactor: if the transition process takes more than 5 seconds, or if the maximum value of some of its coordinates exceeds a certain value, a catastrophic accident may follow. This gives rise to new problems of synthesis of regulation laws, based on the set $\Sigma$. The formulation of one such problem is given below. Consider two spheres $\|x\| = R$ and $\|x\| = r$, $0 < r < R$, where $R$ and $r$ are given numbers. Now consider the set $\Sigma$ of all feedback laws. The closure by means of any of them yields the equation

$$ \dot x = A x + B u(X_k) + \varphi\big(x, u(X_k), t\big), \qquad u \in \Sigma. \tag{15} $$

Consider the entire set of solutions $x(t)$ of equation (15) which start on the sphere $\|x\| = R$ at $t = t_0$, and call them $R$-solutions. Since the system is asymptotically stable, for any $x(t_0) = x_0$ on the sphere there exists a moment of time $t_0 + \tau(x_0)$ after which the condition

$$ \|x(t)\| < r $$

is valid for all $t \ge t_0 + \tau(x_0)$.

Let

$$ \tau^* = \sup_{\|x_0\| = R} \tau(x_0). $$

It is clear from the definition of $\tau(x_0)$ that $\tau^*$ exists. The quantity $\tau^*$ is called the regulation time (the damping time of the transition process) in the closed system (15): every $R$-solution starts on the $R$-sphere at $t = t_0$ and remains inside the $r$-sphere for $t \ge t_0 + \tau^*$. Clearly, the regulation time is a functional of the feedback law, $\tau^* = \tau^*(u)$. Let $\tau_0$ be a given number. There arises the problem of synthesis of fast-acting regulators: given the set $\Sigma$ of feedback laws, one has to isolate its subset $\Sigma_\tau$ on which the regulation time in the closed system satisfies the condition

$$ \tau^*(u) \le \tau_0 . $$

In a similar manner one can formulate problems of synthesis of sets $\Sigma_1, \dots, \Sigma_m$ of feedback laws which satisfy the other $m$ requirements of the chief designer.
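The definition of the regulation time suggests a brute-force numerical check. In the sketch below, $\tau(x_0)$ is the time after which the solution from $x_0$ stays inside the sphere of radius $r$, and the regulation time is approximated by the largest $\tau(x_0)$ over starting points sampled on the sphere of radius $R$. The plant ($n = 2$, $\dot x_1 = x_2$, $\dot x_2 = u$), the three linear feedback laws, and the values $R = 1$, $r = 0.1$, $\tau_0 = 5$ are all hypothetical. Because only finitely many starting points are sampled, the result is a lower estimate of the supremum, not the supremum itself.

```python
import math

def tau(x0, gains, r, horizon=30.0, dt=5e-3):
    """Time after which the solution from x0 stays inside ||x|| < r (forward Euler).

    Hypothetical closed system: x1' = x2, x2' = u with u = -a1*x1 - a2*x2.
    Returns about `horizon` if the solution is still outside at the end of the run.
    """
    a1, a2 = gains
    x1, x2 = x0
    t, last_outside = 0.0, 0.0
    for _ in range(int(round(horizon / dt))):
        u = -a1 * x1 - a2 * x2
        x1, x2 = x1 + dt * x2, x2 + dt * u
        t += dt
        if math.hypot(x1, x2) >= r:
            last_outside = t
    return last_outside

def regulation_time(gains, R=1.0, r=0.1, samples=36):
    """Lower estimate of the sup of tau(x0) over the sphere ||x0|| = R, by sampling."""
    starts = [(R * math.cos(2 * math.pi * j / samples), R * math.sin(2 * math.pi * j / samples))
              for j in range(samples)]
    return max(tau(x0, gains, r) for x0 in starts)

candidate_laws = [(1.0, 0.3), (1.0, 1.5), (4.0, 3.0)]  # hypothetical feedback laws
tau0 = 5.0  # required regulation time
sigma_tau = [g for g in candidate_laws if regulation_time(g) <= tau0]
print(sigma_tau)
```

With these numbers the lightly damped law $(1, 0.3)$ is rejected and the other two laws are kept. For a rigorous guarantee one would replace sampling by an analytic bound, e.g. one obtained from a Lyapunov function.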

The principal synthesis problem, that of satisfying all the requirements of the chief designer, is solvable if the sets $\Sigma_\tau, \Sigma_1, \dots, \Sigma_m$ have a non-empty intersection [13].

The synthesis problem has been solved in greatest detail for the case in which the field  has maximal dimension,

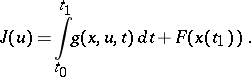

has maximal dimension,  , while the cost index of the system is characterized by the functional

, while the cost index of the system is characterized by the functional

| (16) |

where  is a positive-definite function of

is a positive-definite function of  . The problem is then known as the problem of analytic construction of optimum regulators [14] and is in fact thus formulated. The data include equation (11), a class of permissible controls

. The problem is then known as the problem of analytic construction of optimum regulators [14] and is in fact thus formulated. The data include equation (11), a class of permissible controls  defined over the field

defined over the field  of maximal dimension, and the functional (16). One is required to find a control

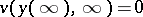

of maximal dimension, and the functional (16). One is required to find a control  for which the functional (16) assumes its minimum value. This problem is solved by the following theorem: If equation (11) is such that it is possible to find an upper semi-continuous positive-definite function

for which the functional (16) assumes its minimum value. This problem is solved by the following theorem: If equation (11) is such that it is possible to find an upper semi-continuous positive-definite function  and a function

and a function  such that the equality

such that the equality

| (17) |

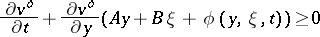

is true, and the inequality

|

is also true for all permissible  , then the function

, then the function  is a solution to the problem. Morever, the equality

is a solution to the problem. Morever, the equality

|

is true. The function  is known as the Lyapunov optimum function [15]. It is a solution of the partial differential equation (17), of Hamilton–Jacobi type, satisfying the condition

is known as the Lyapunov optimum function [15]. It is a solution of the partial differential equation (17), of Hamilton–Jacobi type, satisfying the condition  . Methods for the effective solution of such a problem have been developed for the case in which the functions

. Methods for the effective solution of such a problem have been developed for the case in which the functions  and

and  can be expanded in a convergent power series in

can be expanded in a convergent power series in  with coefficients which are bounded continuous functions of

with coefficients which are bounded continuous functions of  . Of fundamental importance is the solvability of the problem of linear approximation to equation (11) and the optimization with respect to the integral of only second-order terms contained in the development of

. Of fundamental importance is the solvability of the problem of linear approximation to equation (11) and the optimization with respect to the integral of only second-order terms contained in the development of  . This problem is solvable if the condition of complete controllability is satisfied [15].

. This problem is solvable if the condition of complete controllability is satisfied [15].
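As a minimal illustration of the theorem, consider a hypothetical scalar case (our assumption, not taken from the article): (11) is $\dot x = ax + bu$ and the integrand of (16) is $\omega = qx^2 + ru^2$ with $q, r > 0$, $b \neq 0$. Trying $V^0 = px^2$, minimizing $B[V^0,u]$ over $u$ gives $u^0 = -(bp/r)x$, and (17) reduces to $2ap - b^2p^2/r + q = 0$. The sketch below takes the positive root, checks that $B[V^0,u]\ge 0$ for other controls, and compares the numerically integrated cost of $u^0$ with $V^0(x_0)$. All function names are ours.

```python
import math


def optimum_regulator(a, b, q, r):
    """Return (p, k) with V0(x) = p*x**2 and u0(x) = -k*x.

    Hypothetical scalar data: dx/dt = a*x + b*u, omega = q*x**2 + r*u**2,
    with q > 0, r > 0 and b != 0.
    """
    # Substituting V0 = p*x**2 and the minimizer u0 = -(b*p/r)*x into (17)
    # leaves (b**2/r)*p**2 - 2*a*p - q = 0; take the positive root.
    p = r * (a + math.sqrt(a * a + b * b * q / r)) / (b * b)
    return p, b * p / r


def lyapunov_expression(a, b, q, r, p, x, u):
    """B[V0, u] = dV0/dx * (a*x + b*u) + omega(x, u) for V0 = p*x**2."""
    return 2 * p * x * (a * x + b * u) + q * x * x + r * u * u


def cost_along_closed_loop(a, b, q, r, k, x0, t_end=20.0, dt=1e-4):
    """Approximate the integral (16) under u0 = -k*x with forward Euler."""
    x, cost = x0, 0.0
    for _ in range(round(t_end / dt)):
        u = -k * x
        cost += (q * x * x + r * u * u) * dt
        x += (a * x + b * u) * dt
    return cost


p, k = optimum_regulator(a=1.0, b=1.0, q=1.0, r=1.0)
print(p, cost_along_closed_loop(1.0, 1.0, 1.0, 1.0, k, x0=1.0))
```

With $a=b=q=r=1$ the two printed values agree to about three decimal places (both are close to $1+\sqrt2 = V^0(1)$), and $B[V^0,u] = r(u-u^0)^2$, which is why the inequality of the theorem holds here.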

References

| [1] | D.K. Maksvell, I.A. Vishnegradskii, A. Stodola, "The theory of automatic regulation" , Moscow (1942) (In Russian) |

| [2] | N.G. Chetaev, "Stability of motion" , Moscow (1965) (In Russian) |

| [3] | N.N. Krasovskii, "Theory of control of motion" , Moscow (1968) (In Russian) |

| [4] | L.S. Pontryagin, V.G. Boltyanskii, R.V. Gamkrelidze, E.F. Mishchenko, "The mathematical theory of optimal processes" , Wiley (1962) (Translated from Russian) |

| [5] | , Technical cybernetics , Moscow (1967) |

| [6] | E.A. Barbashin, "Introduction to the theory of stability" , Wolters-Noordhoff (1970) (Translated from Russian) |

| [7] | V.I. Zubov, "Mathematical methods of studying systems of automatic control" , Leningrad (1959) (In Russian) |

| [8] | V.S. Pugachev, "Theory of random functions and its application to control problems" , Pergamon (1965) (Translated from Russian) |

| [9] | Ya.Z. Tsypkin, "Foundations of the theory of learning systems" , Acad. Press (1973) (Translated from Russian) |

| [10] | N.N. Krasovskii, "Stability of motion. Applications of Lyapunov's second method to differential systems and equations with delay" , Stanford Univ. Press (1963) (Translated from Russian) |

| [11] | V.A. Besekerskii, E.P. Popov, "The theory of automatic control systems" , Moscow (1966) (In Russian) |

| [12] | S.V. Emelyanov (ed.) , Theory of systems with a variable structure , Moscow (1970) (In Russian) |

| [13] | A.M. Letov, "Some unsolved problems in control theory" , Differential Equations N.Y. , 6 : 4 (1970) pp. 455–472; Differentsial'nye Uravneniya , 6 : 4 (1970) pp. 592–615 |

| [14] | A.M. Letov, "Dynamics of flight and control" , Moscow (1969) (In Russian) |

| [15] | I.G. Malkin, "Theorie der Stabilität einer Bewegung" , R. Oldenbourg , München (1959) (Translated from Russian) |

Comments

The article above reflects a tradition and terminology different from those customary in the non-Russian literature. It also almost totally ignores the vast body of important results on automatic (optimal) control theory that have appeared in the non-Russian literature.

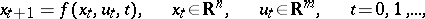

Roughly speaking a control system is a device whose future development (either in a deterministic or stochastic sense) is determined entirely by its present state and the present and future values of the control parameters. The "recipe" which determines future behaviour can be almost anything, e.g. a differential equation

$$\dot x = f(x,u,t),\qquad x\in\mathbf R^n,\ u\in\mathbf R^m, \tag{a1}$$

as in the main article above, or a difference equation

$$x_{t+1} = f(x_t,u_t,t),\qquad t = 0,1,2,\dots, \tag{a2}$$

or more generally, a differential equation with delays, an evolution equation in some function space, a more general partial differential equation, or any combination of these. Frequently there are constraints on the values the control $u$ may take, e.g., $\|u\|\le c$, where $c$ is a constant. However, there certainly are (engineering) situations in which the controls $u$ that can be used at time $t$ depend both explicitly on time and on the current state $x$ of the system (which of course in turn depends on the past controls used). This may make it complicated to describe the space of admissible or permissible controls in simple terms.

It may not even be possible to describe the control structure conveniently in such terms as equations (a1) together with constraints $u\in\Omega$ on a fixed set $\Omega\subset\mathbf R^m$. This happens, e.g., in the case of the control of an artificial satellite, where the space $\Omega_x$ in which the controls take their values may depend on the state $x$, being, e.g., the tangent space to a sphere at the point $x$. Then controls become sections in a vector bundle, usually subject to further size constraints.

At this point some of the natural and traditional problems concern reachability, controllability and state space feedback. Given an initial state $x_0$, the control system is said to be completely reachable (from $x_0$) if for all states $x$ there is an admissible control steering $x_0$ to $x$. This is termed complete controllability in the article above. In the Western literature, controllability is usually reserved for the opposite notion: given a (desired) final state $x_1$, the system is said to be completely controllable (to $x_1$) if for every possible initial state $x$ there is a control which steers $x$ to $x_1$. In the case of continuous-time, time-invariant, finite-dimensional linear systems the two notions coincide, but this is not always the case.
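For a time-invariant linear system $\dot x = Ax + Bu$, complete reachability in the above sense is equivalent to the classical Kalman rank condition $\operatorname{rank}(B, AB, \dots, A^{n-1}B) = n$. The sketch below checks this condition exactly over the rationals; matrices are plain lists of rows, and the helper names and example systems are ours.

```python
from fractions import Fraction


def mat_mul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][l] * Y[l][j] for l in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def rank(M):
    """Exact rank via Gaussian elimination over the rationals."""
    R = [[Fraction(v) for v in row] for row in M]
    r = 0
    for c in range(len(R[0])):
        pivot = next((i for i in range(r, len(R)) if R[i][c] != 0), None)
        if pivot is None:
            continue
        R[r], R[pivot] = R[pivot], R[r]
        for i in range(len(R)):
            if i != r and R[i][c] != 0:
                factor = R[i][c] / R[r][c]
                R[i] = [x - factor * y for x, y in zip(R[i], R[r])]
        r += 1
    return r


def reachability_matrix(A, B):
    """The n x (n*m) matrix [B, AB, ..., A^(n-1) B]."""
    n = len(A)
    blocks, block = [], B
    for _ in range(n):
        blocks.append(block)
        block = mat_mul(A, block)
    return [[v for blk in blocks for v in blk[i]] for i in range(n)]


def completely_reachable(A, B):
    return rank(reachability_matrix(A, B)) == len(A)


# Double integrator x1' = x2, x2' = u.
print(completely_reachable([[0, 1], [0, 0]], [[0], [1]]))  # True
# Two identical decoupled modes driven by the same input.
print(completely_reachable([[1, 0], [0, 1]], [[1], [1]]))  # False
```

The discrete-time case (a2) shows why the two notions can differ: for $x_{t+1} = 0\cdot x_t + 0\cdot u_t$ every state is steered to $0$ in one step, so the system is completely controllable to $0$, yet from $x_0$ no state other than $x_0$ and $0$ can be reached.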

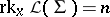

There are also many result on reachability and controllability for non-linear systems, especially for local controllability and reachability. These results often are stated in terms of Lie algebras associated to the control system. E.g., for a control system of the form

| (a3) |

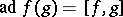

one considers the Lie algebra  spanned by the vector fields

spanned by the vector fields  ,

,  ;

;  where

where  ,

,  . Given a Lie algebra of vector fields

. Given a Lie algebra of vector fields  on a manifold

on a manifold  the rank of

the rank of  at a point

at a point  ,

,  , is the dimension of the space of tangent vectors

, is the dimension of the space of tangent vectors  . A local reachability result now says that

. A local reachability result now says that  implies local reachability at

implies local reachability at  . There are also various global and necessary-condition-type results especially in the case of analytic systems. Cf. [a2], [a10], [a14] for a first impression of the available results.

. There are also various global and necessary-condition-type results especially in the case of analytic systems. Cf. [a2], [a10], [a14] for a first impression of the available results.
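To make the rank condition concrete, the sketch below takes the driftless case $f\equiv 0$ of (a3), where only the brackets of the $g_i$ matter. For the unicycle (a standard example, not from the article) $\dot x_1 = u_1\cos x_3$, $\dot x_2 = u_1\sin x_3$, $\dot x_3 = u_2$, one has $g_1 = (\cos x_3, \sin x_3, 0)$ and $g_2 = (0,0,1)$; the bracket $[g_1,g_2] = (\sin x_3, -\cos x_3, 0)$ supplies the missing direction, so the rank is $3 = n$ at every point. Jacobians are approximated by central differences, and the convention $[X,Y] = DY\,X - DX\,Y$ is assumed (sign conventions differ; the rank does not depend on the sign).

```python
import math


def jacobian(field, x, h=1e-6):
    """Central-difference approximation of the Jacobian D field(x)."""
    n = len(x)
    J = [[0.0] * n for _ in range(n)]
    for j in range(n):
        xp, xm = list(x), list(x)
        xp[j] += h
        xm[j] -= h
        fp, fm = field(xp), field(xm)
        for i in range(n):
            J[i][j] = (fp[i] - fm[i]) / (2 * h)
    return J


def lie_bracket(X, Y):
    """The vector field [X, Y](x) = DY(x) X(x) - DX(x) Y(x)."""
    def bracket(x):
        JX, JY = jacobian(X, x), jacobian(Y, x)
        Xx, Yx = X(x), Y(x)
        return [sum(JY[i][l] * Xx[l] - JX[i][l] * Yx[l] for l in range(len(x)))
                for i in range(len(x))]
    return bracket


def numerical_rank(vectors, tol=1e-8):
    """Rank of a list of vectors by Gaussian elimination with partial pivoting."""
    M = [list(v) for v in vectors]
    r = 0
    for c in range(len(M[0])):
        if r == len(M):
            break
        pivot = max(range(r, len(M)), key=lambda i: abs(M[i][c]))
        if abs(M[pivot][c]) < tol:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, len(M)):
            factor = M[i][c] / M[r][c]
            M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
    return r


def g1(x):
    """Drive forward along the current heading x[2]."""
    return [math.cos(x[2]), math.sin(x[2]), 0.0]


def g2(x):
    """Turn in place."""
    return [0.0, 0.0, 1.0]


g12 = lie_bracket(g1, g2)
x0 = [0.0, 0.0, 0.3]
print(numerical_rank([g1(x0), g2(x0)]))           # 2: the inputs alone span a plane
print(numerical_rank([g1(x0), g2(x0), g12(x0)]))  # 3 = n: the rank condition holds
```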

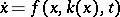

Given a control system (a1), an important general question is to what extent it can be changed by feedback, in this case state space feedback. This means the following. A state space feedback law is a suitable mapping $k\colon\mathbf R^n\times\mathbf R\to\mathbf R^m$, $u = k(x,t)$. Inserting this in (a1) results in the closed loop system $\dot x = f\bigl(x,k(x,t),t\bigr)$. Cf. Fig. a.

Figure: a014090a

Often "new" controls $v$ are also introduced and one considers, e.g., the new control system $\dot x = f\bigl(x,k(x,t)+v,t\bigr)$ or, more generally, $\dot x = f\bigl(x,k(x,v,t),t\bigr)$. Dynamic feedback laws, in which the function $k$ is replaced by a complete input-output system (cf. below)

$$\dot z = \varphi(z,x,v,t),\qquad u = \psi(z,x,v,t), \tag{a4}$$

are also frequently considered. Important questions now are, e.g., whether for a given system (a1) a $k(x,t)$ or $k(x,v,t)$ or a system (a4) of various kinds can be found such that the resulting closed loop system is stable, or whether by means of feedback a system can be linearized or embedded in a linear system (4). Cf. [a6] for a selection of results.
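A minimal sketch of stabilization by state space feedback, assuming the double integrator $\dot x_1 = x_2$, $\dot x_2 = u$ as an instance of (a1) (our example). The linear law $u = k(x) = -k_1x_1 - k_2x_2$ gives a closed loop system with characteristic polynomial $\lambda^2 + k_2\lambda + k_1$, which is asymptotically stable for $k_1, k_2 > 0$. The code integrates the open- and closed-loop systems with a classical Runge–Kutta step.

```python
def double_integrator(k1, k2):
    """Closed loop x1' = x2, x2' = u with the feedback u = -k1*x1 - k2*x2."""
    def rhs(x):
        u = -k1 * x[0] - k2 * x[1]
        return [x[1], u]
    return rhs


def rk4(rhs, x0, t_end, dt=1e-3):
    """Classical fourth-order Runge-Kutta integration of x' = rhs(x)."""
    x = list(x0)
    for _ in range(round(t_end / dt)):
        s1 = rhs(x)
        s2 = rhs([xi + 0.5 * dt * si for xi, si in zip(x, s1)])
        s3 = rhs([xi + 0.5 * dt * si for xi, si in zip(x, s2)])
        s4 = rhs([xi + dt * si for xi, si in zip(x, s3)])
        x = [xi + dt * (a + 2 * b + 2 * c + d) / 6
             for xi, a, b, c, d in zip(x, s1, s2, s3, s4)]
    return x


open_loop = rk4(double_integrator(0.0, 0.0), [0.0, 1.0], t_end=10.0)
closed_loop = rk4(double_integrator(2.0, 3.0), [1.0, 0.0], t_end=10.0)
print(open_loop)    # no feedback: x1 grows linearly in t
print(closed_loop)  # poles -1 and -2: the state decays to nearly zero
```

The gains $k_1 = 2$, $k_2 = 3$ place the closed-loop poles at $-1$ and $-2$; any other positive pair would also stabilize, with different transient behaviour.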

Using (large) controls in engineering situations can be expensive. This leads to the idea of a control system with cost functional  which is often given in terms such as

which is often given in terms such as

|

There result optimal control questions such as finding that admissible function  which minimizes

which minimizes  (and steers

(and steers  to the target set) (optimal open loop control) and finding a minimizing feedback control law

to the target set) (optimal open loop control) and finding a minimizing feedback control law  (optimal closed loop control). In practice the case of a linear system (4) with a quadratic criterion is very important, the so called

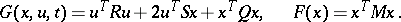

(optimal closed loop control). In practice the case of a linear system (4) with a quadratic criterion is very important, the so called  problem. Then

problem. Then

| (a5) |

Here the upper  denotes transpose and

denotes transpose and  are suitable matrices (which may depend on time as may

are suitable matrices (which may depend on time as may  and

and  ). In this case under suitable positive-definiteness assumptions on

). In this case under suitable positive-definiteness assumptions on  and the block matrix

and the block matrix

|

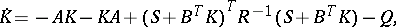

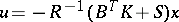

the optimal solution exists. It is of feedback type and is obtained as follows. Consider the matrix Riccati equation

$$\dot P = -PA - A^TP - Q + (PB + S)R^{-1}(B^TP + S^T), \tag{a6}$$

$$P(t_1) = 0.$$

Solve it backwards up to time $t_0$. Then the optimal control is given by

$$u(t) = -R^{-1}\bigl(B^TP(t) + S^T\bigr)x(t). \tag{a7}$$
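In the scalar case $n = m = 1$ (our simplification, with $A = a$, $B = b$ and $Q, R, S = q, r, s$), the Riccati equation with $P(t_1)=0$ reads $\dot p = -2ap - q + (pb+s)^2/r$, and the optimal law is $u = -(bp(t)+s)x/r$. The sketch integrates $p$ backwards with a Runge–Kutta step and then checks two expected facts: the cost of this feedback equals $p(t_0)x_0^2$, and on a long horizon $p(t_0)$ approaches the positive root of the algebraic equation $2ap + q - (pb+s)^2/r = 0$ (for $a=b=q=r=1$, $s=0$ this root is $1+\sqrt2$). Function names are ours.

```python
import math


def riccati_backward(a, b, q, r, s, t0, t1, steps):
    """Scalar Riccati equation integrated backwards from p(t1) = 0.

    Returns [p(t0), p(t0 + h), ..., p(t1)] with h = (t1 - t0) / steps.
    """
    h = (t1 - t0) / steps

    def dp_dtau(p):
        # tau = t1 - t runs backwards in time, so dp/dtau = -dp/dt.
        return 2 * a * p + q - (p * b + s) ** 2 / r

    p, values = 0.0, [0.0]
    for _ in range(steps):
        k1 = dp_dtau(p)
        k2 = dp_dtau(p + 0.5 * h * k1)
        k3 = dp_dtau(p + 0.5 * h * k2)
        k4 = dp_dtau(p + h * k3)
        p += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        values.append(p)
    return values[::-1]  # index i now corresponds to t0 + i*h


def feedback_cost(a, b, q, r, s, p_grid, x0, t0, t1):
    """Quadratic cost of the law u = -(b*p(t) + s)*x/r, by forward Euler."""
    steps = len(p_grid) - 1
    h = (t1 - t0) / steps
    x, cost = x0, 0.0
    for i in range(steps):
        u = -(b * p_grid[i] + s) * x / r
        cost += (q * x * x + 2 * s * x * u + r * u * u) * h
        x += (a * x + b * u) * h
    return cost


short = riccati_backward(1.0, 1.0, 1.0, 1.0, 0.0, t0=0.0, t1=1.0, steps=10_000)
print(short[0], feedback_cost(1.0, 1.0, 1.0, 1.0, 0.0, short, 1.0, 0.0, 1.0))
long_horizon = riccati_backward(1.0, 1.0, 1.0, 1.0, 0.0, t0=0.0, t1=10.0, steps=10_000)
print(long_horizon[0], 1 + math.sqrt(2))
```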

The solution (method) extends to the case where the linear system is in addition subject to Gaussian stochastic disturbances, cf. [a9], [a17].

Also for non-linear systems there are synthesis results for optimal (feedback) control. One can, for instance, use the Pontryagin maximum principle to determine the optimal (open loop) control $u^*_{x_0}$ for each initial state $x_0$ (if it exists and is unique). This yields a mapping $x_0\mapsto u^*_{x_0}(t_0)$, which is a candidate for an optimal feedback control law, and under suitable regularity assumptions this is indeed the case, cf. [a3], [a15]. Some standard treatises on optimal control in various settings are [a5], [a12], [a13].

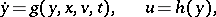

In many situations it cannot be assumed that the state $x$ of a control system (a1) is directly accessible for control purposes, e.g. for the implementation of a feedback law. More generally, only certain derived quantities are immediately observable. (Think, for example, of the various measuring devices in an aircraft as compared to the complete state description of the aircraft.) This leads to the idea of an input-output (dynamical) system, or briefly (dynamical) system, also called plant (cf. Fig. b),

$$\dot x = f(x,u,t),\qquad y = h(x,t). \tag{a8}$$

Figure: a014090b

Here the  are viewed as controls or inputs and the

are viewed as controls or inputs and the  as observations or outputs. Let

as observations or outputs. Let  denote the solution of the first equation of (a8) for the initial condition

denote the solution of the first equation of (a8) for the initial condition  and a given

and a given  . The system (a8) is called completely observable if for any two initial states

. The system (a8) is called completely observable if for any two initial states  and (known) control

and (known) control  there holds that

there holds that  for all

for all  implies

implies  . This is more or less what is meant by the phrase "observability along coordinates93B07observable along the coordinates y1…yn" in the main article above. In the case of a time-invariant linear system

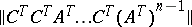

. This is more or less what is meant by the phrase "observability along coordinates93B07observable along the coordinates y1…yn" in the main article above. In the case of a time-invariant linear system

$$\dot x = Ax + Bu,\qquad y = Cx, \tag{a9}$$

complete observability holds if and only if the (block) observability matrix

$$\begin{pmatrix} C\\ CA\\ \vdots\\ CA^{n-1}\end{pmatrix}$$

is of full rank $n$. This is completely dual to the reachability (controllability) result for linear systems in the article above.
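A sketch of the observability test for (a9) in plain Python with exact rational arithmetic (helper names and examples are ours). It also makes the duality concrete: the transpose of the observability matrix of $(A, C)$ is the reachability matrix $(C^T, A^TC^T, \dots, (A^T)^{n-1}C^T)$ of the dual pair $(A^T, C^T)$.

```python
from fractions import Fraction


def mat_mul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][l] * Y[l][j] for l in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def transpose(M):
    return [list(col) for col in zip(*M)]


def rank(M):
    """Exact rank via Gaussian elimination over the rationals."""
    R = [[Fraction(v) for v in row] for row in M]
    r = 0
    for c in range(len(R[0])):
        pivot = next((i for i in range(r, len(R)) if R[i][c] != 0), None)
        if pivot is None:
            continue
        R[r], R[pivot] = R[pivot], R[r]
        for i in range(len(R)):
            if i != r and R[i][c] != 0:
                factor = R[i][c] / R[r][c]
                R[i] = [x - factor * y for x, y in zip(R[i], R[r])]
        r += 1
    return r


def observability_matrix(A, C):
    """Stack the row blocks C, CA, ..., CA^(n-1)."""
    rows, block = [], C
    for _ in range(len(A)):
        rows.extend(block)
        block = mat_mul(block, A)
    return rows


def dual_reachability_matrix(A, C):
    """[C^T, A^T C^T, ..., (A^T)^(n-1) C^T] for the dual pair (A^T, C^T)."""
    At, block, blocks = transpose(A), transpose(C), []
    for _ in range(len(A)):
        blocks.append(block)
        block = mat_mul(At, block)
    return [[v for blk in blocks for v in blk[i]] for i in range(len(A))]


def completely_observable(A, C):
    return rank(observability_matrix(A, C)) == len(A)


A = [[0, 1], [0, 0]]                       # double integrator
print(completely_observable(A, [[1, 0]]))  # True: position is measured
print(completely_observable(A, [[0, 1]]))  # False: only velocity is measured
```

Measuring only the velocity of the double integrator leaves the position undetermined up to a constant, which is exactly the rank deficiency detected above.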

In this setting of input-output systems many of the problems indicated above acquire an output analogue, e.g. output feedback stabilization, where (in the simplest case) a function $u = k(y,t)$ is sought such that $\dot x = f\bigl(x,k(h(x,t),t),t\bigr)$ is (asymptotically) stable, the dynamic output feedback problem and the optimal output feedback control problem. In addition, new natural questions arise, such as whether it is possible by means of some kind of feedback to make certain outputs independent of certain inputs (decoupling problems). In case there are additional stochastic disturbances, possibly both in the evolution of $x$ and in the measurements $y$, problems of filtering are added to all this, e.g. the problem of finding the best estimate $\hat x(t)$ of the state of the system given the observations $y(s)$, $t_0\le s\le t$, [a9].
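For linear systems with Gaussian disturbances the best (minimum mean-square) estimate is produced recursively by the Kalman filter. The sketch below swaps the continuous-time setting for a scalar discrete-time model $x_{t+1} = ax_t + w_t$, $y_t = x_t + v_t$ with independent $w_t\sim N(0,q)$, $v_t\sim N(0,r)$ and $x_0\sim N(0,1)$; the model, parameters and function names are assumptions for illustration only.

```python
import math
import random


def simulate(n, a, q, r, seed=0):
    """Sample x[t+1] = a*x[t] + w[t], y[t] = x[t] + v[t] with x[0] ~ N(0, 1)."""
    rng = random.Random(seed)
    x = rng.gauss(0.0, 1.0)
    xs, ys = [], []
    for _ in range(n):
        xs.append(x)
        ys.append(x + rng.gauss(0.0, math.sqrt(r)))
        x = a * x + rng.gauss(0.0, math.sqrt(q))
    return xs, ys


def kalman_filter(ys, a, q, r, x_hat=0.0, p=1.0):
    """Scalar Kalman filter; (x_hat, p) is the prior mean and variance of x[0]."""
    estimates, variances = [], []
    for y in ys:
        # Measurement update: blend the prediction with the new observation.
        gain = p / (p + r)
        x_hat += gain * (y - x_hat)
        p *= 1.0 - gain
        estimates.append(x_hat)
        variances.append(p)
        # Time update: propagate estimate and variance through the dynamics.
        x_hat = a * x_hat
        p = a * a * p + q
    return estimates, variances


a, q, r = 0.95, 0.01, 1.0
xs, ys = simulate(2000, a, q, r)
estimates, variances = kalman_filter(ys, a, q, r)
mse_filter = sum((e - x) ** 2 for e, x in zip(estimates, xs)) / len(xs)
mse_raw = sum((y - x) ** 2 for y, x in zip(ys, xs)) / len(xs)
print(mse_filter, mse_raw, variances[-1])
```

On such simulated data the mean-square error of $\hat x$ lies far below the measurement noise variance $r$, and the error variance settles at the fixed point of its (discrete-time) Riccati recursion; the continuous-time counterpart is the Kalman–Bucy filter.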

There is also the so-called realization problem. Given an initial state $x_0$, a system such as (a8) defines a mapping from a space of input functions $u(\cdot)$ to a space of output functions $y(\cdot)$, and the question arises which mappings can be realized by means of systems such as (a8). Cf. [a6] for a survey of results in this direction in the non-linear deterministic case and [a9] for results in the linear stochastic case.
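In the linear time-invariant single-input single-output case with $x_0 = 0$, the input-output map of $\dot x = Ax + bu$, $y = cx$ is determined by the Markov parameters $h_k = cA^kb$. A classical result (Kronecker; Ho–Kalman) states that a sequence $(h_k)$ has a finite-dimensional realization if and only if its Hankel matrix $(h_{i+j})$ has finite rank, this rank being the dimension of a minimal realization; for parameters coming from an $n$-dimensional realization, the $n\times n$ section already attains that rank (by the Cayley–Hamilton theorem). The sketch only illustrates this on examples of our choosing; it is not a realization algorithm.

```python
from fractions import Fraction


def mat_mul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][l] * Y[l][j] for l in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def rank(M):
    """Exact rank via Gaussian elimination over the rationals."""
    R = [[Fraction(v) for v in row] for row in M]
    r = 0
    for c in range(len(R[0])):
        pivot = next((i for i in range(r, len(R)) if R[i][c] != 0), None)
        if pivot is None:
            continue
        R[r], R[pivot] = R[pivot], R[r]
        for i in range(len(R)):
            if i != r and R[i][c] != 0:
                factor = R[i][c] / R[r][c]
                R[i] = [x - factor * y for x, y in zip(R[i], R[r])]
        r += 1
    return r


def markov_parameters(A, b, c, count):
    """h_k = c A^k b for k = 0, ..., count - 1 (b is n x 1, c is 1 x n)."""
    params, v = [], b
    for _ in range(count):
        params.append(mat_mul(c, v)[0][0])
        v = mat_mul(A, v)
    return params


def hankel_rank(A, b, c):
    """Rank of the n x n Hankel section (h_{i+j}): the minimal realization dimension."""
    n = len(A)
    h = markov_parameters(A, b, c, 2 * n - 1)
    return rank([[h[i + j] for j in range(n)] for i in range(n)])


# Double integrator observed through its position: already minimal.
print(hankel_rank([[0, 1], [0, 0]], [[0], [1]], [[1, 0]]))  # 2
# The mode with eigenvalue 2 is never excited: a 1-dimensional realization suffices.
print(hankel_rank([[1, 0], [0, 2]], [[1], [0]], [[1, 1]]))  # 1
```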

With the notable exception of the output feedback problem, one can say at the present time that the theory of linear time-invariant finite-dimensional systems, possibly with Gaussian noise and quadratic cost criteria, is in a highly satisfactory state. Cf., e.g., the standard treatise [a11]. Generalization of this substantial body of results to a more general setting seems to require sophisticated mathematics, e.g. algebraic geometry and algebraic $K$-theory in the case of families of linear systems [a8], [a16], functional analysis and contraction semi-groups for infinite-dimensional linear systems [a4], functional analysis, interpolation theory and Fourier analysis for filtering and prediction [a9], and foliations, vector bundles, Lie algebras of vector fields and other notions from differential topology and geometry for non-linear systems theory [a2], [a9].

A great deal of research at the moment is concerned with systems with unknown (or uncertain) parameters. Here adaptive control is important. This means that one attempts to design e.g. output feedback control laws which automatically adjust themselves to the unknown parameters.

A good idea of the current state of the art of system and control theory can be obtained by studying the proceedings of the yearly IEEE CDC (Institute of Electrical and Electronics Engineers Conference on Decision and Control) conferences and the biennial MTNS (Mathematical Theory of Networks and Systems) conferences.

References

| [a1] | S. Barnett, "Introduction to mathematical control theory" , Oxford Univ. Press (1975) |

| [a2] | R.W. Brockett, "Nonlinear systems and differential geometry" Proc. IEEE , 64 (1976) pp. 61–72 |

| [a3] | P. Brunovsky, "On the structure of optimal feedback systems" , Proc. Internat. Congress Mathematicians (Helsinki, 1978) , 2 , Acad. Sci. Fennicae (1980) pp. 841–846 |

| [a4] | R.F. Curtain, A.J. Pritchard, "Infinite-dimensional linear system theory" , Springer (1978) |

| [a5] | W.H. Fleming, R.W. Rishel, "Deterministic and stochastic optimal control" , Springer (1975) |

| [a6] | M. Fliess (ed.) M. Hazewinkel (ed.) , Algebraic and geometric methods in nonlinear control theory , Reidel (1986) |

| [a7] | M. Hazewinkel, "On mathematical control engineering" Gazette des Math. , 28, July (1985) pp. 133–151 |

| [a8] | M. Hazewinkel, "(Fine) moduli spaces for linear systems: what are they and what are they good for" C.I. Byrnes (ed.) C.F. Martin (ed.) , Geometric methods for linear system theory , Reidel (1980) pp. 125–193 |

| [a9] | M. Hazewinkel (ed.) J.C. Willems (ed.) , Stochastic systems: the mathematics of filtering and identification , Reidel (1981) |

| [a10] | V. Jurdjevic, I. Kupka, "Control systems on semi-simple Lie groups and their homogeneous spaces" Ann. Inst. Fourier , 31 (1981) pp. 151–179 |

| [a11] | H. Kwakernaak, R. Sivan, "Linear optimal control systems" , Wiley (1972) |

| [a12] | L. Markus, "Foundations of optimal control theory" , Wiley (1967) |

| [a13] | J.-L. Lions, "Optimal control of systems governed by partial differential equations" , Springer (1971) (Translated from French) |

| [a14] | C. Lobry, "Contrôlabilité des systèmes non linéaires" , Outils et modèles mathématiques pour l'automatique, l'analyse des systèmes et le traitement du signal , 1 , CNRS (1981) pp. 187–214 |

| [a15] | H.J. Sussmann, "Analytic stratifications and control theory" , Proc. Internat. Congress Mathematicians (Helsinki, 1978) , 2 , Acad. Sci. Fennicae (1980) pp. 865–871 |

| [a16] | A. Tannenbaum, "Invariance and system theory: algebraic and geometric aspects" , Springer (1981) |

| [a17] | J.C. Willems, "Recursive filtering" Statistica Neerlandica , 32 (1978) pp. 1–39 |

| [a18] | G. Wunsch (ed.) , Handbuch der Systemtheorie , Akademie Verlag (1986) |

Automatic control theory. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Automatic_control_theory&oldid=14989