Difference between revisions of "Sequential analysis"

Ulf Rehmann (talk | contribs) m (tex encoded by computer) |

Ulf Rehmann (talk | contribs) m (Undo revision 48674 by Ulf Rehmann (talk)) Tag: Undo |

||

| Line 1: | Line 1: | ||


{{MSC|62L10}} | {{MSC|62L10}} | ||

| Line 17: | Line 5: | ||

A branch of mathematical statistics. Its characteristic feature is that the number of observations to be performed (the moment of termination of the observations) is not fixed in advance but is chosen in the course of the experiment depending upon the results that are obtained. The intensive development and application of sequential methods in statistics was due to the work of A. Wald. He established that in the problem of decision (based on the results of independent observations) between two simple hypotheses the so-called sequential probability ratio test gives a considerable improvement in terms of the average number of observations required in comparison with the most-powerful test for deciding between two hypotheses (determined by the Neyman–Pearson lemma) for a fixed sample size and the same error probabilities. | A branch of mathematical statistics. Its characteristic feature is that the number of observations to be performed (the moment of termination of the observations) is not fixed in advance but is chosen in the course of the experiment depending upon the results that are obtained. The intensive development and application of sequential methods in statistics was due to the work of A. Wald. He established that in the problem of decision (based on the results of independent observations) between two simple hypotheses the so-called sequential probability ratio test gives a considerable improvement in terms of the average number of observations required in comparison with the most-powerful test for deciding between two hypotheses (determined by the Neyman–Pearson lemma) for a fixed sample size and the same error probabilities. | ||
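The saving in the average number of observations can be illustrated with a small simulation. The following is a minimal sketch, not Wald's original presentation: it assumes Gaussian observations with known variance, uses Wald's approximate boundaries log((1-β)/α) and log(β/(1-α)) for the log-likelihood ratio, and compares the simulated mean sample size with the sample size of the one-sided fixed-size test. The function names and parameter values are illustrative only.

```python
import math
import random
from statistics import NormalDist


def sprt_gaussian(sample, mu0, mu1, sigma, alpha, beta):
    """Wald's SPRT for N(mu0, sigma^2) against N(mu1, sigma^2).

    `sample` is a zero-argument callable returning one observation.
    Returns (decision, n): decision is 0 or 1, n is the number of draws.
    Assumes 0 < alpha, beta and alpha + beta < 1.
    """
    upper = math.log((1 - beta) / alpha)   # approximate boundary for accepting H1
    lower = math.log(beta / (1 - alpha))   # approximate boundary for accepting H0
    llr, n = 0.0, 0
    while lower < llr < upper:
        x = sample()
        n += 1
        # Increment log p1(x)/p0(x) of the log-likelihood ratio for Gaussian data.
        llr += (mu1 - mu0) * (x - (mu0 + mu1) / 2) / sigma ** 2
    return (1 if llr >= upper else 0), n


def fixed_sample_size(mu0, mu1, sigma, alpha, beta):
    """Sample size of the one-sided fixed-size test with error levels alpha, beta."""
    z = NormalDist().inv_cdf
    return math.ceil(((z(1 - alpha) + z(1 - beta)) * sigma / (mu1 - mu0)) ** 2)


def average_sample_number(mu_true, mu0, mu1, sigma, alpha, beta, trials=2000, seed=0):
    """Monte Carlo estimate of the mean number of observations used by the SPRT."""
    rng = random.Random(seed)
    total = 0
    for _ in range(trials):
        _, n = sprt_gaussian(lambda: rng.gauss(mu_true, sigma),
                             mu0, mu1, sigma, alpha, beta)
        total += n
    return total / trials


if __name__ == "__main__":
    args = (0.0, 0.5, 1.0, 0.05, 0.05)  # mu0, mu1, sigma, alpha, beta (illustrative)
    print("fixed sample size:", fixed_sample_size(*args))
    print("SPRT mean sample size under H0:", average_sample_number(0.0, *args))
```

With these illustrative values the sequential test should stop, on average, well before the fixed-size test; the exact simulated figure depends on the seed and the number of trials.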

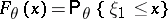

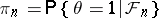

| − | The basic principles of sequential analysis consist of the following. Let | + | The basic principles of sequential analysis consist of the following. Let <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846001.png" /> be a sequence of independent identically-distributed random variables with distribution function <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846002.png" /> which depends on an unknown parameter <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846003.png" /> belonging to some parameter set <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846004.png" />. The problem consists of making a certain decision as to the true value of the unknown parameter <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846005.png" />, based on the results of observations. |

| − | be a sequence of independent identically-distributed random variables with distribution function | ||

| − | which depends on an unknown parameter | ||

| − | belonging to some parameter set | ||

| − | The problem consists of making a certain decision as to the true value of the unknown parameter | ||

| − | based on the results of observations. | ||

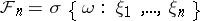

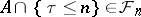

| − | A space | + | A space <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846006.png" /> of terminal decisions <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846007.png" /> (for the values of the parameter <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846008.png" />) and a termination rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s0846009.png" />, which determines the time when the observations are stopped and the terminal decision is taken, underlie any statistical decision problem. In classical methods the time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460010.png" /> is non-random and is fixed in advance; in sequential methods <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460011.png" /> is a random variable that does not depend on the "future" (a Markovian time, a stopping time). Formally, let <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460012.png" /> be the <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460013.png" />-algebra generated by the random variables <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460014.png" />.
A random variable <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460015.png" /> assuming the values <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460016.png" /> is called a Markovian time if the event <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460017.png" /> for any <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460018.png" /> (<img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460019.png" />). Let <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460020.png" /> be the family of all measurable sets <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460021.png" /> such that <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460022.png" /> for any <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460023.png" /> (cf. also [[Markov moment|Markov moment]]). If <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460024.png" /> is interpreted as the set of events observed up to some random time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460025.png" /> (inclusive), then <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460026.png" /> can be interpreted as the set of events observed up to the time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460027.png" /> (inclusive). 
A terminal decision <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460028.png" /> is an <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460029.png" />-measurable function with values in <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460030.png" />. A pair <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460031.png" /> of such functions is called a (sequential) decision rule. |

| − | of terminal final decisions | ||

| − | for the values of the parameter | ||

| − | and a termination rule | ||

| − | which determines the time when the observations are stopped and the terminal decision is taken, lie at the basis of any statistical decision problem. In classical methods the time | ||

| − | is non-random and is fixed in advance; in sequential methods | ||

| − | is a random variable independent of the "future" (a Markovian time, a stopping time). Formally, let | ||

| − | be the | ||

| − | algebra generated by the random variables | ||

| − | A random variable | ||

| − | assuming the values | ||

| − | is called a Markovian time if the event | ||

| − | for any | ||


| − | Let | ||

| − | be the family of all measurable sets | ||

| − | such that | ||

| − | for any | ||

| − | cf. also [[Markov moment|Markov moment]]). If | ||

| − | is interpreted as the set of events observed up to some random time | ||

| − | inclusive), then | ||

| − | can be interpreted as the set of events observed up to the time | ||

| − | inclusive). A terminal decision | ||

| − | is an | ||

| − | measurable function with values in | ||

| − | A pair | ||

| − | of such functions is called a (sequential) decision rule. | ||
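Because the formulas in this revision are embedded as images, the definitions of this paragraph can be restated in LaTeX as a reading aid. The symbols below ($\xi_i$, $\mathcal{F}_n$, $\tau$, $D$, $d$, $\delta$) are assumed standard notation and were not read off the images; the Markov-time condition is written in its common form $\{\tau \le n\} \in \mathcal{F}_n$.

```latex
\[
\mathcal{F}_n = \sigma\{\xi_1,\dots,\xi_n\}, \qquad
\{\tau \le n\} \in \mathcal{F}_n \quad \text{for every } n \ge 0,
\]
\[
\mathcal{F}_\tau = \bigl\{ A : A \cap \{\tau \le n\} \in \mathcal{F}_n
  \ \text{for every } n \ge 0 \bigr\},
\]
\[
\delta = (\tau, d), \qquad
d \ \text{is } \mathcal{F}_\tau\text{-measurable with values in } D .
\]
```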

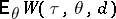

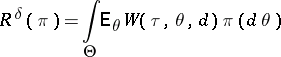

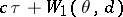

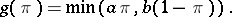

| − | In order to choose the "optimal" decision rule among all decision rules one usually defines a risk function | + | In order to choose the "optimal" decision rule among all decision rules one usually defines a risk function <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460032.png" /> and considers the mathematical expectation <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460033.png" />. There are different approaches to defining the optimal decision rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460034.png" />. One of them, the Bayesian approach, is based on the assumption that the parameter <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460035.png" /> is a random variable with a priori distribution <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460036.png" />. Then one can speak of the <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460037.png" />-risk |

| − | and considers the mathematical expectation | ||

| − | There are different approaches to defining the optimal decision rule | ||

| − | One of them, the Bayesian approach, is based on the assumption that the parameter | ||

| − | is a random variable with a priori distribution | ||

| − | Then one can speak of the | ||

| − | risk | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460038.png" /></td> </tr></table> | |


| − | and one calls a rule | + | and one calls a rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460039.png" /> the optimal Bayesian (or <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460041.png" />-optimal) solution if <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460043.png" /> for any other (admissible) rule. The most widely used form of the risk function <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460044.png" /> is <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460045.png" />, where the constant <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460046.png" /> is interpreted as the cost of a unit observation and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460047.png" /> is a loss function resulting from the terminal decision. |

| − | the optimal Bayesian (or | ||

| − | optimal) solution if | ||

| − | for any other (admissible) rule. The most widely used form of the risk function | ||

| − | is | ||

| − | where the constant | ||

| − | is interpreted as the cost of a unit observation and | ||

| − | is a loss function resulting from the terminal decision. | ||
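For readers who cannot see the formula images, the Bayesian set-up described in words above can be written out as follows. This is a sketch in assumed notation ($R^{\pi}$ for the $\pi$-risk, $\delta^{*}$ for the optimal rule, $W_1$ for the terminal loss), chosen to match the prose rather than transcribed from the images.

```latex
\[
R^{\pi}(\delta) = \int_{\Theta} \mathsf{E}_{\theta}\, W(\tau,\theta,d)\,\pi(d\theta),
\qquad
R^{\pi}(\delta^{*}) \le R^{\pi}(\delta) \ \text{ for every admissible } \delta,
\]
\[
W(\tau,\theta,d) = c\,\tau + W_{1}(\theta,d),
\]
```

where $c$ is the cost of one observation and $W_{1}(\theta,d)$ is the loss from taking the terminal decision $d$ when the parameter equals $\theta$.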

| − | In Bayesian problems it is usually not difficult to find the terminal solution | + | In Bayesian problems it is usually not difficult to find the terminal decision <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460048.png" />; the main effort is therefore concentrated on finding the optimal termination time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460049.png" />. Moreover, most problems of sequential analysis fit the following scheme of "optimal termination rules". |

| − | thus the main efforts are concentrated on finding the optimal termination time | ||

| − | Moreover, the majority of problems of sequential analysis fall into the following pattern of "optimal termination rules" . | ||

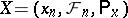

| − | Let | + | Let <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460050.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460051.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460052.png" />, be a Markov chain in the state space <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460053.png" />, where <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460054.png" /> is the state of the chain at the time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460055.png" />, the <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460056.png" />-algebra <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460057.png" /> is interpreted as the set of events observed up to the time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460058.png" /> (inclusive), and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460059.png" /> is a probability distribution corresponding to the initial state <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460060.png" />. It is assumed that by stopping the observation at the time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460061.png" /> one obtains the gain <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460062.png" />. 
Then the average gain resulting from termination at time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460063.png" /> is <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460064.png" />, where <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460065.png" /> is the initial state. The function <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460066.png" />, where the supremum is taken over all (finite) termination times <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460067.png" />, is called the cost, and the time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460068.png" /> for which <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460069.png" /> for all <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460070.png" /> is called the <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460072.png" />-optimal stopping time. <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460073.png" />-optimal times are called optimal. The main problems in the theory of "optimal stopping rules" are the following: What is the structure of the cost <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460074.png" />, how to find it, when do <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460075.png" />-optimal and optimal times exist, and what is their structure? 
One of the typical results concerning these questions is discussed below. |


| − | be a Markov chain in the state space | ||

| − | where | ||

| − | is the state of the chain at the time | ||

| − | the | ||

| − | algebra | ||

| − | is interpreted as the set of events observed up to the time | ||

| − | inclusive), and | ||

| − | is a probability distribution corresponding to the initial state | ||

| − | It is assumed that by stopping the observation at the time | ||

| − | one obtains the gain | ||

| − | Then the average gain resulting from termination at time | ||

| − | is | ||

| − | where | ||

| − | is the initial state. The function | ||

| − | where the supremum is taken over all (finite) termination times | ||

| − | is called the cost, and the time | ||

| − | for which | ||

| − | for all | ||

| − | is called the | ||

| − | optimal stopping time. | ||

| − | optimal times are called optimal. The main problems in the theory of "optimal stopping rules" are the following: What is the structure of the cost | ||

| − | how to find it, when do | ||

| − | optimal and optimal times exist, and what is their structure? One of the typical results concerning these questions is discussed below. | ||

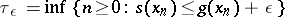

| − | Let the function | + | Let the function <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460076.png" /> be bounded: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460077.png" />. Then the cost <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460078.png" /> is the least excessive majorant of <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460079.png" />, i.e. the smallest of the functions <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460080.png" /> satisfying the two following conditions: |

| − | be bounded: | ||

| − | Then the cost | ||

| − | is the least excessive majorant of | ||

| − | i.e. the smallest of the functions | ||

| − | satisfying the two following conditions: | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460081.png" /></td> </tr></table> | |


| − | where | + | where <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460082.png" />. Moreover, |

| − | Moreover, | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460083.png" /></td> </tr></table> | |

| − | |||

| − | s | ||

| − | |||

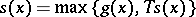

| − | is the | + | is the <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460084.png" />-optimal time for any <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460085.png" />, the cost <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460086.png" /> satisfies the Wald–Bellman equation |

| − | optimal time for any | ||

| − | the cost | ||

| − | satisfies the Wald–Bellman equation | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460087.png" /></td> </tr></table> | |


and can be obtained by the formula | and can be obtained by the formula | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460088.png" /></td> </tr></table> | |


where | where | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460089.png" /></td> </tr></table> | |


| − | In the case when the set | + | In the case when the set <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460090.png" /> is finite, the time |

| − | is finite, the time | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460091.png" /></td> </tr></table> | |


| − | is optimal. In the general case the time | + | is optimal. In the general case the time <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460092.png" /> is optimal if <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460093.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460094.png" />. |

| − | is optimal if | ||
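For a finite state space, the least excessive majorant can be computed numerically by iterating the Wald–Bellman operator. The sketch below assumes the iteration takes the usual form: start from the gain g and repeatedly replace the current function by max(g, T·), where T is the one-step transition operator, until the values settle. The names `P`, `g`, `optimal_stopping_value` and `stopping_set` are hypothetical, and the example chain (a symmetric random walk on five states with absorbing ends) is chosen only for illustration.

```python
def optimal_stopping_value(P, g, tol=1e-12, max_iter=10_000):
    """Iterate s <- max(g, P s) from s = g on a finite Markov chain.

    P is a row-stochastic transition matrix (list of rows), g the gain vector.
    Returns an approximation of the least excessive majorant of g.
    """
    s = list(g)
    for _ in range(max_iter):
        # One-step expectation (T s)(x) = sum_y P[x][y] * s[y].
        ts = [sum(p * v for p, v in zip(row, s)) for row in P]
        new = [max(gx, tx) for gx, tx in zip(g, ts)]
        if max(abs(a - b) for a, b in zip(new, s)) < tol:
            return new
        s = new
    return s


def stopping_set(s, g, eps=1e-9):
    """States where the value equals the gain, i.e. where stopping is optimal."""
    return [x for x in range(len(g)) if s[x] - g[x] <= eps]


if __name__ == "__main__":
    # Symmetric random walk on {0,...,4}, absorbed at 0 and 4 (illustrative).
    P = [
        [1.0, 0.0, 0.0, 0.0, 0.0],
        [0.5, 0.0, 0.5, 0.0, 0.0],
        [0.0, 0.5, 0.0, 0.5, 0.0],
        [0.0, 0.0, 0.5, 0.0, 0.5],
        [0.0, 0.0, 0.0, 0.0, 1.0],
    ]
    g = [0.0, 1.0, 0.0, 0.0, 4.0]
    s = optimal_stopping_value(P, g)
    print("value:", [round(v, 6) for v in s])
    print("stop in states:", stopping_set(s, g))
```

In this example the excessive functions are the concave ones, so the computed value is the smallest concave majorant of g, and the first hitting time of the reported stopping states is an optimal time.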

| − | |||

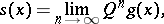

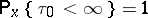

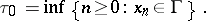

Let | Let | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460095.png" /></td> </tr></table> | |


By definition, | By definition, | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460096.png" /></td> </tr></table> | |


| − | In other words, one should stop the observations upon hitting the set | + | In other words, one should stop the observations upon hitting the set <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460097.png" /> for the first time. Accordingly, the set <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460098.png" /> is called the set of continuation of observations and the set <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s08460099.png" /> is called the set of termination of observations. |

| − | for the first time. Accordingly, the set | ||

| − | is called the set of continuation of observations and the set | ||

| − | is called the set of termination of observations. | ||

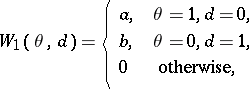

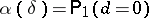

| − | These results can be illustrated by the problem of deciding between two simple hypotheses, for which Wald has demonstrated the advantage of sequential methods as compared to classical ones. Let the parameter | + | These results can be illustrated by the problem of deciding between two simple hypotheses, for which Wald has demonstrated the advantage of sequential methods as compared to classical ones. Let the parameter <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600100.png" /> take two values 1 and 0 with a priori probabilities <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600101.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600102.png" />, respectively, and let the set of termination decisions <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600103.png" /> consist of two points as well: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600104.png" /> (accept hypothesis <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600105.png" />: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600106.png" />) and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600107.png" /> (accept hypothesis <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600108.png" />: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600109.png" />). If the function <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600110.png" /> is chosen in the form |

| − | take two values 1 and 0 with a priori probabilities | ||

| − | and | ||

| − | respectively, and let the set of termination decisions | ||

| − | consist of two points as well: | ||

| − | accept hypothesis | ||


| − | and | ||

| − | accept hypothesis | ||


| − | If the function | ||

| − | is chosen in the form | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600111.png" /></td> </tr></table> | |


and one puts | and one puts | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600112.png" /></td> </tr></table> | |

| − | |||

| − | |||

| − | |||

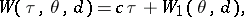

then the expression | then the expression | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600113.png" /></td> </tr></table> | |


| − | is obtained for | + | is obtained for <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600114.png" />, where |

| − | where | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600115.png" /></td> </tr></table> | |


| − | are the error probabilities of the first and second kinds, and | + | are the error probabilities of the first and second kinds, and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600116.png" /> denotes the probability distribution in the space of observations corresponding to the a priori distribution <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600117.png" />. If <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600118.png" /> is the a posteriori probability of the hypothesis <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600119.png" />: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600120.png" /> with respect to the <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600121.png" />-algebra <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600122.png" />, then |

| − | denotes the probability distribution in the space of observations corresponding to the a priori distribution | ||

| − | If | ||

| − | is the a posteriori probability of the hypothesis | ||


| − | with respect to the | ||

| − | algebra | ||

| − | then | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600123.png" /></td> </tr></table> | |


where | where | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600124.png" /></td> </tr></table> | |


| − | From the general theory of optimal stopping rules applied to | + | From the general theory of optimal stopping rules applied to <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600125.png" /> it follows that the function <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600126.png" /> satisfies the equation |

| − | it follows that the function | ||

| − | satisfies the equation | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600127.png" /></td> </tr></table> | |


| − | Hence, by virtue of concavity of the functions | + | Hence, by virtue of concavity of the functions <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600128.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600129.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600130.png" />, it follows that there are two numbers <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600131.png" /> such that the domain of continuation of observations is <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600132.png" />, and the domain of termination of observations is <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600133.png" />. Here, the termination time |


| − | it follows that there are two numbers | ||

| − | such that the domain of continuation of observations is | ||

| − | and the domain of termination of observations is | ||

| − | Here, the termination time | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600134.png" /></td> </tr></table> | |

| − | is optimal | + | is optimal <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600135.png" />. |

| − | If | + | If <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600136.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600137.png" /> are the densities of the distributions <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600138.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600139.png" /> (with respect to the measure <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600140.png" />), and |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600141.png" /></td> </tr></table> | |

| − | |||

| − | |||

| − | |||

| − | |||

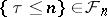

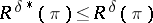

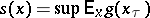

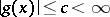

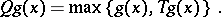

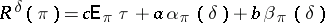

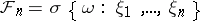

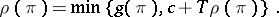

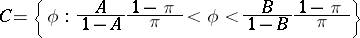

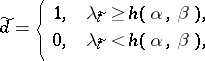

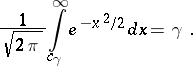

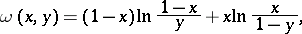

is the likelihood ratio, then the domain of continuation of observations (see Fig. a) can be written in the form | is the likelihood ratio, then the domain of continuation of observations (see Fig. a) can be written in the form | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600142.png" /></td> </tr></table> | |

| − | and | + | and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600143.png" />. <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600144.png" />: domain of continuation of observations <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600145.png" />: domain of termination of observations |

<img style="border:1px solid;" src="https://www.encyclopediaofmath.org/legacyimages/common_img/s084600a.gif" /> | <img style="border:1px solid;" src="https://www.encyclopediaofmath.org/legacyimages/common_img/s084600a.gif" /> | ||

| Line 295: | Line 103: | ||

Figure: s084600a | Figure: s084600a | ||

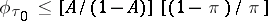

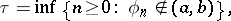

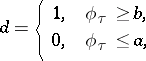

| − | In addition, if | + | In addition, if <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600146.png" />, then the decision <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600147.png" /> is taken, i.e. the hypothesis <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600148.png" />: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600149.png" /> is accepted. If, however, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600150.png" />, then the hypothesis <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600151.png" />: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600152.png" /> is accepted. The structure of this optimal decision rule is the same also for the problem of decision between two hypotheses formulated in terms of a conditional extremum in the following way. 
For each decision rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600153.png" /> one introduces the error probabilities <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600154.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600155.png" /> and fixes two numbers <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600156.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600157.png" />; let, further, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600158.png" /> be the set of all decision rules when <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600159.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600160.png" />, and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600161.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600162.png" />. The following fundamental result was obtained by Wald. If <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600163.png" /> and if among all criteria <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600164.png" /> based on the likelihood ratio <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600165.png" /> and of the form |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600166.png" /></td> </tr></table> | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600167.png" /></td> </tr></table> | |

| − | there are | + | there are <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600168.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600169.png" /> such that the error probabilities of the first and second kinds are exactly <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600170.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600171.png" />, then the decision rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600172.png" /> with <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600173.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600174.png" /> is optimal in the class <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600175.png" /> in the sense that |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600176.png" /></td> </tr></table> | |

| − | for all | + | for all <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600177.png" />. |

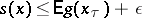

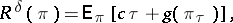

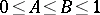

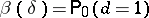

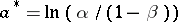

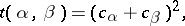

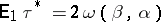

| − | The advantage of the sequential decision rule | + | The advantage of the sequential decision rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600178.png" /> as compared to the classical one is easier to illustrate by the example of decision between two hypotheses <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600179.png" />: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600180.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600181.png" />: <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600182.png" /> with respect to the local average value <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600183.png" /> of a Wiener process <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600184.png" /> with unit diffusion. The optimal sequential decision rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600185.png" /> providing the given probabilities <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600186.png" /> and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600187.png" /> of errors of the first and second kinds, respectively, is described as follows: |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600188.png" /></td> </tr></table> | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600189.png" /></td> </tr></table> | |

| − | |||

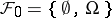

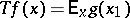

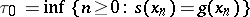

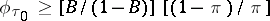

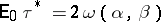

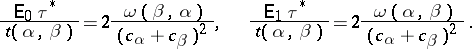

| − | where | + | where <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600190.png" /> and the likelihood ratio (the density of the measure corresponding to <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600191.png" /> with respect to the measure corresponding to <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600192.png" />) equals <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600193.png" />, and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600194.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600195.png" /> (see Fig. b). <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600196.png" />: domain of continuation of observations <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600197.png" />: domain of termination of observations |

<img style="border:1px solid;" src="https://www.encyclopediaofmath.org/legacyimages/common_img/s084600b.gif" /> | <img style="border:1px solid;" src="https://www.encyclopediaofmath.org/legacyimages/common_img/s084600b.gif" /> | ||

| Line 376: | Line 127: | ||

Figure: s084600b | Figure: s084600b | ||

| − | The optimal classical rule | + | The optimal classical rule <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600198.png" /> (using the Neyman–Pearson lemma) is described in the following way: |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600199.png" /></td> </tr></table> | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600200.png" /></td> </tr></table> | |

| − | |||

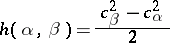

where | where | ||

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600201.png" /></td> </tr></table> | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600202.png" /></td> </tr></table> | |

| − | + | and <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600203.png" /> is the solution of the equation | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600204.png" /></td> </tr></table> | |

| − | + | Since <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600205.png" />, <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600206.png" />, where | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600207.png" /></td> </tr></table> | |

one has | one has | ||

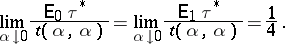

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600208.png" /></td> </tr></table> | |

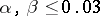

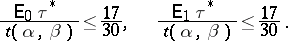

| − | + | Numerical calculations show that for <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600209.png" />, | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600210.png" /></td> </tr></table> | |

| − | + | In other words, for the values of errors of the first and the second kinds considered, the optimal sequential method of decision between two hypotheses needs approximately half the observations of the optimal method with a fixed number of observations. Moreover, if <img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600211.png" />, then | |

| − | + | <table class="eq" style="width:100%;"> <tr><td valign="top" style="width:94%;text-align:center;"><img align="absmiddle" border="0" src="https://www.encyclopediaofmath.org/legacyimages/s/s084/s084600/s084600212.png" /></td> </tr></table> | |

====References==== | ====References==== | ||

| Line 477: | Line 169: | ||

====Comments==== | ====Comments==== | ||

| + | |||

====References==== | ====References==== | ||

Revision as of 14:53, 7 June 2020

2020 Mathematics Subject Classification: Primary: 62L10 [MSN][ZBL]

A branch of mathematical statistics. Its characteristic feature is that the number of observations to be performed (the moment of termination of the observations) is not fixed in advance but is chosen in the course of the experiment depending upon the results that are obtained. The intensive development and application of sequential methods in statistics was due to the work of A. Wald. He established that in the problem of decision (based on the results of independent observations) between two simple hypotheses the so-called sequential probability ratio test gives a considerable improvement in terms of the average number of observations required in comparison with the most-powerful test for deciding between two hypotheses (determined by the Neyman–Pearson lemma) for a fixed sample size and the same error probabilities.

The basic principles of sequential analysis consist of the following. Let $\xi_1, \xi_2, \dots$ be a sequence of independent identically-distributed random variables with distribution function $F_\theta(x)$ which depends on an unknown parameter $\theta$ belonging to some parameter set $\Theta$. The problem consists of making a certain decision as to the true value of the unknown parameter $\theta$, based on the results of observations.

A space $D$ of terminal decisions $d$ (for the values of the parameter $\theta$) and a termination rule $\tau$, which determines the time when the observations are stopped and the terminal decision is taken, lie at the basis of any statistical decision problem. In classical methods the time $\tau$ is non-random and is fixed in advance; in sequential methods $\tau$ is a random variable independent of the "future" (a Markovian time, a stopping time). Formally, let $\mathcal{F}_n$ be the $\sigma$-algebra generated by the random variables $\xi_1, \dots, \xi_n$. A random variable $\tau$ assuming the values $0, 1, \dots, +\infty$ is called a Markovian time if the event $\{\tau \le n\} \in \mathcal{F}_n$ for any $n \ge 0$ ($\mathcal{F}_0 = \{\emptyset, \Omega\}$). Let $\mathcal{F}_\tau$ be the family of all measurable sets $A$ such that $A \cap \{\tau \le n\} \in \mathcal{F}_n$ for any $n \ge 0$ (cf. also Markov moment). If $\mathcal{F}_n$ is interpreted as the set of events observed up to the time $n$ (inclusive), then $\mathcal{F}_\tau$ can be interpreted as the set of events observed up to the random time $\tau$ (inclusive). A terminal decision $d$ is an $\mathcal{F}_\tau$-measurable function with values in $D$. A pair $\delta = (\tau, d)$ of such functions is called a (sequential) decision rule.

In order to choose the "optimal" decision rule among all decision rules one usually defines a risk function $W(\tau, \theta, d)$ and considers the mathematical expectation $\mathsf{E}_\theta W(\tau, \theta, d)$. There are different approaches to defining the optimal decision rule $\delta^* = (\tau^*, d^*)$. One of them, the Bayesian approach, is based on the assumption that the parameter $\theta$ is a random variable with a priori distribution $\pi$. Then one can speak of the $\pi$-risk

$$R^\delta(\pi) = \int_\Theta \mathsf{E}_\theta W(\tau, \theta, d)\, \pi(d\theta)$$

and one calls a rule $\delta^*$ the optimal Bayesian (or $\pi$-optimal) solution if $R^{\delta^*}(\pi) \le R^\delta(\pi)$ for any other (admissible) rule $\delta$. The most widely used form of the risk function $W(\tau, \theta, d)$ is $c\tau + W_1(\theta, d)$, where the constant $c \ge 0$ is interpreted as the cost of a unit observation and $W_1(\theta, d)$ is a loss function resulting from the terminal decision.

In Bayesian problems it is usually not difficult to find the terminal solution $d^*$; thus the main efforts are concentrated on finding the optimal termination time $\tau^*$. Moreover, the majority of problems of sequential analysis fall into the following pattern of "optimal termination rules".

Let $X = (x_n, \mathcal{F}_n, \mathsf{P}_x)$, $n \ge 0$, $x \in E$, be a Markov chain in the state space $(E, \mathcal{B})$, where $x_n$ is the state of the chain at the time $n$, the $\sigma$-algebra $\mathcal{F}_n$ is interpreted as the set of events observed up to the time $n$ (inclusive), and $\mathsf{P}_x$ is a probability distribution corresponding to the initial state $x \in E$. It is assumed that by stopping the observation at the time $n$ one obtains the gain $g(x_n)$. Then the average gain resulting from termination at time $\tau$ is $\mathsf{E}_x g(x_\tau)$, where $x$ is the initial state. The function $s(x) = \sup_\tau \mathsf{E}_x g(x_\tau)$, where the supremum is taken over all (finite) termination times $\tau$, is called the cost, and the time $\tau_\epsilon$ for which $s(x) \le \mathsf{E}_x g(x_{\tau_\epsilon}) + \epsilon$ for all $x \in E$ is called the $\epsilon$-optimal stopping time. $0$-optimal times are called optimal. The main problems in the theory of "optimal stopping rules" are the following: What is the structure of the cost $s(x)$, how to find it, when do $\epsilon$-optimal and optimal times exist, and what is their structure? One of the typical results concerning these questions is discussed below.

Let the function $g(x)$ be bounded: $|g(x)| \le c < \infty$. Then the cost $s(x)$ is the least excessive majorant of $g(x)$, i.e. the smallest of the functions $f(x)$ satisfying the two following conditions:

$$g(x) \le f(x), \qquad Tf(x) \le f(x),$$

where $Tf(x) = \mathsf{E}_x f(x_1)$. Moreover,

$$\tau_\epsilon = \inf\{n \ge 0 : s(x_n) \le g(x_n) + \epsilon\}$$

is the $\epsilon$-optimal time for any $\epsilon > 0$, the cost $s(x)$ satisfies the Wald–Bellman equation

$$s(x) = \max\{g(x), Ts(x)\}$$

and can be obtained by the formula

$$s(x) = \lim_{n \to \infty} Q^n g(x),$$

where

$$Qg(x) = \max\{g(x), Tg(x)\}.$$

In the case when the set $E$ is finite, the time

$$\tau_0 = \inf\{n \ge 0 : s(x_n) = g(x_n)\}$$

is optimal. In the general case the time $\tau_0$ is optimal if $\mathsf{P}_x\{\tau_0 < \infty\} = 1$, $x \in E$.

Let

$$C = \{x : s(x) > g(x)\}, \qquad \Gamma = \{x : s(x) = g(x)\}.$$

By definition,

$$\tau_0 = \inf\{n \ge 0 : x_n \in \Gamma\}.$$

In other words, one should stop the observations upon hitting the set $\Gamma$ for the first time. Accordingly, the set $C$ is called the set of continuation of observations and the set $\Gamma$ is called the set of termination of observations.
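The successive-approximation scheme for the cost can be tried numerically on a finite chain. The following Python sketch is illustrative only and rests on stated assumptions: the Wald–Bellman equation is taken in its standard form $s(x) = \max\{g(x), Ts(x)\}$ with $Ts(x) = \mathsf{E}_x s(x_1)$, and the names `optimal_stopping_cost`, `P` and `g`, as well as the example chain, are hypothetical and do not come from the article.

```python
def optimal_stopping_cost(P, g, tol=1e-12, max_iter=10_000):
    """Approximate the cost s by iterating s <- max(g, P s), starting from s = g.

    P   -- transition matrix of a finite Markov chain (list of rows), an assumption
    g   -- gain obtained when stopping in each state
    Returns the approximate cost and the states where it coincides with g.
    """
    n = len(g)
    s = list(g)
    for _ in range(max_iter):
        # One-step expected cost: (T s)(i) = sum_j P[i][j] * s[j]
        Ts = [sum(P[i][j] * s[j] for j in range(n)) for i in range(n)]
        new_s = [max(g[i], Ts[i]) for i in range(n)]
        converged = max(abs(new_s[i] - s[i]) for i in range(n)) < tol
        s = new_s
        if converged:
            break
    # States where stopping is at least as good as continuing (termination set)
    stop_set = [i for i in range(n) if abs(s[i] - g[i]) <= 1e-9]
    return s, stop_set


if __name__ == "__main__":
    # Hypothetical example: symmetric random walk on {0,...,4}, absorbed at 0 and 4.
    P = [
        [1.0, 0.0, 0.0, 0.0, 0.0],
        [0.5, 0.0, 0.5, 0.0, 0.0],
        [0.0, 0.5, 0.0, 0.5, 0.0],
        [0.0, 0.0, 0.5, 0.0, 0.5],
        [0.0, 0.0, 0.0, 0.0, 1.0],
    ]
    g = [0.0, 3.0, 0.0, 0.0, 0.0]
    cost, stop_set = optimal_stopping_cost(P, g)
    print(cost, stop_set)
```

In this example the computed cost is the smallest concave function dominating the gain, and the states not listed in `stop_set` form the set of continuation of observations.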

These results can be illustrated by the problem of deciding between two simple hypotheses, for which Wald has demonstrated the advantage of sequential methods as compared to classical ones. Let the parameter $\theta$ take two values 1 and 0 with a priori probabilities $\pi$ and $1 - \pi$, respectively, and let the set of terminal decisions $D$ consist of two points as well: $d = 1$ (accept hypothesis $H_1$: $\theta = 1$) and $d = 0$ (accept hypothesis $H_0$: $\theta = 0$). If the function $W_1(\theta, d)$ is chosen in the form

$$W_1(\theta, d) = \begin{cases} \ell_1, & \theta = 1,\ d = 0, \\ \ell_0, & \theta = 0,\ d = 1, \\ 0 & \text{otherwise}, \end{cases}$$

and one puts

$$W(\tau, \theta, d) = c\tau + W_1(\theta, d),$$

then the expression

$$R^\delta(\pi) = c\,\mathsf{E}_\pi \tau + \ell_1 \alpha_\pi(\delta) + \ell_0 \beta_\pi(\delta)$$

is obtained for $R^\delta(\pi)$, where

$$\alpha_\pi(\delta) = \mathsf{P}_\pi\{d = 0,\ \theta = 1\}, \qquad \beta_\pi(\delta) = \mathsf{P}_\pi\{d = 1,\ \theta = 0\}$$

are the error probabilities of the first and second kinds, and $\mathsf{P}_\pi$ denotes the probability distribution in the space of observations corresponding to the a priori distribution $\pi$. If $\pi_n = \mathsf{P}_\pi\{\theta = 1 \mid \mathcal{F}_n\}$ is the a posteriori probability of the hypothesis $H_1$: $\theta = 1$ with respect to the $\sigma$-algebra $\mathcal{F}_n$, then, for the terminal decision that minimizes the expected loss,

$$R^\delta(\pi) = \mathsf{E}_\pi\left[c\tau + g(\pi_\tau)\right],$$

where

$$g(\pi) = \min\{\ell_1 \pi,\ \ell_0 (1 - \pi)\}.$$

From the general theory of optimal stopping rules applied to $x_n = (\pi_n, n)$ it follows that the function $\rho(\pi) = \inf_\delta R^\delta(\pi)$ satisfies the equation

$$\rho(\pi) = \min\{g(\pi),\ c + T\rho(\pi)\}.$$

Hence, by virtue of concavity of the functions $\rho(\pi)$, $g(\pi)$, $T\rho(\pi)$, it follows that there are two numbers $0 \le A \le B \le 1$ such that the domain of continuation of observations is $\{\pi : A < \pi < B\}$, and the domain of termination of observations is $\Gamma = [0, 1] \setminus (A, B)$. Here, the termination time

$$\tau_0 = \inf\{n \ge 0 : \pi_n \in \Gamma\}$$

is optimal ($\pi_0 = \pi$).

If $p_0(x)$ and $p_1(x)$ are the densities of the distributions $F_0(x)$ and $F_1(x)$ (with respect to the measure $\mu$), and

$$\phi_n = \frac{p_1(\xi_1) \cdots p_1(\xi_n)}{p_0(\xi_1) \cdots p_0(\xi_n)}$$

is the likelihood ratio, then the domain of continuation of observations (see Fig. a) can be written in the form

$$C = \left\{\phi : \frac{A}{1 - A}\,\frac{1 - \pi}{\pi} < \phi < \frac{B}{1 - B}\,\frac{1 - \pi}{\pi}\right\}$$

and $\tau_0 = \inf\{n \ge 0 : \phi_n \notin C\}$. Fig. a marks the domain of continuation of observations and the domain of termination of observations.

Figure: s084600a

In addition, if $\phi_{\tau_0} \ge \frac{B}{1 - B}\,\frac{1 - \pi}{\pi}$, then the decision $d = 1$ is taken, i.e. the hypothesis $H_1$: $\theta = 1$ is accepted. If, however, $\phi_{\tau_0} \le \frac{A}{1 - A}\,\frac{1 - \pi}{\pi}$, then the hypothesis $H_0$: $\theta = 0$ is accepted. The structure of this optimal decision rule is the same also for the problem of decision between two hypotheses formulated in terms of a conditional extremum in the following way. For each decision rule $\delta = (\tau, d)$ one introduces the error probabilities $\alpha(\delta) = \mathsf{P}_1(d = 0)$, $\beta(\delta) = \mathsf{P}_0(d = 1)$ and fixes two numbers $\alpha \ge 0$ and $\beta \ge 0$; let, further, $\Delta(\alpha, \beta)$ be the set of all decision rules with $\alpha(\delta) \le \alpha$, $\beta(\delta) \le \beta$, and $\mathsf{E}_0 \tau < \infty$, $\mathsf{E}_1 \tau < \infty$. The following fundamental result was obtained by Wald. If $\alpha + \beta < 1$ and if among all criteria $\delta = (\tau, d)$ based on the likelihood ratio $\phi_n$ and of the form

$$\tau = \inf\{n \ge 0 : \phi_n \notin (a, b)\},$$

$$d = \begin{cases} 1, & \phi_\tau \ge b, \\ 0, & \phi_\tau \le a, \end{cases}$$

there are $a = a^*$ and $b = b^*$ such that the error probabilities of the first and second kinds are exactly $\alpha$ and $\beta$, then the decision rule $\delta^* = (\tau^*, d^*)$ with $a = a^*$ and $b = b^*$ is optimal in the class $\Delta(\alpha, \beta)$ in the sense that

$$\mathsf{E}_0 \tau^* \le \mathsf{E}_0 \tau, \qquad \mathsf{E}_1 \tau^* \le \mathsf{E}_1 \tau$$

for all $\delta \in \Delta(\alpha, \beta)$.
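A two-threshold rule of this type can be sketched in a few lines. The Python example below is a minimal illustration under explicit assumptions, not Wald's exact construction: the observations are Bernoulli with success probability `p0` under $H_0$ and `p1` under $H_1$, the thresholds are applied to the logarithm of the likelihood ratio, and they are set by Wald's classical approximations $\ln\frac{\alpha}{1-\beta}$ and $\ln\frac{1-\alpha}{\beta}$ (with $\alpha$ the probability of rejecting a true $H_1$ and $\beta$ the probability of rejecting a true $H_0$), which ignore the overshoot over the boundary. The function name `sprt_bernoulli` is hypothetical.

```python
import math


def sprt_bernoulli(observations, p0, p1, alpha, beta):
    """Sequential probability ratio test for Bernoulli data (illustrative sketch).

    alpha -- target probability of accepting H0 when H1 is true (assumed convention)
    beta  -- target probability of accepting H1 when H0 is true (assumed convention)
    Returns (decision, n): decision is 1 (accept H1), 0 (accept H0), or None if
    the data ran out before a threshold was crossed; n is the number of
    observations used.
    """
    lower = math.log(alpha / (1.0 - beta))   # approximate lower threshold
    upper = math.log((1.0 - alpha) / beta)   # approximate upper threshold
    llr = 0.0                                # log-likelihood ratio so far
    n = 0
    for n, x in enumerate(observations, start=1):
        if x:
            llr += math.log(p1 / p0)
        else:
            llr += math.log((1.0 - p1) / (1.0 - p0))
        if llr >= upper:
            return 1, n
        if llr <= lower:
            return 0, n
    return None, n


if __name__ == "__main__":
    print(sprt_bernoulli([1] * 20, p0=0.5, p1=0.8, alpha=0.05, beta=0.05))
    print(sprt_bernoulli([0] * 20, p0=0.5, p1=0.8, alpha=0.05, beta=0.05))
```

The number of observations actually used depends on the data: a run of successes stops the test after 7 observations in this setting, a run of failures after 4.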

The advantage of the sequential decision rule $\delta^*$ as compared to the classical one is easier to illustrate by the example of decision between two hypotheses $H_0$: $\theta = 0$ and $H_1$: $\theta = 1$ with respect to the local average value $\theta$ of a Wiener process $\xi_t$ with unit diffusion. The optimal sequential decision rule $\delta^* = (\tau^*, d^*)$ providing the given probabilities $\alpha$ and $\beta$ of errors of the first and second kinds, respectively, is described as follows:

$$\tau^* = \inf\{t \ge 0 : \lambda_t \notin (a, b)\},$$

$$d^* = \begin{cases} 1, & \lambda_{\tau^*} \ge b, \\ 0, & \lambda_{\tau^*} \le a, \end{cases}$$

where $\lambda_t = \ln \phi_t$ and the likelihood ratio (the density of the measure corresponding to $\theta = 1$ with respect to the measure corresponding to $\theta = 0$) equals $\phi_t = \exp(\xi_t - t/2)$, and $b = \ln\frac{1 - \alpha}{\beta}$, $a = \ln\frac{\alpha}{1 - \beta}$ (see Fig. b). Fig. b marks the domain of continuation of observations and the domain of termination of observations.

Figure: s084600b
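The thresholds for the Wiener process can be checked numerically. The sketch below assumes the convention $\alpha = P_1(d = 0)$, $\beta = P_0(d = 1)$ and the closed forms $a^* = \ln(\alpha/(1-\beta))$, $b^* = \ln((1-\alpha)/\beta)$; the function names are hypothetical. Because the paths are continuous, $\lambda_t$ leaves $(a^*, b^*)$ exactly at a boundary, so the exit probabilities follow from optional stopping applied to the martingale $e^{\lambda_t}$ (under $\theta = 0$) and $e^{-\lambda_t}$ (under $\theta = 1$).

```python
import math


def wiener_sprt_thresholds(alpha: float, beta: float):
    """Return (a*, b*) for the log-likelihood ratio lambda_t (assumed closed form)."""
    if not (alpha > 0 and beta > 0 and alpha + beta < 1):
        raise ValueError("need alpha > 0, beta > 0 and alpha + beta < 1")
    a_star = math.log(alpha / (1.0 - beta))
    b_star = math.log((1.0 - alpha) / beta)
    return a_star, b_star


def exit_error_probabilities(a_star: float, b_star: float):
    """Error probabilities of the two-sided exit from (a*, b*), with a* < 0 < b*.

    Under theta = 0, exp(lambda_t) is a martingale started at 1, so
        1 = P0(d=1) * exp(b*) + (1 - P0(d=1)) * exp(a*).
    Under theta = 1, exp(-lambda_t) is a martingale started at 1, so
        1 = P1(d=0) * exp(-a*) + (1 - P1(d=0)) * exp(-b*).
    Returns (P1(d=0), P0(d=1)).
    """
    p0_accept_h1 = (1.0 - math.exp(a_star)) / (math.exp(b_star) - math.exp(a_star))
    p1_accept_h0 = (1.0 - math.exp(-b_star)) / (math.exp(-a_star) - math.exp(-b_star))
    return p1_accept_h0, p0_accept_h1


if __name__ == "__main__":
    a_star, b_star = wiener_sprt_thresholds(0.05, 0.10)
    print(a_star, b_star)
    print(exit_error_probabilities(a_star, b_star))  # recovers the prescribed errors
```

For discrete-time observations the same thresholds give only approximate error probabilities, since the likelihood ratio may overshoot a boundary.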

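The optimal classical rule $\widetilde\delta = (\widetilde\tau, \widetilde d)$ (using the Neyman–Pearson lemma) is described in the following way:

$$ \widetilde\tau = t(\alpha, \beta), $$

$$ \widetilde d = \begin{cases} 1, & \lambda_{t(\alpha, \beta)} \ge h(\alpha, \beta), \\ 0, & \lambda_{t(\alpha, \beta)} < h(\alpha, \beta), \end{cases} $$

where

$$ t(\alpha, \beta) = (c_\alpha + c_\beta)^2, $$

$$ h(\alpha, \beta) = \frac{c_\beta^2 - c_\alpha^2}{2}, $$

and $c_\gamma$ is the solution of the equation

$$ \frac{1}{\sqrt{2\pi}} \int_{c_\gamma}^{\infty} e^{-x^2/2} \, dx = \gamma . $$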
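The fixed-duration rule can be evaluated with the standard normal quantile function. The sketch below assumes the forms $t(\alpha,\beta) = (c_\alpha + c_\beta)^2$ and $h(\alpha,\beta) = (c_\beta^2 - c_\alpha^2)/2$, with $c_\gamma$ the upper $\gamma$-quantile of the standard normal law, and the convention $\alpha = P_1(d = 0)$, $\beta = P_0(d = 1)$; the function names are hypothetical. It also verifies the error probabilities, using the fact that $\lambda_t = \xi_t - t/2$ is normal with variance $t$ and mean $-t/2$ under $\theta = 0$ and $+t/2$ under $\theta = 1$.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal distribution


def upper_quantile(gamma: float) -> float:
    """c_gamma such that P(Z >= c_gamma) = gamma for standard normal Z."""
    return -_STD_NORMAL.inv_cdf(gamma)  # symmetry avoids computing 1 - gamma


def fixed_sample_rule(alpha: float, beta: float):
    """Return (t, h): observation time and threshold for lambda_t (assumed forms)."""
    c_a, c_b = upper_quantile(alpha), upper_quantile(beta)
    t = (c_a + c_b) ** 2
    h = (c_b ** 2 - c_a ** 2) / 2.0
    return t, h


def error_probabilities(t: float, h: float):
    """Return (P1(lambda_t < h), P0(lambda_t >= h)) for the fixed-time rule."""
    s = math.sqrt(t)
    alpha = _STD_NORMAL.cdf((h - t / 2.0) / s)        # lambda_t ~ N(+t/2, t) under theta = 1
    beta = 1.0 - _STD_NORMAL.cdf((h + t / 2.0) / s)   # lambda_t ~ N(-t/2, t) under theta = 0
    return alpha, beta


if __name__ == "__main__":
    t, h = fixed_sample_rule(0.05, 0.10)
    print(t, h)
    print(error_probabilities(t, h))  # should reproduce the prescribed errors
```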

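Since $E_0 \tau^* = 2 \omega(\beta, \alpha)$, $E_1 \tau^* = 2 \omega(\alpha, \beta)$, where

$$ \omega(x, y) = (1 - x) \ln \frac{1 - x}{y} + x \ln \frac{x}{1 - y}, $$

one has

$$ \frac{E_0 \tau^*}{t(\alpha, \beta)} = \frac{2 \omega(\beta, \alpha)}{(c_\alpha + c_\beta)^2}, \qquad \frac{E_1 \tau^*}{t(\alpha, \beta)} = \frac{2 \omega(\alpha, \beta)}{(c_\alpha + c_\beta)^2}. $$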

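Numerical calculations show that for $\alpha, \beta \le 0.03$,

$$ E_0 \tau^* \le \frac{17}{30} \, t(\alpha, \beta), \qquad E_1 \tau^* \le \frac{17}{30} \, t(\alpha, \beta). $$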

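In other words, for the values of errors of the first and the second kinds considered, the optimal sequential method of decision between two hypotheses needs approximately half the observations of the optimal method with a fixed number of observations. Moreover, if $\alpha = \beta$, then

$$ \lim_{\alpha \downarrow 0} \frac{E_0 \tau^*}{t(\alpha, \alpha)} = \lim_{\alpha \downarrow 0} \frac{E_1 \tau^*}{t(\alpha, \alpha)} = \frac{1}{4}. $$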
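The comparison between the two methods can be explored numerically. The sketch below is illustrative only and assumes the closed forms $E_0\tau^* = 2\omega(\beta,\alpha)$, $E_1\tau^* = 2\omega(\alpha,\beta)$ with $\omega(x,y) = (1-x)\ln\frac{1-x}{y} + x\ln\frac{x}{1-y}$, and $t(\alpha,\beta) = (c_\alpha + c_\beta)^2$ with $c_\gamma$ the upper $\gamma$-quantile of the standard normal law; the function names are hypothetical.

```python
import math
from statistics import NormalDist


def omega(x: float, y: float) -> float:
    """omega(x, y) = (1 - x) ln((1 - x)/y) + x ln(x/(1 - y))."""
    return (1.0 - x) * math.log((1.0 - x) / y) + x * math.log(x / (1.0 - y))


def fixed_time(alpha: float, beta: float) -> float:
    """t(alpha, beta) = (c_alpha + c_beta)^2 for the fixed-duration rule."""
    nd = NormalDist()
    c_a = -nd.inv_cdf(alpha)  # upper alpha-quantile, by symmetry
    c_b = -nd.inv_cdf(beta)   # upper beta-quantile, by symmetry
    return (c_a + c_b) ** 2


def duration_ratios(alpha: float, beta: float):
    """Return (E0 tau* / t, E1 tau* / t): sequential mean duration over fixed duration."""
    t = fixed_time(alpha, beta)
    return 2.0 * omega(beta, alpha) / t, 2.0 * omega(alpha, beta) / t


if __name__ == "__main__":
    # Symmetric case alpha = beta: the ratio decreases slowly as alpha -> 0.
    for alpha in (0.03, 1e-3, 1e-6, 1e-12):
        r0, r1 = duration_ratios(alpha, alpha)
        print(f"alpha = beta = {alpha:g}: E0 ratio = {r0:.4f}, E1 ratio = {r1:.4f}")
```

In the symmetric case the two ratios coincide, and the printed values illustrate how slowly the ratio approaches its limit as the error probabilities tend to zero.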

References

| [W] | A. Wald, "Sequential analysis", Wiley (1947) MR0020764 Zbl 0041.26303 Zbl 0029.15805 |

| [Sh] | A.N. Shiryaev, "Statistical sequential analysis", Amer. Math. Soc. (1973) (Translated from Russian) MR0445744 MR0293789 Zbl 0267.62039 |

| [Sh2] | A.N. Shiryaev, "Optimal stopping rules", Springer (1978) (Translated from Russian) MR2374974 MR1317981 MR0445744 MR0293789 MR0283922 Zbl 0391.60002 |

Comments

References

| [Si] | D. Siegmund, "Sequential analysis", Springer (1985) MR0799155 Zbl 0573.62071 |

| [L] | R. Lerche, "Boundary crossing of Brownian motion: its relation to the law of the iterated logarithm and to sequential analysis", Springer (1986) Zbl 0604.62075 |

Sequential analysis. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Sequential_analysis&oldid=48674