Difference between revisions of "Probability theory"

(→References: Gnedenko: internal link) |

(refs format) |

||

| Line 204: | Line 204: | ||

====References==== | ====References==== | ||

| − | + | {| | |

| − | + | |valign="top"|{{Ref|Brnl}}|| J. Bernoulli, "Ars conjectandi" , Basle (1713) {{MR|2349550}} {{MR|2393219}} {{MR|0935946}} {{MR|0850992}} {{MR|0827905}} {{ZBL|0957.01032}} {{ZBL|0694.01020}} {{ZBL|30.0210.01}} | |

| − | + | |- | |

| − | + | |valign="top"|{{Ref|Mo}}|| A. de Moivre, "Doctrine of chances" , Paris (1756) {{MR|}} {{ZBL|0153.30801}} | |

| − | + | |- | |

| + | |valign="top"|{{Ref|La}}|| P.S. Laplace, "Théorie analytique des probabilités" , Paris (1812) {{MR|2274728}} {{MR|1400403}} {{MR|1400402}} {{ZBL|1047.01534}} {{ZBL|1047.01533}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Ch}}|| P.L. Chebyshev, "Oeuvres de P.L. Chebyshev", Chelsea, reprint (1961) | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Lia}}|| A.M. Liapounoff, "Nouvelle forme du théorème sur la limite de probabilité" , St. Petersburg (1901) | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Ma}}|| A.A. Markov, "Studies on a remarkable case of dependent trials" ''Izv. Akad. Nauk SSSR Ser. 6'' , '''1''' (1907) (In Russian) | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Ma2}}|| A.A. Markov, "Wahrscheinlichkeitsrechnung" , Teubner (1912) (Translated from Russian) {{MR|}} {{ZBL|39.0292.02}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Brnsh}}|| S.N. Bernshtein, "Probability theory" , Moscow-Leningrad (1946) (In Russian) {{MR|1868030}} {{ZBL|}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|G}}|| B.V. Gnedenko, [[Gnedenko, "A course in the theory of probability"|"The theory of probability"]], Chelsea, reprint (1962) (Translated from Russian) | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Bo}}|| A.A. Borovkov, "Wahrscheinlichkeitstheorie" , Birkhäuser (1976) (Translated from Russian) {{MR|0410818}} {{ZBL|}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Fe}}|| W. Feller, [[Feller, "An introduction to probability theory and its applications"|"An introduction to probability theory and its applications"]], '''1–2''' , Wiley (1957–1971) | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Po}}|| H. Poincaré, "Calcul des probabilités" , Gauthier-Villars (1912) {{MR|0924852}} {{MR|1190693}} {{ZBL|43.0308.04}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Mi}}|| R. von Mises, "Wahrscheinlichkeitsrechnung und ihre Anwendung in der Statistik und theoretischen Physik" , Wien (1931) {{MR|}} {{ZBL|0002.27701}} {{ZBL|57.0605.14}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|GK}}|| B.V. Gnedenko, A.N. Kolmogorov, "Probability theory" , ''Mathematics in the USSR during thirty years: 1917–1947'' , Moscow-Leningrad (1948) pp. 701–727 (In Russian) {{MR|1791851}} {{MR|1666260}} {{MR|1785765}} {{MR|1185485}} {{MR|0993962}} {{MR|0993956}} {{MR|0918766}} {{MR|0884522}} {{MR|1032004}} {{MR|0767301}} {{MR|0707275}} {{MR|0694048}} {{MR|0443006}} {{MR|0532056}} {{MR|0277014}} {{MR|0158418}} {{MR|0154305}} {{MR|0152411}} {{MR|0177425}} {{MR|0139186}} {{ZBL|05904374}} {{ZBL|1181.01046}} {{ZBL|0901.60001}} {{ZBL|0917.60002}} {{ZBL|0744.60001}} {{ZBL|0683.60064}} {{ZBL|0669.60082}} {{ZBL|0645.60001}} {{ZBL|0709.60001}} {{ZBL|0658.60001}} {{ZBL|0619.01014}} {{ZBL|0543.60001}} {{ZBL|0532.60001}} {{ZBL|0523.60001}} {{ZBL|0507.60024}} {{ZBL|0523.01001}} {{ZBL|0191.46702}} {{ZBL|0121.25101}} {{ZBL|0117.25104}} {{ZBL|0102.34402}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|K}}|| A.N. Kolmogorov, "Probability theory" , ''Mathematics in the USSR during 40 years: 1917–1957'' , '''1''' , Moscow (1959) (In Russian) {{MR|2740683}} {{MR|2068844}} {{MR|2101342}} {{MR|2014969}} {{MR|1751481}} {{MR|1542526}} {{MR|0993961}} {{MR|0861120}} {{MR|0779090}} {{MR|0735967}} {{MR|0353394}} {{MR|0314554}} {{MR|0242569}} {{MR|0243559}} {{MR|0158418}} {{MR|0152411}} {{MR|0131348}} {{MR|0043408}} {{ZBL|}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|K2}}|| A.N. Kolmogorov, "Foundations of the theory of probability" , Chelsea, reprint (1950) (Translated from Russian) {{MR|0032961}} {{ZBL|}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Pr}}|| Yu.V. [Yu.V. Prokhorov] Prohorov, "Probability theory" , Springer (1969) (Translated from Russian) {{MR|0251754}} {{ZBL|0939.00029}} | ||

| + | |} | ||

====Comments==== | ====Comments==== | ||

| Line 214: | Line 244: | ||

====References==== | ====References==== | ||

| − | + | {| | |

| + | |valign="top"|{{Ref|Bi}}|| P. Billingsley, "Probability and measure" , Wiley (1979) {{MR|0534323}} {{ZBL|0411.60001}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Br}}|| L.P. Breiman, "Probability" , Addison-Wesley (1968) {{MR|0229267}} {{ZBL|0174.48801}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|CT}}|| Y.S. Chow, H. Teicher, "Probability theory. Independence, interchangeability, martingales" , Springer (1978) {{MR|0513230}} {{ZBL|0399.60001}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Lo}}|| M. Loève, "Probability theory" , '''1–2''' , Springer (1977) {{MR|0651017}} {{MR|0651018}} {{ZBL|0359.60001}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Ca}}|| R. Carnap, "The logical foundations of probability" , Univ. Chicago Press (1962) {{MR|184839}} {{ZBL|0044.00107}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Fi}}|| B. de Finetti, "Theory of probability" , '''1–2''' , Wiley (1974) {{MR|}} {{ZBL|0328.60002}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|Ba}}|| H. Bauer, "Probability theory and elements of measure theory" , Holt, Rinehart & Winston (1972) pp. Chapt. 11 (Translated from German) {{MR|0636091}} {{ZBL|0243.60004}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|LS}}|| R.Sh. Liptser, A.N. Shiryaev, "Theory of martingales" , Kluwer (1989) (Translated from Russian) {{MR|1022664}} {{ZBL|0728.60048}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|LS2}}|| R.S. Liptser, A.N. Shiryaev, "Statistics of random processes" , '''1–2''' , Springer (1977–1978) (Translated from Russian) {{MR|1800858}} {{MR|1800857}} {{MR|0608221}} {{MR|0488267}} {{MR|0474486}} {{ZBL|1008.62073}} {{ZBL|1008.62072}} {{ZBL|0556.60003}} {{ZBL|0369.60001}} {{ZBL|0364.60004}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|PR}}|| V. Paulauskas, A. Račkauskas, "Approximation theory in the central limit theorem. Exact results in Banach spaces" , Kluwer (1989) (Translated from Russian) | ||

| + | |- | ||

| + | |valign="top"|{{Ref|GS}}|| I.I. Gihman, A.V. Skorohod, "The theory of stochastic processes" , '''I-III''' , Springer (1974–1979) (Translated from Russian) {{MR|0636254}} {{MR|0651015}} {{MR|0375463}} {{MR|0346882}} {{ZBL|0531.60002}} {{ZBL|0531.60001}} {{ZBL|0404.60061}} {{ZBL|0305.60027}} {{ZBL|0291.60019}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|D}}|| E.B. Dynkin, "Markov processes" , '''1–2''' , Springer (1965) (Translated from Russian) {{MR|0193671}} {{ZBL|0132.37901}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|W}}|| A.D. Wentzell, "Limit theorems on large deviations for Markov stochastic processes" , Kluwer (1990) (Translated from Russian) {{MR|1135113}} {{ZBL|0743.60029}} | ||

| + | |- | ||

| + | |valign="top"|{{Ref|S}}|| A.V. Skorohod, "Random processes with independent increments" , Kluwer (1991) (Translated from Russian) {{MR|1155400}} {{ZBL|}} | ||

| + | |} | ||

Revision as of 18:42, 27 May 2012

2020 Mathematics Subject Classification: Primary: 60-XX [MSN][ZBL]

A mathematical science in which the probabilities (cf. Probability) of certain random events are used to deduce the probabilities of other random events which are connected with the former events in some manner.

A statement to the effect that the probability of occurrence of a certain event is, say, 1/2, is not in itself valuable, since one is interested in reliable knowledge. Only results which state that the probability of occurrence of a certain event $A$ is quite near to one or (which is the same thing) that the probability of the event not occurring is very small, represent ultimately valuable information. In accordance with the principle of "discarding sufficiently small probabilities", such an event is considered to be practically certain. It will be shown below (cf. the section: Limit theorems) that conclusions of scientific and practical interest are usually based on the assumption that the occurrence or non-occurrence of an event $A$ depends on a large number of random factors, which are interconnected only to a minor extent (cf. Law of large numbers in connection with this subject). It may also be said, accordingly, that probability theory is the mathematical science of the laws governing the interaction of a large number of random factors.

The subject matter of probability theory.

In order to describe a regular connection between certain conditions $S$ and an event $A$, the occurrence or non-occurrence of which can be established exactly, one of the following two schemes is usually employed in science.

1) The occurrence of event $A$ follows each realization of the conditions $S$. This is the form of, say, all the laws of classical mechanics which state that under given initial conditions and forces acting on a body or a system of bodies, the motion will proceed in a uniquely determined manner.

2) Under the conditions $S$ the occurrence of event $A$ has a definite probability $\mathsf{P}(A \mid S)$ which is equal to $p$. For instance, the laws governing ionizing radiation say that, for each radioactive substance there is a definite probability that, in a given period of time, some number $N$ of the atoms of the substance will decay.

The frequency of occurrence of event $A$ in a given sequence of $n$ trials (i.e. $n$ repeated realizations of the conditions $S$) is the ratio $m/n$ of the number $m$ of trials in which $A$ has occurred to the total number of trials $n$. That there is in fact a definite probability $p$ for $A$ to occur, under the conditions $S$, is manifested by the fact that in almost all sufficiently long sequences of trials the frequency of occurrence of $A$ is approximately equal to $p$. Any mathematical model which is intended to be a schematic description of the connection between conditions $S$ and a random event $A$ usually also contains certain assumptions about the nature and the degree of dependence of the trials. After these additional assumptions (of which the most frequent one is mutual independence of the trials; see the section: Fundamental concepts in probability theory) have been made, it is possible to give a quantitative, more precise expression of the somewhat vague statement made above to the effect that the frequency is close to the probability.
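The closeness of the frequency $m/n$ to the probability $p$ can be illustrated numerically. The following is a minimal sketch and not part of the original article: it assumes mutually independent trials, uses a pseudo-random generator with a fixed seed in place of a physical experiment, and takes $p = 0.515$ purely as an illustrative value; the name `relative_frequency` is hypothetical.

```python
import random


def relative_frequency(p, n, seed=0):
    """Simulate n independent trials and return the frequency m/n of event A."""
    rng = random.Random(seed)  # fixed seed, so repeated runs give the same result
    m = sum(1 for _ in range(n) if rng.random() < p)  # A occurs with probability p
    return m / n


p = 0.515  # illustrative probability of A (an assumption of this sketch)
frequencies = {n: relative_frequency(p, n) for n in (100, 10_000, 200_000)}
for n, freq in frequencies.items():
    print(f"n = {n:>7}: m/n = {freq:.4f}, |m/n - p| = {abs(freq - p):.4f}")
```

For long sequences the printed deviation $|m/n - p|$ is typically small, which is the informal statement that the limit theorems make precise.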

Statistical relationships, i.e. relationships which may be described by a scheme of type 2) above, were first noted for games of chance such as throwing a die. Statistical relationships concerning births and deaths have been known for a very long time (e.g. the probability of a newborn (human) baby being a boy is 0.515). The end of the 19th century and the first half of the 20th century have witnessed the discovery of a large number of statistical laws in physics, chemistry, biology, and other sciences. It should be noted that statistical laws are also involved in schemes not directly related to the concept of randomness, e.g. in the distribution of digits in tables of functions, etc. (cf. Random and pseudo-random numbers). This fact is utilized, in particular, in the "simulation" of random phenomena (see Statistical experiments, method of).

That methods of probability theory can be used in studying the relationships prevailing in a large number of sciences apparently unrelated to each other is due to the fact that probabilities of occurrence of events invariably satisfy certain simple laws, which will be discussed below (cf. the section: Fundamental concepts in probability theory). The study of the properties of the probability of occurrence of events, based on these simple laws, forms the subject matter of probability theory.

Fundamental concepts in probability theory.

The fundamental concepts in probability theory, as a mathematical discipline, are most simply exemplified within the framework of so-called elementary probability theory. Each trial $T$ considered in elementary probability theory is such that it yields one and only one outcome or, as it is called, one of the elementary events $\omega_1, \dots, \omega_s$, which are supposed to be finite in number. With each outcome $\omega_k$ a non-negative number $p_k$ is associated, the probability of this outcome. The sum of the numbers $p_k$ must be one. Consider events $A$ which are characterized by the condition

"either $\omega_i$ or $\omega_j$, …, or $\omega_k$ occurs."

The outcomes $\omega_i, \omega_j, \dots, \omega_k$ are said to be favourable to $A$ and, by definition, one says that the probability $\mathsf{P}(A)$ of $A$ is equal to the sum of the probabilities of the outcomes favourable to this event:

$$\mathsf{P}(A) = p_i + p_j + \dots + p_k. \tag{1}$$

If there are $r$ outcomes favourable to $A$, then the special case $p_1 = \dots = p_s = 1/s$ yields the formula

$$\mathsf{P}(A) = \frac{r}{s}. \tag{2}$$

Formula (2) expresses the so-called classical concept of probability, according to which the probability of some event $A$ is equal to the ratio of the number $r$ of outcomes favourable to $A$ to the number $s$ of all "equally probable" outcomes. The computation of probabilities is thus reduced to counting the number of outcomes favourable to $A$ and often proves to be a difficult problem in combinatorics.

Example. Each one of the 36 possible outcomes of throwing a pair of dice may be denoted by $(i, j)$, where $i$ is the number of dots shown by the first die, while $j$ is the number of dots shown by the second. The event $A$, "the sum of the dots is 4", is favoured by three outcomes: $(1, 3)$, $(2, 2)$, $(3, 1)$. Thus, $\mathsf{P}(A) = 3/36 = 1/12$.
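The counting in this example can be checked by direct enumeration. The sketch below is an illustration added here, assuming 36 equally probable outcomes as in formula (2); the variable names are hypothetical.

```python
from fractions import Fraction
from itertools import product

# All 36 outcomes (i, j) of throwing a pair of dice.
outcomes = list(product(range(1, 7), repeat=2))

# Outcomes favourable to the event "the sum of the dots is 4".
favourable = [(i, j) for (i, j) in outcomes if i + j == 4]

# Classical probability: favourable outcomes divided by all outcomes.
prob_sum_is_4 = Fraction(len(favourable), len(outcomes))
print(favourable, prob_sum_is_4)
```

Exact rational arithmetic is used so that the result can be compared with $1/12$ without rounding.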

The problem of determining the numerical values of the probabilities $p_k$ in a given specific problem lies, strictly speaking, outside the scope of probability theory as a discipline of pure mathematics. In some cases these values are established as a result of processing the results of a large number of observations. In other cases it is possible to predict the probabilities of encountering given events in a given trial theoretically. Such a prediction is frequently based on an objective symmetry of the connections between the conditions under which the trial is conducted and the outcomes of the trials, and in such cases leads to a formula like (2). Let, for instance, the trial consist in throwing a die in the form of a cube made of a homogeneous material. One may then assume that each side of the die has a probability of 1/6 of "coming out". In this case the assumption that all outcomes are equally probable is confirmed by experiment. Examples of this kind in fact form the basis of the classical definition of a probability.

A more detailed and thorough explanation for the causes of equal probabilities of individual outcomes in some special cases may be given by the so-called method of arbitrary functions. The method is explained below by taking again dice throwing as an example. Let the conditions of the trials be such that accidental effects of air on the die are negligible. In such a case, if the initial position, the initial velocity and the mechanical properties of the die are known exactly, the motion of the die may be calculated by the methods of classical mechanics, and the result of the trial may be reliably predicted. In practice, the initial conditions can never be determined with absolute accuracy and even very small changes in the initial velocity will produce a different result, provided the period of time $t$ between the throw and the fall of the die is sufficiently long. It has been found that, under very general assumptions with respect to the probability distribution of the initial values (hence the name of the method), the probability of each one of the six possible outcomes tends to 1/6 as $t \to \infty$.

A second example consists of the shuffling of a pack of cards in order to ensure that all possible distributions are equally probable. Here, the transition from one distribution of the cards to the next as a result of two successive shuffles is usually random. The tendency to equi-probability is established by methods of the theory of Markov chains (cf. Markov chain).

Both these cases can be seen as part of general ergodic theory.
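The tendency to equi-probability in the card-shuffling example can be made concrete on a very small pack. The following sketch is an added illustration under assumptions that do not come from the article: the pack has three cards, and one "shuffle" leaves the pack unchanged with probability 1/2 and otherwise swaps a uniformly chosen pair of positions. The distribution over the six orderings is propagated exactly, one Markov-chain step per shuffle.

```python
from fractions import Fraction
from itertools import combinations, permutations

CARDS = (0, 1, 2)                                  # a hypothetical three-card pack
states = list(permutations(CARDS))                 # the 6 possible orderings
pairs = list(combinations(range(len(CARDS)), 2))   # positions that may be swapped


def shuffle_step(dist):
    """One step of the chain: stay put w.p. 1/2, else swap a random pair."""
    new = {s: Fraction(0) for s in states}
    for s, pr in dist.items():
        new[s] += pr / 2                           # pack left unchanged
        for a, b in pairs:
            t = list(s)
            t[a], t[b] = t[b], t[a]                # swap positions a and b
            new[tuple(t)] += pr / 2 / len(pairs)
    return new


# Start from a known ordering and apply 30 shuffles.
dist = {s: Fraction(0) for s in states}
dist[CARDS] = Fraction(1)
for _ in range(30):
    dist = shuffle_step(dist)

uniform = Fraction(1, len(states))
max_deviation = max(abs(pr - uniform) for pr in dist.values())
print(float(max_deviation))
```

Under this particular shuffle rule the largest deviation from $1/6$ shrinks geometrically with the number of shuffles; other shuffle rules lead to different rates.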

Given a certain number of events, two new events may be defined: their union (sum) and combination (product, intersection). The event  : "at least one of A1…Ar occurs" , is said to be the union of events

: "at least one of A1…Ar occurs" , is said to be the union of events  .

.

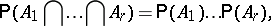

The event  : "A1… and Ar occur" , is said to be the combination or intersection of events

: "A1… and Ar occur" , is said to be the combination or intersection of events  .

.

The symbols for union and intersection of events are  and

and  , respectively. Thus:

, respectively. Thus:

|

Two events $A$ and $B$ are said to be mutually exclusive if their joint occurrence is impossible, i.e. if none of the possible results of a trial favours both $A$ and $B$. If the events $A_i$ are identified with the sets of their favourable outcomes, the union $A_1 \cup \dots \cup A_r$ and the intersection $A_1 \cap \dots \cap A_r$ of the events will be identical with the union and the intersection of the respective sets.

Two fundamental theorems in probability theory — theorems on addition and multiplication of probabilities — are connected with the operations just introduced.

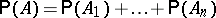

The theorem on addition of probabilities. If the events  are such that any two of them are mutually exclusive, the probability of their union is equal to the sum of their probabilities.

are such that any two of them are mutually exclusive, the probability of their union is equal to the sum of their probabilities.

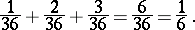

Thus, in the example mentioned above of throwing a pair of dice, the event $B$, "the sum of the dots is 4 or less", is the union of the three mutually exclusive events $A_2, A_3, A_4$ in which the sum of the dots is 2, 3 and 4, respectively. The probabilities of these events are 1/36, 2/36 and 3/36, respectively; in accordance with the addition theorem, $\mathsf{P}(B)$ is equal to

$$\frac{1}{36} + \frac{2}{36} + \frac{3}{36} = \frac{6}{36} = \frac{1}{6}.$$
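The addition theorem can be verified on this example by enumeration. The sketch below is an added illustration assuming 36 equally probable outcomes; the helper `prob` is a hypothetical name.

```python
from fractions import Fraction
from itertools import product

outcomes = list(product(range(1, 7), repeat=2))


def prob(event):
    """Classical probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for w in outcomes if event(w)), len(outcomes))


# Mutually exclusive events: the sum of the dots is exactly 2, 3 or 4.
parts = [prob(lambda w, s=s: w[0] + w[1] == s) for s in (2, 3, 4)]

# Their union: the sum of the dots is 4 or less.
union = prob(lambda w: w[0] + w[1] <= 4)

print(parts, sum(parts), union)
```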

The conditional probability of event $B$ occurring if condition $A$ is met is defined by the formula

$$\mathsf{P}(B \mid A) = \frac{\mathsf{P}(A \cap B)}{\mathsf{P}(A)},$$

which may be shown to be in complete agreement with the properties of the frequencies of occurrence. Events $A_1, \dots, A_r$ are said to be independent if the conditional probability of any one of the events occurring under the condition that some of the other events have also occurred is equal to its "unconditional" probability (see also Independence in probability theory).
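The definition of conditional probability and the notion of independence can be tried out on the pair of dice. The events chosen below are illustrative assumptions, and `prob` and `cond_prob` are hypothetical helper names; the sketch assumes $\mathsf{P}(A) > 0$.

```python
from fractions import Fraction
from itertools import product

outcomes = set(product(range(1, 7), repeat=2))


def prob(event):
    """Classical probability of an event given as a set of outcomes."""
    return Fraction(len(event), len(outcomes))


def cond_prob(b, a):
    """P(B | A) = P(A and B) / P(A), assuming P(A) > 0."""
    return prob(a & b) / prob(a)


first_even = {w for w in outcomes if w[0] % 2 == 0}  # first die shows an even number
sum_is_7 = {w for w in outcomes if sum(w) == 7}
sum_is_4 = {w for w in outcomes if sum(w) == 4}

# "Sum is 7" is independent of "first die even"; "sum is 4" is not.
independent_pair = cond_prob(sum_is_7, first_even) == prob(sum_is_7)
dependent_pair = cond_prob(sum_is_4, first_even) != prob(sum_is_4)
print(independent_pair, dependent_pair)
```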

The theorem on multiplication of probabilities. The probability of joint occurrence of events $A_1, \dots, A_r$ is equal to the probability of occurrence of event $A_1$, multiplied by the probability of occurrence of event $A_2$ on the condition that $A_1$ has in fact occurred, and so on, and finally multiplied by the probability of occurrence of event $A_r$ on the condition that the events $A_1, \dots, A_{r-1}$ have in fact occurred. If the events are independent, the multiplication theorem yields the formula

$$\mathsf{P}(A_1 \cap \dots \cap A_r) = \mathsf{P}(A_1) \cdots \mathsf{P}(A_r), \tag{3}$$

i.e. the probability of joint occurrence of independent events is equal to the product of the probabilities of these events. Formula (3) remains valid if some of the events are replaced in both its parts by the complementary events.

Example. Four shots are fired at a target, the probability of hitting the target being 0.2 with each shot. The hits scored in different shots are considered to be independent events. What will be the probability of hitting the target exactly three times?

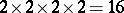

Each outcome of a trial can be symbolized by a sequence of four letters (e.g.  means that the first and fourth shots were hits, while the second and the third shots were misses). The total number of outcomes will be

means that the first and fourth shots were hits, while the second and the third shots were misses). The total number of outcomes will be  . Since the results of individual shots are assumed to be independent, the probability of the outcomes must be determined with the aid of formula (3) including the comment which accompanies it. Thus, the probability of the outcome

. Since the results of individual shots are assumed to be independent, the probability of the outcomes must be determined with the aid of formula (3) including the comment which accompanies it. Thus, the probability of the outcome  will be

will be

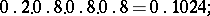

|

where  is the probability of miss in a single shot. The outcomes favouring the event "the target is hit three times" are

is the probability of miss in a single shot. The outcomes favouring the event "the target is hit three times" are  ,

,  ,

,  , and

, and  . The probabilities of all four outcomes are equal:

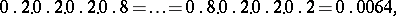

. The probabilities of all four outcomes are equal:

|

so that the probability of the event is

|
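The computation for the four shots can be reproduced by listing all 16 outcomes and applying formula (3) to each. This is an added sketch; the letters `h` (hit) and `m` (miss) and the function name `outcome_probability` are notation chosen here for illustration.

```python
from fractions import Fraction
from itertools import product

P_HIT = Fraction(1, 5)   # probability 0.2 of a hit in a single shot
P_MISS = 1 - P_HIT       # probability 0.8 of a miss


def outcome_probability(outcome):
    """Product of single-shot probabilities, valid for independent shots."""
    pr = Fraction(1)
    for shot in outcome:
        pr *= P_HIT if shot == "h" else P_MISS
    return pr


outcomes = list(product("hm", repeat=4))                  # 16 sequences
three_hits = [w for w in outcomes if w.count("h") == 3]   # exactly three hits
prob_three_hits = sum(outcome_probability(w) for w in three_hits)
print(len(outcomes), len(three_hits), float(prob_three_hits))
```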

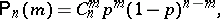

A generalization of the above reasoning leads to one of the fundamental formulas in probability theory: If the events  are independent and if the probability of each individual event occurring is

are independent and if the probability of each individual event occurring is  , then the probability of occurrence of exactly

, then the probability of occurrence of exactly  such events is

such events is

| (4) |

where  denotes the number of combinations of

denotes the number of combinations of  elements out of

elements out of  elements (see Binomial distribution). If

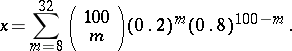

elements (see Binomial distribution). If  is large, computations according to formula (4) become laborious. In the above example, let the number of shots be 100; one has to find the probability

is large, computations according to formula (4) become laborious. In the above example, let the number of shots be 100; one has to find the probability  of the number of hits being between 8 and 32. The use of formula (4) and of the addition theorem yields an accurate, but unwieldy expression for the probability value sought, namely:

of the number of hits being between 8 and 32. The use of formula (4) and of the addition theorem yields an accurate, but unwieldy expression for the probability value sought, namely:

|

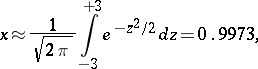

An approximate value of the probability  may be found by the use of the Laplace theorem:

may be found by the use of the Laplace theorem:

|

the error not exceeding 0.0009. This result shows that the occurrence of the event  is practically certain. This is a very simple, but typical, example of the use of limit theorems in probability theory.

is practically certain. This is a very simple, but typical, example of the use of limit theorems in probability theory.
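With a computer the "unwieldy" exact sum is easy to evaluate, so the quality of the normal approximation can be inspected directly. The sketch below is an added illustration: it assumes 100 independent shots with hit probability 0.2, takes the bounds 8 and 32 as inclusive, and standardizes them by the mean $np$ and the standard deviation $\sqrt{np(1-p)}$ without a continuity correction; the function names are hypothetical.

```python
from math import comb, erf, sqrt

n, p = 100, 0.2


def binomial_pmf(m):
    """Probability of exactly m hits in n shots, formula (4)."""
    return comb(n, m) * p**m * (1 - p) ** (n - m)


# Exact probability that the number of hits lies between 8 and 32 inclusive.
exact = sum(binomial_pmf(m) for m in range(8, 33))

# Normal approximation: standardize the bounds by mean and standard deviation.
mean = n * p
std = sqrt(n * p * (1 - p))
z_low, z_high = (8 - mean) / std, (32 - mean) / std


def normal_cdf(z):
    """Standard normal distribution function expressed through erf."""
    return 0.5 * (1 + erf(z / sqrt(2)))


approx = normal_cdf(z_high) - normal_cdf(z_low)
print(exact, approx, abs(exact - approx))
```

Here the standardized bounds are approximately $\pm 3$, which is where the limits of integration in the Laplace formula come from.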

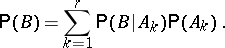

Another fundamental formula in elementary probability theory is the so-called formula of total probability: If events  are pairwise mutually exclusive and if their union is the sure event, the probability of any single event

are pairwise mutually exclusive and if their union is the sure event, the probability of any single event  is equal to the sum

is equal to the sum

|
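The formula of total probability can be illustrated on the pair of dice. In this added sketch the events $A_k$ are taken to be "the first die shows $k$" and $B$ is "the sum of the dots is 4"; this choice, and the variable names, are assumptions made for illustration.

```python
from fractions import Fraction

FACES = range(1, 7)

# P(A_k): the first die shows k.
p_first = {k: Fraction(1, 6) for k in FACES}

# P(B | A_k): given the first die shows k, the second must show 4 - k.
p_sum4_given_first = {
    k: Fraction(sum(1 for j in FACES if k + j == 4), 6) for k in FACES
}

# Total probability: sum over k of P(B | A_k) * P(A_k).
p_sum4 = sum(p_sum4_given_first[k] * p_first[k] for k in FACES)
print(p_sum4)
```

The result agrees with the value $1/12$ obtained earlier by counting favourable outcomes.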

The theorem on multiplication of probabilities is particularly useful when compound trials are considered. One says that a trial  is composed of trials

is composed of trials  if each outcome of

if each outcome of  is a combination of certain outcomes

is a combination of certain outcomes  of the respective trials

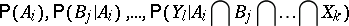

of the respective trials  . Frequently one is in the situation where the probabilities

. Frequently one is in the situation where the probabilities

| (5) |

are, for some reason, known. The data in (5) together with the multiplication theorem may then be used to determine the probabilities  for all outcomes

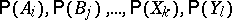

for all outcomes  of the compound trial, as well as the probabilities of all events connected with this trial (as was done in the example discussed above). Two types of compound trials are especially important in practice: A) the individual trials are independent, i.e. the probabilities in (5) are equal to the unconditional probabilities

of the compound trial, as well as the probabilities of all events connected with this trial (as was done in the example discussed above). Two types of compound trials are especially important in practice: A) the individual trials are independent, i.e. the probabilities in (5) are equal to the unconditional probabilities  ; B) the probabilities of the outcomes of a given trial are only affected by the outcomes of the immediately preceding trial, i.e. the probabilities in (5) are equal, respectively, to

; B) the probabilities of the outcomes of a given trial are only affected by the outcomes of the immediately preceding trial, i.e. the probabilities in (5) are equal, respectively, to  . One then says that the trials are connected in a Markov chain. The probabilities of all events connected with a compound trial are here fully determined by the initial probabilities

. One then says that the trials are connected in a Markov chain. The probabilities of all events connected with a compound trial are here fully determined by the initial probabilities  and by the intermediate probabilities

and by the intermediate probabilities  (cf. Markov process).

(cf. Markov process).
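For trials connected in a Markov chain, the probability of each outcome of the compound trial is the initial probability times the conditional probabilities of the successive steps. The following added sketch uses a hypothetical chain with two possible outcomes `a` and `b` per trial; all numerical values are invented for illustration.

```python
from fractions import Fraction
from itertools import product

# Hypothetical initial probabilities for the first trial.
initial = {"a": Fraction(1, 2), "b": Fraction(1, 2)}

# Hypothetical conditional probabilities of the next outcome given the previous one.
transition = {
    "a": {"a": Fraction(3, 4), "b": Fraction(1, 4)},
    "b": {"a": Fraction(1, 3), "b": Fraction(2, 3)},
}


def path_probability(path):
    """Multiplication theorem for a chain: initial term times one factor per step."""
    pr = initial[path[0]]
    for prev, cur in zip(path, path[1:]):
        pr *= transition[prev][cur]
    return pr


# All outcomes of a compound trial made of three successive trials.
paths = list(product("ab", repeat=3))
total = sum(path_probability(w) for w in paths)
print(path_probability(("a", "b", "b")), total)
```

That the probabilities of all outcomes of the compound trial add up to one is a useful sanity check on the initial and conditional probabilities.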

Random variables. If each outcome $E_r$ of a trial is put into correspondence with a number $x_r$, one says that a random variable $X$ has been specified. Among the numbers $x_1, \dots, x_s$ some may be equal; the set of distinct values of $x_r$, where $r = 1, \dots, s$, is the set of possible values of the random variable. The set of possible values of a random variable, together with their respective probabilities, is said to be the probability distribution of the random variable. Thus, in the example of throwing a pair of dice, to each outcome $(i, j)$ of the trial there corresponds the value of the random variable $X = i + j$, which is the sum of the dots on the two dice. The possible values are $2, 3, \dots, 12$ and their respective probabilities are $1/36, 2/36, \dots, 1/36$.
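The distribution of the sum of the dots on two dice can be tabulated by direct enumeration of the 36 equally probable outcomes. This is a small sketch; the names `counts` and `distribution` are illustrative.

```python
from collections import Counter
from fractions import Fraction

# Enumerate the 36 equally probable outcomes (i, j) of throwing two dice
counts = Counter(i + j for i in range(1, 7) for j in range(1, 7))
distribution = {value: Fraction(n, 36) for value, n in sorted(counts.items())}

for value, prob in distribution.items():
    print(value, prob)
```

The fractions are printed in lowest terms, so the probability of the value 3 appears as 1/18 rather than 2/36.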

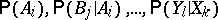

In a joint study of several random variables one introduces the concept of their joint distribution, which is defined by indicating the possible values of each one, and the probabilities of joint occurrence of the events

$$\{X_1 = x_1\}, \dots, \{X_n = x_n\}, \tag{6}$$

where $x_k$ is one of the possible values of the variable $X_k$. Random variables are said to be independent if the events in (6) are independent whatever the choice of the $x_k$. The joint distribution of random variables can be used to calculate the probability of any event defined by these variables, e.g. of the event

$$a < X_1 + \dots + X_n < b,$$

etc.
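For two random variables on a finite set of equally probable outcomes, independence can be checked directly from the joint distribution. The sketch below does this for the throw of two dice; the helper names `prob` and `independent` are hypothetical and serve only this illustration.

```python
from fractions import Fraction
from itertools import product

outcomes = list(product(range(1, 7), repeat=2))  # 36 equally probable pairs (i, j)
p = Fraction(1, len(outcomes))

def prob(event):
    """Probability of an event given as a predicate on an outcome (i, j)."""
    return sum(p for o in outcomes if event(o))

def independent(f, g):
    """Check P(f = x, g = y) == P(f = x) * P(g = y) for all possible values x, y."""
    xs = {f(o) for o in outcomes}
    ys = {g(o) for o in outcomes}
    return all(
        prob(lambda o: f(o) == x and g(o) == y)
        == prob(lambda o: f(o) == x) * prob(lambda o: g(o) == y)
        for x in xs for y in ys
    )

first = lambda o: o[0]          # dots on the first die
second = lambda o: o[1]         # dots on the second die
total = lambda o: o[0] + o[1]   # sum of the dots

print(independent(first, second))  # True
print(independent(first, total))   # False
```

The second check fails because, for example, a sum of 12 is impossible when the first die shows 1, although both events have positive probability.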

Often, instead of giving the distribution of a random variable completely, one uses a small collection of numerical characteristics. The ones most often used are the mathematical expectation and the dispersion (variance). (See also Moment; Semi-invariant.)
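For instance, for the sum $X$ of the dots on two fair dice both characteristics follow directly from the distribution. The symbols $\mathsf{E}$ (mathematical expectation) and $\mathsf{D}$ (variance) are notation assumed here for illustration:

$$\mathsf{E}X = \sum_{k=2}^{12} k\,P(X = k) = \frac{2 \cdot 1 + 3 \cdot 2 + \dots + 12 \cdot 1}{36} = 7, \qquad \mathsf{D}X = \mathsf{E}(X - \mathsf{E}X)^2 = \frac{35}{6}.$$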

The fundamental characteristics of a joint distribution of several random variables include — in addition to the mathematical expectations and the variances of these variables — also the correlation coefficients (cf. Correlation coefficient), etc. The meaning of these characteristics can be made clear, to a considerable extent, by limit theorems (see the section: Limit theorems).

The scheme of trials with a finite number of outcomes proves inadequate even in the simplest applications of probability theory. Thus, in the study of the random dispersion of the hitting sites of projectiles around the centre of a target, or in the study of random errors in the determination of some value, etc., it is not possible to limit the model to trials with a finite number of outcomes. Moreover, such outcomes may, in some cases, be expressed by a number or a set of numbers, while in other cases the outcome of a trial may be a function (e.g. a record of the variation of atmospheric pressure at a given location over a certain period of time), a set of functions, etc. It should be noted that many definitions and theorems given above, after suitable modifications, are also applicable in these more general cases, although the forms in which the probability distribution is presented are different (cf. Density of a probability distribution; Probability distribution). Here, the classical "equal probability of each outcome" is replaced by a uniform distribution of the objects under consideration in some area (this is exactly what is meant when speaking of a point randomly selected in a given area, a randomly selected tangent to some figure, etc.).
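A point "randomly selected in a given area" can be imitated numerically. The sketch below assumes a point uniformly distributed in the unit square and estimates the probability that it falls in the quarter-disc $x^2 + y^2 \leq 1$, which under the uniform distribution equals the ratio of the areas, $\pi/4$. The function name, sample size and seed are illustrative choices.

```python
import random

def estimate_quarter_disc(n_points, seed=0):
    """Fraction of uniform points in the unit square that satisfy x^2 + y^2 <= 1."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_points):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            hits += 1
    return hits / n_points

print(estimate_quarter_disc(100_000))  # close to pi / 4
```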

Major changes are introduced in the definition of a probability which, in the elementary case, is given by formula (2). In the more general schemes now discussed, events are unions of infinitely many elementary events, each of which may have probability zero. Thus, the property described by the addition theorem is no longer a consequence of the definition of probability, but is part of it.

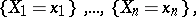

The logical scheme of constructing the fundamentals of probability theory which is most often employed was developed in 1933 by A.N. Kolmogorov. The fundamental characteristics of this scheme are the following. In studying a real problem by the methods of probability theory, the first step is to isolate a set $\Omega$ of elements $\omega$, called elementary events. Any event can be fully described by the set of elementary events favourable to it, and is therefore considered as some set of elementary events. To some events $A$ are assigned certain numbers $P(A)$, which are called their probabilities and which satisfy the following conditions:

1) $P(A) \geq 0$;

2) $P(\Omega) = 1$;

3) if the events $A_1, \dots, A_n$ are pairwise mutually exclusive, and if $A$ is their union, then

$$P(A) = P(A_1) + \dots + P(A_n)$$

(additivity of probabilities).
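On a finite set of elementary events the three conditions can be verified mechanically. The following sketch assumes a hypothetical three-element set with illustrative weights and takes all subsets as events; the names `omega`, `weight`, `events` and `P` are not taken from the article.

```python
from fractions import Fraction
from itertools import combinations

omega = ("a", "b", "c")  # hypothetical elementary events
weight = {"a": Fraction(1, 2), "b": Fraction(1, 3), "c": Fraction(1, 6)}

# All subsets of omega serve as the events
events = [frozenset(c) for r in range(len(omega) + 1) for c in combinations(omega, r)]

def P(event):
    """Probability of an event: the sum of the weights of its elementary events."""
    return sum((weight[w] for w in event), Fraction(0))

assert all(P(A) >= 0 for A in events)   # condition 1: non-negativity
assert P(frozenset(omega)) == 1         # condition 2: normalization
assert all(                             # condition 3, checked for pairs of exclusive events
    P(A | B) == P(A) + P(B)
    for A in events for B in events if not (A & B)
)
```

Additivity for any finite number of pairwise exclusive events follows from the two-event case by induction.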

In order to construct a mathematically rigorous theory, the domain of definition of $P$ must be a $\sigma$-algebra, and condition 3) must also be met for an infinite sequence of events which are mutually exclusive (countable additivity of probabilities). Non-negativity and countable additivity are fundamental properties of measures. Thus, probability theory may be formally regarded as a part of measure theory. The fundamental concepts of probability theory are then viewed in a new light: random variables become measurable functions, their mathematical expectations become the abstract integrals of Lebesgue, etc. However, the main problems of probability theory and of measure theory are different. In probability theory, the basic, specific concept is that of independence of events, trials and random variables. Moreover, probability theory comprises a thorough study of subjects such as probability distributions, conditional mathematical expectations, etc.

The following comments may be made on the scheme described above. In accordance with the scheme, each probability model is based on a probability space, which is a triplet $(\Omega, \mathcal{F}, P)$, where $\Omega$ is a set of elementary events, $\mathcal{F}$ is a $\sigma$-algebra of subsets of $\Omega$ and $P$ is a probability distribution (a countably-additive normalized measure) on $\mathcal{F}$. Two achievements of this scheme are the definition of probabilities in infinite-dimensional spaces (in particular, in spaces connected with infinite sequences of trials and stochastic processes), and the general definition of conditional probabilities and conditional mathematical expectations (with respect to a given random variable, etc.).

Subsequent development of probability theory showed that the above definition of a probability space can be expediently narrowed. These developments have led to concepts such as perfect distributions and probability spaces, Blackwell spaces, Radon probability measures on topological (linear) spaces, etc. (see Probability distribution).

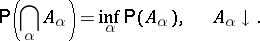

There are also other approaches to the fundamental concepts of probability theory, such as axiomatization, the principal object of which is a normalized Boolean algebra of events. Here, the principal advantage (provided that the algebra being considered is complete in the metric sense) consists of the fact that for any directed system of events the following relations are true:

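$$P\Bigl(\bigcup_\alpha A_\alpha\Bigr) = \sup_\alpha P(A_\alpha), \quad A_\alpha \uparrow,$$

$$P\Bigl(\bigcap_\alpha A_\alpha\Bigr) = \inf_\alpha P(A_\alpha), \quad A_\alpha \downarrow.$$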

It is possible to axiomatize the concept of a random variable as an element of some commutative algebra with a positive linear functional defined on it (the analogue of the mathematical expectation). This is the starting point for non-commutative and quantum probability.

Limit theorems.

In a formal exposition of probability theory limit theorems appear as a kind of superstructure over its elementary sections, in which all problems are of a finite, purely arithmetical nature. However, the cognitive value of probability theory can only be revealed by these limit theorems. Thus, it is shown by the Bernoulli theorem that the frequency of occurrence of a given event in independent trials is usually close to its probability, while the Laplace theorem yields the probabilities of deviations of this frequency from its limiting value. In a similar manner, the meaning of characteristics of a random variable such as its mathematical expectation and variance is explained by the law of large numbers and the central limit theorem (see also Limit theorems in probability theory).

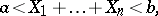

Let

| (7) |

be independent random variables with the same probability distribution, with  ,

,  , and let

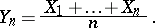

, and let  be the arithmetical average of the first

be the arithmetical average of the first  variables of the sequence (7):

variables of the sequence (7):

|

In accordance with the law of large numbers, for any $\epsilon > 0$ the probability of the inequality $|Y_n - a| < \epsilon$ tends to one as $n \to \infty$, so that, as a rule, the value of $Y_n$ is close to $a$. This result is rendered more precise by the central limit theorem, according to which the deviations of $Y_n$ from $a$ are approximately normally distributed, with mathematical expectation 0 and variance $\sigma^2 / n$. Thus, in order to calculate (to a first approximation) the probability of some deviation of $Y_n$ from $a$ for large $n$, there is no need to know the distribution of the variables $X_n$ in all details; knowledge of their variance is sufficient. If a higher accuracy of approximation is required, moments of higher order must also be used.
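Both statements can be observed in a small simulation. The sketch below assumes variables uniformly distributed on (0, 1), for which the expectation is 1/2 and the variance is 1/12; the function name, sample sizes and seed are illustrative choices.

```python
import random
import statistics

def sample_means(n, repetitions, seed=0):
    """Return `repetitions` independent averages of n uniform(0, 1) variables."""
    rng = random.Random(seed)
    return [
        sum(rng.random() for _ in range(n)) / n
        for _ in range(repetitions)
    ]

a, sigma2 = 0.5, 1.0 / 12.0   # expectation and variance of uniform(0, 1)
n = 100
means = sample_means(n, repetitions=5_000)

print(statistics.fmean(means))       # close to a (law of large numbers)
print(statistics.pvariance(means))   # close to sigma2 / n

# Share of averages within two standard deviations of a;
# for a normal law this share is about 0.95 (central limit theorem).
within = sum(abs(y - a) <= 2 * (sigma2 / n) ** 0.5 for y in means) / len(means)
print(within)
```

Only the expectation and the variance of the summands enter the comparison, in line with the remark that the detailed form of their distribution is not needed to a first approximation.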

The above statements, with suitable modifications, may be extended to random vectors (in finite-dimensional and in some infinite-dimensional spaces). The independence conditions may be replaced by conditions of a "weak" (in some sense) dependence of the $X_n$. Limit theorems for distributions on groups, for distributions of values of arithmetic functions, etc., are also known.

In applications, in particular in mathematical statistics and statistical physics, it may be necessary to approximate small probabilities (i.e. probabilities of events of the type $|Y_n - a| > \epsilon$) with a high relative accuracy. This involves major corrections to the normal approximation (cf. Probability of large deviations).

It was noted in the nineteen twenties that quite natural non-normal limit distributions may appear even in schemes of sequences of identically distributed and independent random variables. For instance, let $X_1$ be the time which elapses until some randomly varying variable has returned to its initial location, let $X_2$ be the time between the first and the second such returns, etc. Then, under very general conditions, the distribution of the sum $X_1 + \dots + X_n$ (i.e. the time elapsing prior to the $n$-th return) will, after multiplication by $n^{-1/\alpha}$ (where $\alpha$ is a constant smaller than one), converge to some limit distribution. Thus, the time prior to the $n$-th return increases, roughly speaking, in proportion to $n^{1/\alpha}$, i.e. at a faster rate than $n$ (if the law of large numbers were applicable, it would be of order $n$). This is seen in the case of a Bernoulli random walk (in which another paradoxical law, the arcsine law, also appears).
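For the symmetric Bernoulli random walk the reason can be made visible by an exact calculation. The sketch below uses the classical formula for the probability that the first return to the starting point occurs at step $2k$, namely $\binom{2k}{k} / ((2k - 1)\,4^k)$, and sums the contributions to the mean return time; the function names are illustrative.

```python
from fractions import Fraction
from math import comb

def first_return_probability(k):
    """P(first return of the symmetric walk to its start occurs at step 2k)."""
    return Fraction(comb(2 * k, k), (2 * k - 1) * 4 ** k)

def truncated_mean(K):
    """Contribution of return times up to 2K to the mean return time."""
    return sum(2 * k * first_return_probability(k) for k in range(1, K + 1))

for K in (10, 100, 1000):
    print(K, float(truncated_mean(K)))
```

The partial sums keep growing (roughly like the square root of the cut-off), so the mean return time is infinite and the law of large numbers in its usual form does not apply to the sums of return times.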

The principal method of proof of limit theorems is the method of characteristic functions (cf. Characteristic function) (and the related methods of Laplace transforms and of generating functions). In a number of cases it becomes necessary to invoke the theory of functions of a complex variable.

The mechanism of the existence of most limit relationships can be completely understood only in the context of the theory of stochastic processes.

Stochastic processes.

During the past few decades the need to consider stochastic processes (cf. Stochastic process), i.e. processes with a given probability of their proceeding in a certain manner, arose in certain physical and chemical investigations, alongside the study of one-dimensional and higher-dimensional random variables. The coordinate of a particle executing a Brownian motion may serve as an example of a stochastic process. In probability theory a stochastic process is usually regarded as a one-parameter family of random variables $X(t)$. In most applications the parameter $t$ is time, but it may also be an arbitrary variable, and in such cases it is usual to speak of a random function (or, if $t$ is a point in space, of a random field). If the parameter $t$ runs through integer values, the random function is said to be a random sequence (or a time series). While a random variable may be characterized by a distribution law, a stochastic process may be characterized by the totality of joint distribution laws for $X(t_1), \dots, X(t_n)$ for all possible moments of time $t_1, \dots, t_n$ and any $n > 0$ (the so-called finite-dimensional distributions). The most interesting concrete results in the theory of stochastic processes were obtained in two fields, Markov processes and stationary stochastic processes (cf. Markov process; Stationary stochastic process); the interest in martingales (cf. Martingale) is now also strongly increasing.

Chronologically, Markov processes were the first to be studied. A stochastic process $X(t)$ is said to be a Markov process if, for any two moments of time $t_0$ and $t_1$ ($t_0 < t_1$), the conditional probability distribution of $X(t_1)$ depends, provided all values of $X(t)$ for $t \leq t_0$ are given, only on $X(t_0)$. For this reason Markov processes are sometimes referred to as processes without after-effect. Markov processes are a natural extension of the deterministic processes studied in classical physics. In deterministic processes the state of the system at the moment of time $t_0$ uniquely determines the course of the process in the future; in Markov processes the state of the system at the moment of time $t_0$ uniquely determines the probability distribution of the course of the process at $t > t_0$, and this distribution cannot be altered by any information on the course of the process prior to the moment of time $t_0$.

Just as the study of continuous deterministic processes is reduced to differential equations involving functions which describe the state of the system, the study of continuous Markov processes can, to a large extent, be reduced to differential or differential-integral equations with respect to the distribution of the probabilities of the process.
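A minimal sketch of such a reduction, assuming a hypothetical process with two states, 0 and 1, and constant transition rates `a` (from 0 to 1) and `b` (from 1 to 0): the probability $p_0(t)$ of being in state 0 then satisfies $dp_0/dt = -a\,p_0 + b\,(1 - p_0)$. The code integrates this equation by the Euler method and compares the result with the closed-form solution; the rates and step count are illustrative.

```python
import math

def forward_euler(p0, a, b, t_end, steps):
    """Integrate dp0/dt = -a*p0 + b*(1 - p0) for a two-state process."""
    dt = t_end / steps
    for _ in range(steps):
        p0 += dt * (-a * p0 + b * (1.0 - p0))
    return p0

def exact(p0, a, b, t):
    """Closed-form solution of the same equation."""
    stationary = b / (a + b)
    return stationary + (p0 - stationary) * math.exp(-(a + b) * t)

a, b = 2.0, 1.0  # hypothetical transition rates 0 -> 1 and 1 -> 0
print(forward_euler(1.0, a, b, t_end=1.0, steps=10_000))
print(exact(1.0, a, b, 1.0))
```

As $t$ grows, both values approach the stationary probability $b / (a + b)$ regardless of the initial state.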

Another major subject in the field of stochastic processes is the theory of stationary stochastic processes. The stationary nature of a process, i.e. the fact that its probability relations remain unchanged with time, imposes major restrictions on the process and makes it possible to arrive at several important deductions based on this premise.

A major part of the theory is based only on the assumption of stationarity in a wide sense, viz. that the mathematical expectations $\mathsf{E} X(t)$ and $\mathsf{E} X(t) X(t + \tau)$ are independent of $t$. This assumption leads to the so-called spectral decomposition:

$$X(t) = \int_{-\infty}^{\infty} e^{i \lambda t} \, dz(\lambda),$$

where $z(\lambda)$ is a random function with uncorrelated increments. Methods of best (in the mean square) linear interpolation, extrapolation and filtering have been developed for stationary processes.
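The simplest instance is a process with a discrete spectrum, given here as an illustrative special case under stated assumptions. Let $A_k, B_k$ be pairwise uncorrelated random variables with $\mathsf{E} A_k = \mathsf{E} B_k = 0$ and $\mathsf{E} A_k^2 = \mathsf{E} B_k^2 = \sigma_k^2$ (the symbols $A_k$, $B_k$, $\lambda_k$, $\sigma_k$ are notation introduced only for this example), and put

$$X(t) = \sum_k \bigl( A_k \cos \lambda_k t + B_k \sin \lambda_k t \bigr).$$

Then $\mathsf{E} X(t) = 0$ and

$$\mathsf{E} X(t) X(t + \tau) = \sum_k \sigma_k^2 \bigl( \cos \lambda_k t \cos \lambda_k (t + \tau) + \sin \lambda_k t \sin \lambda_k (t + \tau) \bigr) = \sum_k \sigma_k^2 \cos \lambda_k \tau,$$

which does not depend on $t$, so the process is stationary in the wide sense.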

Recently a rather large class of processes, the so-called semi-martingales, which serves to solve problems of optimal non-linear filtering, interpolation and extrapolation, has been isolated (cf. Stochastic processes, prediction of; Stochastic processes, filtering of; Stochastic processes, interpolation of). A substantial part of the relevant analytical apparatus is provided by stochastic differential equations, stochastic integrals and martingales. A distinguishing feature of a martingale $X(t)$ is the fact that the conditional mathematical expectation of $X(t)$ is $X(s)$, given the values of $X(u)$ for $u \leq s$, $s < t$.
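The defining property of a martingale can be checked by exhaustive enumeration for the symmetric random walk, whose steps are independent and equal to +1 or -1 with probability 1/2 each. This is a sketch for illustration; the function name and the chosen moments `s` and `t` are assumptions.

```python
from fractions import Fraction
from itertools import product

def conditional_expectation(prefix, t):
    """E[S_t | first len(prefix) steps] for a walk with independent +1/-1 steps."""
    remaining = t - len(prefix)
    continuations = list(product((-1, 1), repeat=remaining))
    total = sum(sum(prefix) + sum(c) for c in continuations)
    return Fraction(total, len(continuations))

s, t = 3, 6
is_martingale = all(
    conditional_expectation(prefix, t) == sum(prefix)
    for prefix in product((-1, 1), repeat=s)
)
print(is_martingale)  # True
```

For every possible history up to the moment `s`, the expected position at the later moment `t` equals the position already reached at `s`.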

The theory of stochastic processes is closely connected with the classical problems on limit theorems for sums of random variables. Distributions which appear as limit distributions in the study of sums of random variables become exact distributions of appropriate characteristics in the theory of stochastic processes. This fact makes it possible to demonstrate many limit theorems with the aid of these associated stochastic processes.

One may finally note that the logically unobjectionable definition of the concepts connected with stochastic processes within the framework of the axiomatics discussed above has always presented and still presents a large number of difficulties of measure-theoretic nature. These are connected, for example, with the definition of probabilistic continuity, differentiability, etc., of stochastic processes (cf. Separable process). This is why monographs on the theory of stochastic processes devote about half their space to the analysis of the development of measure-theoretic constructions.

See also the references to entries on individual subjects of probability theory.

References

| [Brnl] | J. Bernoulli, "Ars conjectandi" , Basle (1713) MR2349550 MR2393219 MR0935946 MR0850992 MR0827905 Zbl 0957.01032 Zbl 0694.01020 Zbl 30.0210.01 |

| [Mo] | A. de Moivre, "Doctrine of chances" , Paris (1756) Zbl 0153.30801 |

| [La] | P.S. Laplace, "Théorie analytique des probabilités" , Paris (1812) MR2274728 MR1400403 MR1400402 Zbl 1047.01534 Zbl 1047.01533 |

| [Ch] | P.L. Chebyshev, "Oeuvres de P.L. Chebyshev", Chelsea, reprint (1961) |

| [Lia] | A.M. Liapounoff, "Nouvelle forme du théorème sur la limite de probabilité" , St. Petersburg (1901) |

| [Ma] | A.A. Markov, "Studies on a remarkable case of dependent trials" Izv. Akad. Nauk SSSR Ser. 6 , 1 (1907) (In Russian) |

| [Ma2] | A.A. Markov, "Wahrscheinlichkeitsrechnung" , Teubner (1912) (Translated from Russian) Zbl 39.0292.02 |

| [Brnsh] | S.N. Bernshtein, "Probability theory" , Moscow-Leningrad (1946) (In Russian) MR1868030 |

| [G] | B.V. Gnedenko, "The theory of probability", Chelsea, reprint (1962) (Translated from Russian) |

| [Bo] | A.A. Borovkov, "Wahrscheinlichkeitstheorie" , Birkhäuser (1976) (Translated from Russian) MR0410818 |

| [Fe] | W. Feller, "An introduction to probability theory and its applications", 1–2 , Wiley (1957–1971) |

| [Po] | H. Poincaré, "Calcul des probabilités" , Gauthier-Villars (1912) MR0924852 MR1190693 Zbl 43.0308.04 |

| [Mi] | R. von Mises, "Wahrscheinlichkeitsrechnung und ihre Anwendung in der Statistik und theoretischen Physik" , Wien (1931) Zbl 0002.27701 Zbl 57.0605.14 |

| [GK] | B.V. Gnedenko, A.N. Kolmogorov, "Probability theory" , Mathematics in the USSR during thirty years: 1917–1947 , Moscow-Leningrad (1948) pp. 701–727 (In Russian) MR1791851 MR1666260 MR1785765 MR1185485 MR0993962 MR0993956 MR0918766 MR0884522 MR1032004 MR0767301 MR0707275 MR0694048 MR0443006 MR0532056 MR0277014 MR0158418 MR0154305 MR0152411 MR0177425 MR0139186 Zbl 05904374 Zbl 1181.01046 Zbl 0901.60001 Zbl 0917.60002 Zbl 0744.60001 Zbl 0683.60064 Zbl 0669.60082 Zbl 0645.60001 Zbl 0709.60001 Zbl 0658.60001 Zbl 0619.01014 Zbl 0543.60001 Zbl 0532.60001 Zbl 0523.60001 Zbl 0507.60024 Zbl 0523.01001 Zbl 0191.46702 Zbl 0121.25101 Zbl 0117.25104 Zbl 0102.34402 |

| [K] | A.N. Kolmogorov, "Probability theory" , Mathematics in the USSR during 40 years: 1917–1957 , 1 , Moscow (1959) (In Russian) MR2740683 MR2068844 MR2101342 MR2014969 MR1751481 MR1542526 MR0993961 MR0861120 MR0779090 MR0735967 MR0353394 MR0314554 MR0242569 MR0243559 MR0158418 MR0152411 MR0131348 MR0043408 |

| [K2] | A.N. Kolmogorov, "Foundations of the theory of probability" , Chelsea, reprint (1950) (Translated from Russian) MR0032961 |

| [Pr] | Yu.V. Prohorov [Yu.V. Prokhorov], "Probability theory" , Springer (1969) (Translated from Russian) MR0251754 Zbl 0939.00029 |

Comments

References

| [Bi] | P. Billingsley, "Probability and measure" , Wiley (1979) MR0534323 Zbl 0411.60001 |

| [Br] | L.P. Breiman, "Probability" , Addison-Wesley (1968) MR0229267 Zbl 0174.48801 |

| [CT] | Y.S. Chow, H. Teicher, "Probability theory. Independence, interchangeability, martingales" , Springer (1978) MR0513230 Zbl 0399.60001 |

| [Lo] | M. Loève, "Probability theory" , 1–2 , Springer (1977) MR0651017 MR0651018 Zbl 0359.60001 |

| [Ca] | R. Carnap, "The logical foundations of probability" , Univ. Chicago Press (1962) MR184839 Zbl 0044.00107 |

| [Fi] | B. de Finetti, "Theory of probability" , 1–2 , Wiley (1974) Zbl 0328.60002 |

| [Ba] | H. Bauer, "Probability theory and elements of measure theory" , Holt, Rinehart & Winston (1972) pp. Chapt. 11 (Translated from German) MR0636091 Zbl 0243.60004 |

| [LS] | R.Sh. Liptser, A.N. Shiryaev, "Theory of martingales" , Kluwer (1989) (Translated from Russian) MR1022664 Zbl 0728.60048 |

| [LS2] | R.S. Liptser, A.N. Shiryaev, "Statistics of random processes" , 1–2 , Springer (1977–1978) (Translated from Russian) MR1800858 MR1800857 MR0608221 MR0488267 MR0474486 Zbl 1008.62073 Zbl 1008.62072 Zbl 0556.60003 Zbl 0369.60001 Zbl 0364.60004 |

| [PR] | V. Paulauskas, A. Račkauskas, "Approximation theory in the central limit theorem. Exact results in Banach spaces" , Kluwer (1989) (Translated from Russian) |

| [GS] | I.I. Gihman, A.V. Skorohod, "The theory of stochastic processes" , I-III , Springer (1974–1979) (Translated from Russian) MR0636254 MR0651015 MR0375463 MR0346882 Zbl 0531.60002 Zbl 0531.60001 Zbl 0404.60061 Zbl 0305.60027 Zbl 0291.60019 |

| [D] | E.B. Dynkin, "Markov processes" , 1–2 , Springer (1965) (Translated from Russian) MR0193671 Zbl 0132.37901 |

| [W] | A.D. Wentzell, "Limit theorems on large deviations for Markov stochastic processes" , Kluwer (1990) (Translated from Russian) MR1135113 Zbl 0743.60029 |

| [S] | A.V. Skorohod, "Random processes with independent increments" , Kluwer (1991) (Translated from Russian) MR1155400 |

Probability theory. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Probability_theory&oldid=25811