Absorbing state

Revision as of 07:09, 7 January 2012

of a Markov chain $\xi(t)$

2010 Mathematics Subject Classification: 60J10

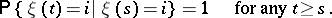

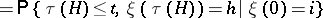

A state  such that

such that

|
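Consider a homogeneous chain with discrete time, finitely many states and one-step transition probabilities $p_{ij}$. For such a chain the condition reduces to $p_{ii} = 1$, because by induction the chain then stays in $i$ at every later step. The following is a minimal sketch under that assumption. The function `absorbing_states` and the example matrix are hypothetical illustrations, and the matrix is stored as a list of rows.

```python
def absorbing_states(P):
    """Return the indices i with p_ii = 1 in a row-stochastic matrix P.

    Exact comparison is used, which assumes the entries are stored exactly
    (e.g. literal 0, 0.5, 1); computed floats would need a tolerance.
    """
    return [i for i, row in enumerate(P) if row[i] == 1]


# Hypothetical example: random walk on {0, 1, 2, 3} in which 0 and 3 are absorbing.
P = [
    [1, 0, 0, 0],
    [0.5, 0, 0.5, 0],
    [0, 0.5, 0, 0.5],
    [0, 0, 0, 1],
]
print(absorbing_states(P))  # [0, 3]
```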

An example of a Markov chain with absorbing state $0$ is a branching process: once the population has died out, it remains extinct.
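In a branching (Galton–Watson) process the state $0$ is absorbing for a simple reason: a generation with no individuals has no offspring. The simulation below is a minimal sketch that makes this visible. The offspring law (0, 1 or 2 children with probabilities 1/4, 1/2, 1/4) and the names `next_generation` and `simulate` are illustrative assumptions.

```python
import random

# Hypothetical offspring law: each individual has 0, 1 or 2 children
# with probabilities 1/4, 1/2, 1/4.
OFFSPRING = [0, 1, 2]
WEIGHTS = [0.25, 0.5, 0.25]


def next_generation(z, rng):
    """Total number of children of z independently reproducing individuals.

    No special case is needed for z = 0: an empty generation draws no
    children at all, which is exactly why 0 is an absorbing state.
    """
    return sum(rng.choices(OFFSPRING, weights=WEIGHTS, k=z))


def simulate(z0, steps, seed=0):
    """Generation sizes Z_0, ..., Z_steps starting from Z_0 = z0."""
    rng = random.Random(seed)
    path = [z0]
    for _ in range(steps):
        path.append(next_generation(path[-1], rng))
    return path


path = simulate(z0=3, steps=40, seed=1)
print(path)
```

Whatever the seed, every $0$ in the printed path is followed only by $0$s.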

Introducing additional absorbing states is a convenient technique for studying those properties of the trajectories of a Markov chain that are related to hitting a given set.

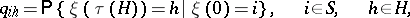

Example. Consider the set  of states of a homogeneous Markov chain

of states of a homogeneous Markov chain  with discrete time and transition probabilities

with discrete time and transition probabilities

|

in which a subset  is distinguished and suppose one has to find the probabilities

is distinguished and suppose one has to find the probabilities

|
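The probabilities $q_{ih}$ (the chance that the chain, started at $i$, first enters the distinguished set at the state $h$) can be sanity-checked by direct simulation. Run the chain from $i$ until it first lands in $H$, then record where it landed. This is a minimal sketch. It assumes a finite state space given as a list of transition-probability rows, and that $H$ is reached with probability 1. The function `estimate_q` and the example chain (a fair random walk on $\{0, \dots, 4\}$ with $H = \{0, 4\}$) are hypothetical.

```python
import random

# Hypothetical example: fair random walk on S = {0, 1, 2, 3, 4}. In the
# original chain the end points are NOT absorbing (they step back inwards).
P = [
    [0.0, 1.0, 0.0, 0.0, 0.0],
    [0.5, 0.0, 0.5, 0.0, 0.0],
    [0.0, 0.5, 0.0, 0.5, 0.0],
    [0.0, 0.0, 0.5, 0.0, 0.5],
    [0.0, 0.0, 0.0, 1.0, 0.0],
]
H = {0, 4}


def estimate_q(P, H, i, h, trials=20_000, seed=0):
    """Monte Carlo estimate of q_ih, the probability of first entering H at h from i.

    Assumes H is reached with probability 1; otherwise the inner loop may not stop.
    """
    rng = random.Random(seed)
    states = range(len(P))
    hits = 0
    for _ in range(trials):
        x = i
        while x not in H:  # a chain started inside H has already hit H at time 0
            x = rng.choices(states, weights=P[x])[0]
        hits += (x == h)
    return hits / trials


print(estimate_q(P, H, 1, 4))  # approximately 0.25
```

For this walk the exact value is $q_{14} = 1/4$, so the estimate should fall close to it.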

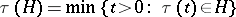

where  is the moment of first hitting the set

is the moment of first hitting the set  . If one introduces the auxiliary Markov chain

. If one introduces the auxiliary Markov chain  differing from

differing from  only in that all states

only in that all states  are absorbing in

are absorbing in  , then for

, then for  the probabilities

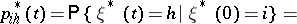

the probabilities

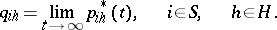

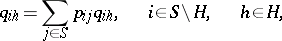

|

|

are monotonically non-decreasing for  and

and

| (*) |

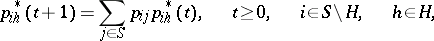

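By the Markov property (conditioning on the first step), and since $\xi'$ moves like $\xi$ from every state outside $H$,

$$p'_{ih}(n+1) = \sum_{j \in S} p_{ij}\, p'_{jh}(n), \quad n \geq 0,\ i \in S \setminus H,\ h \in H,$$

$$p'_{hh}(n) = 1, \quad p'_{ih}(n) = 0, \quad i, h \in H,\ i \neq h.$$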
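The recursion for the auxiliary chain can be iterated directly. Start from $p'_{ih}(0)$, which is $1$ for $i = h$ and $0$ otherwise. At each step, update only the states outside $H$ and keep the boundary values on $H$ fixed. This is a minimal sketch with exact `Fraction` arithmetic. The helper `hitting_by_time` and the example chain (the same hypothetical fair random walk on $\{0, \dots, 4\}$ with $H = \{0, 4\}$) are assumptions for illustration.

```python
from fractions import Fraction

half = Fraction(1, 2)
# Hypothetical fair random walk on S = {0, ..., 4}, distinguished set H = {0, 4}.
P = [
    [0, 1, 0, 0, 0],
    [half, 0, half, 0, 0],
    [0, half, 0, half, 0],
    [0, 0, half, 0, half],
    [0, 0, 0, 1, 0],
]
H = {0, 4}


def hitting_by_time(P, H, h, n_max):
    """Return [v_0, ..., v_{n_max}] with v_n[i] = p'_{ih}(n).

    Only rows p_ij with i outside H are used, since there xi' moves like xi;
    entries for i in H keep their boundary values 1 (i = h) or 0 (i != h).
    """
    size = len(P)
    v = [Fraction(int(i == h)) for i in range(size)]  # p'_{ih}(0)
    history = [v]
    for _ in range(n_max):
        v = [v[i] if i in H else sum(P[i][j] * v[j] for j in range(size))
             for i in range(size)]
        history.append(v)
    return history


history = hitting_by_time(P, H, h=4, n_max=10)
print([str(v[2]) for v in history])
```

Starting from $i = 2$, the printed values are 0, 0, 1/4, 1/4, 3/8, 3/8, 7/16, 7/16, 15/32, 15/32, 31/64. They do not decrease with $n$ and approach $q_{24} = 1/2$, in line with (*).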

The passage to the limit for  taking into account (*) gives a system of linear equations for

taking into account (*) gives a system of linear equations for  :

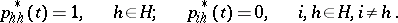

:

|

|
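For a finite state space, the linear system for $q_{ih}$ can be solved directly. The sketch below moves the known values $q_{jh}$, $j \in H$, to the right-hand side. It then runs Gauss–Jordan elimination on the remaining unknowns, using exact `Fraction` arithmetic. It assumes that $H$ is reached with probability 1 from every state, so the system has a unique solution. Without that assumption the system can have several solutions, and $q_{ih}$ is the minimal non-negative one. The helper `absorption_probabilities` and the example walk are hypothetical.

```python
from fractions import Fraction

half = Fraction(1, 2)
# Hypothetical fair random walk on S = {0, ..., 4}, distinguished set H = {0, 4}.
P = [
    [0, 1, 0, 0, 0],
    [half, 0, half, 0, 0],
    [0, half, 0, half, 0],
    [0, 0, half, 0, half],
    [0, 0, 0, 1, 0],
]
H = {0, 4}


def absorption_probabilities(P, H, h):
    """Solve q_ih = sum_j p_ij q_jh for i not in H, with q_hh = 1 and
    q_ih = 0 for i in H, i != h. Returns [q_0h, q_1h, ...].

    Assumes the system has a unique solution (H reached almost surely).
    """
    free = [i for i in range(len(P)) if i not in H]  # the unknowns
    m = len(free)
    # Augmented matrix [I - Q | b]: Q = (p_ij) for i, j outside H, and
    # b_i = sum over j in H of p_ij q_jh, which equals p_ih.
    A = [[Fraction(int(i == j)) - P[i][j] for j in free] + [Fraction(P[i][h])]
         for i in free]
    # Gauss-Jordan elimination; arithmetic is exact, so any nonzero pivot will do.
    for c in range(m):
        pivot_row = next(r for r in range(c, m) if A[r][c] != 0)
        A[c], A[pivot_row] = A[pivot_row], A[c]
        pivot = A[c][c]
        A[c] = [x / pivot for x in A[c]]
        for r in range(m):
            if r != c:
                f = A[r][c]
                A[r] = [x - f * y for x, y in zip(A[r], A[c])]
    q = [Fraction(int(i == h)) for i in range(len(P))]  # boundary values on H
    for k, i in enumerate(free):
        q[i] = A[k][m]  # row k now reads x_k = A[k][m]
    return q


print([str(x) for x in absorption_probabilities(P, H, h=4)])
# ['0', '1/4', '1/2', '3/4', '1']
```

For this walk the result is the familiar $q_{i4} = i/4$. The limits of the iteration of $p'_{ih}(n)$ converge to these same values.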


Absorbing state. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Absorbing_state&oldid=20022