Error

The difference  , where

, where  is a given number that is considered as the approximate value of a certain quantity with exact value

is a given number that is considered as the approximate value of a certain quantity with exact value  . The difference

. The difference  is also called the absolute error. The ratio of

is also called the absolute error. The ratio of  to

to  is called the relative error of

is called the relative error of  . To characterize the error one usually states bounds on it. A number

. To characterize the error one usually states bounds on it. A number  such that

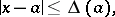

such that

|

is called a bound on the absolute error. A number  such that

such that

|

is called a bound on the relative error. A bound on the relative error is frequently expressed in percentages. The smallest possible numbers are taken as  and

and  .

.

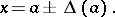
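As a small illustration of these definitions, the following Python sketch computes the absolute and relative error of an approximation. It writes `x` for the exact value and `a` for the approximate value; the function names and the sample numbers are assumptions made here for illustration, and `math.pi` only stands in for an exact value, since it is itself a double-precision number.

```python
import math


def absolute_error(x: float, a: float) -> float:
    """Signed absolute error x - a of the approximation a to the value x."""
    return x - a


def relative_error(x: float, a: float) -> float:
    """Signed relative error (x - a) / a; not defined when a is zero."""
    if a == 0:
        raise ValueError("relative error is undefined for a == 0")
    return (x - a) / a


x = math.pi  # stand-in for the exact value (illustrative choice)
a = 3.14     # approximate value (illustrative choice)

abs_err = absolute_error(x, a)
rel_err = relative_error(x, a)

print(f"absolute error: {abs_err:.6e}")
print(f"relative error: {rel_err:.6e} ({100 * abs(rel_err):.4f} %)")
```

For these sample values, 0.002 would serve as a bound on the absolute error, although it is not the smallest possible one.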

The information that the number  is the approximate value of

is the approximate value of  with a bound

with a bound  of the absolute error is usually stated as:

of the absolute error is usually stated as:

|

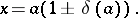

The analogous relation for the relative error is written as

|
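The two notations can be read as intervals that must contain the exact value. The sketch below assumes that reading: an absolute bound gives the interval from `a - abs_bound` to `a + abs_bound`, and a relative bound gives the interval with endpoints `a * (1 - rel_bound)` and `a * (1 + rel_bound)`. The function names and the numbers are hypothetical and chosen only for illustration.

```python
def interval_from_absolute_bound(a: float, abs_bound: float) -> tuple:
    """Interval [a - abs_bound, a + abs_bound] that must contain the exact value."""
    return a - abs_bound, a + abs_bound


def interval_from_relative_bound(a: float, rel_bound: float) -> tuple:
    """Interval with endpoints a * (1 - rel_bound) and a * (1 + rel_bound)."""
    lo, hi = a * (1 - rel_bound), a * (1 + rel_bound)
    return (lo, hi) if lo <= hi else (hi, lo)  # endpoints swap when a < 0


a = 9.81                        # illustrative approximate value
abs_bound = 0.01                # illustrative bound on the absolute error
rel_bound = abs_bound / abs(a)  # bound on the relative error implied by it

abs_interval = interval_from_absolute_bound(a, abs_bound)
rel_interval = interval_from_relative_bound(a, rel_bound)

print(f"x lies in [{abs_interval[0]:.4f}, {abs_interval[1]:.4f}]")
print(f"relative bound: {100 * rel_bound:.3f} %")
```

Dividing the absolute bound by $|a|$ gives a relative bound that describes the same interval, which is why the two intervals agree up to rounding.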

The bounds on the absolute and relative errors indicate the maximum possible discrepancy between the exact value $x$ and its approximation $a$. At the same time, one often uses error characteristics that incorporate the character of the error (for example, the error of a measurement) and the frequencies of different values of the difference between $x$ and $a$. Probabilistic methods are used in the latter approach (see Errors, theory of).

In the numerical solution of a problem, the error in the result is due to inaccuracies occurring in the formulation and in the methods of solution. The error arising because of inaccuracy in the mathematical description of a real process is called the error of the model; that arising from inaccuracy in specifying the initial data is called the input-data error; that due to inaccuracy in the method of solution is called the methodological error; and that due to inaccuracy in the computations is called the computational error (rounding error). Sometimes the error of the model and the input-data error are combined under the name inherent error.
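The interplay between methodological and computational error can be seen in a small experiment. The sketch below is an illustrative example, not taken from the article: it approximates the derivative of the sine function at `x0 = 1.0` by a forward difference quotient. The method's own error shrinks as the step `h` decreases, while the rounding error in the subtraction grows roughly like the machine precision divided by `h`. The function name, the test point, and the step sizes are assumptions chosen for illustration.

```python
import math


def forward_difference(f, x, h):
    """Forward difference quotient (f(x + h) - f(x)) / h approximating f'(x)."""
    return (f(x + h) - f(x)) / h


x0 = 1.0
exact = math.cos(x0)  # derivative of sin at x0, used as the reference value

errors = {}
for k in (1, 4, 8, 12, 15):
    h = 10.0 ** -k
    errors[k] = abs(forward_difference(math.sin, x0, h) - exact)
    print(f"h = 1e-{k:<2d}  total error = {errors[k]:.3e}")
```

For large steps the methodological error dominates, and for very small steps the computational error takes over, so the total error is smallest at an intermediate step size.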

During the calculations, the initial errors are carried from operation to operation; they accumulate and give rise to new errors. The occurrence and propagation of errors in calculations is the subject of specialized study (see Computational mathematics).
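Accumulation of this kind can be observed even in a plain sum. The sketch below is an illustration under assumed values, not part of the article: it adds the double-precision number closest to 0.1 one million times in a loop, where each addition contributes a rounding error, and compares the result with `math.fsum` from the Python standard library, which returns an accurately rounded sum of its inputs.

```python
import math

n = 1_000_000
values = [0.1] * n  # 0.1 is not exactly representable in binary floating point

# Naive left-to-right summation: every addition may round, and errors pile up.
naive = 0.0
for v in values:
    naive += v

# math.fsum tracks the lost low-order bits and rounds only once at the end.
accurate = math.fsum(values)

reference = 100_000.0  # the intended mathematical result of 1e6 * 0.1
print(f"naive sum error:    {abs(naive - reference):.3e}")
print(f"accurate sum error: {abs(accurate - reference):.3e}")
```

The input-data error (0.1 itself being rounded) is the same in both cases; the difference between the two results comes from the rounding errors accumulated over the individual additions.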


Comments

Apart from errors caused by finite-precision arithmetic, discretization errors (arising when a differential equation is replaced by a difference equation) and truncation errors (arising when an infinite process, such as a series, is made finite) are a major subject of study in numerical analysis. See Difference methods.
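A truncation error of the kind mentioned here can be sketched by cutting off the power series of the exponential function after finitely many terms. The example below is illustrative only; the function name `exp_partial_sum` and the chosen term counts are assumptions, and `math.e` serves as the reference value.

```python
import math


def exp_partial_sum(x: float, n_terms: int) -> float:
    """Sum of the first n_terms terms of the series for exp(x): sum of x**k / k!."""
    term, total = 1.0, 0.0
    for k in range(n_terms):
        total += term
        term *= x / (k + 1)  # next term x**(k+1) / (k+1)!
    return total


truncation_errors = {}
for n_terms in (2, 5, 10, 20):
    approx = exp_partial_sum(1.0, n_terms)
    truncation_errors[n_terms] = abs(math.e - approx)
    print(f"{n_terms:2d} terms: error = {truncation_errors[n_terms]:.3e}")
```

With few terms the truncation error dominates; once enough terms are included, the remaining discrepancy is at the level of rounding error.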


Error. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Error&oldid=17295