Approximation of functions, linear methods

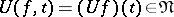

Approximation methods defined by linear operators. If in a linear normed function space  a linear manifold (linear subspace)

a linear manifold (linear subspace)  is chosen as approximating set, then any linear operator

is chosen as approximating set, then any linear operator  which transforms a function

which transforms a function  into a function

into a function  so that

so that

|

(where  and

and  are arbitrary numbers), defines a linear approximation method for functions in

are arbitrary numbers), defines a linear approximation method for functions in  by means of functions in

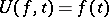

by means of functions in  . A linear approximation method is called projective if

. A linear approximation method is called projective if  for all

for all  in

in  ; it is called positive if

; it is called positive if  for non-negative functions

for non-negative functions  .

.

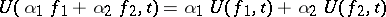

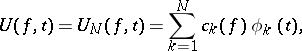

The finite-dimensional case is the most interesting one. Here  is an

is an  -dimensional subspace, and then

-dimensional subspace, and then

| (1) |

where  is a basis of

is a basis of  and

and  are certain linear functionals defined on

are certain linear functionals defined on  . The choice of the linearly independent system

. The choice of the linearly independent system  and the set of functionals

and the set of functionals  is determined by the information about the function that one intends to use while constructing the linear method. If

is determined by the information about the function that one intends to use while constructing the linear method. If  , where

, where  is a fixed system of points in the domain of definition of

is a fixed system of points in the domain of definition of  , and if

, and if  when

when  , while

, while  , then

, then  ,

,  , and one has an interpolation method (for instance, Lagrange's interpolation polynomial, or an interpolation spline). If

, and one has an interpolation method (for instance, Lagrange's interpolation polynomial, or an interpolation spline). If  is a Hilbert space of functions and

is a Hilbert space of functions and  are the Fourier coefficients of a function

are the Fourier coefficients of a function  with respect to an orthonormal system

with respect to an orthonormal system  , then the sum at the right-hand side of (1) yields a linear method of orthogonal projection of

, then the sum at the right-hand side of (1) yields a linear method of orthogonal projection of  onto

onto  ; in this case

; in this case

|

Thus, this method yields the best approximation of  by linear combinations of the functions

by linear combinations of the functions  .

.

In the theory of linear approximation methods, special attention is given to problems of convergence. Let  be a Banach space, let

be a Banach space, let  be a linearly independent system of functions on

be a linearly independent system of functions on  , let

, let  be the subspace generated by the first

be the subspace generated by the first  functions of this system,

functions of this system,  and let

and let  be bounded linear operators from

be bounded linear operators from  into

into  ,

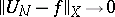

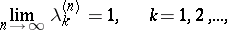

,  . The convergence

. The convergence  (in the sense that

(in the sense that  as

as  ) for any function

) for any function  in

in  holds if and only if: 1) the sequence of norms

holds if and only if: 1) the sequence of norms  of the operators

of the operators  is bounded (see Banach–Steinhaus theorem); and 2)

is bounded (see Banach–Steinhaus theorem); and 2)  for all functions

for all functions  in a set

in a set  that is everywhere dense in

that is everywhere dense in  . These conditions are satisfied, in particular, in the space of

. These conditions are satisfied, in particular, in the space of  -periodic functions

-periodic functions  , with

, with  , and the operators

, and the operators  defined by the trigonometric Fourier sums

defined by the trigonometric Fourier sums

| (2) |

|

of  . As the set

. As the set  on which 2) is satisfied one can take the set of all trigonometric polynomials. If

on which 2) is satisfied one can take the set of all trigonometric polynomials. If  is the space

is the space  (of continuous functions on the whole real axis with period

(of continuous functions on the whole real axis with period  ) or if

) or if  equals

equals  , then

, then  as

as  (see Lebesgue constants). Consequently, there are in

(see Lebesgue constants). Consequently, there are in  and in

and in  functions

functions  to which the sequence

to which the sequence  does not converge in the appropriate metric. Since in the Banach space of functions

does not converge in the appropriate metric. Since in the Banach space of functions  with a norm that is invariant with respect to translations the linear operator

with a norm that is invariant with respect to translations the linear operator  projecting

projecting  onto the subspace

onto the subspace  of trigonometric polynomials of degree at most

of trigonometric polynomials of degree at most  satisfies the inequality

satisfies the inequality  (see [3]), the divergence in

(see [3]), the divergence in  and

and  also holds for the sequence

also holds for the sequence  . In particular, this is true in

. In particular, this is true in  for the sequence of Lagrange interpolation operators with any triangular matrix of interpolation nodes. Similar facts of a negative character also hold in the non-periodic case for the linear projection operators of the spaces

for the sequence of Lagrange interpolation operators with any triangular matrix of interpolation nodes. Similar facts of a negative character also hold in the non-periodic case for the linear projection operators of the spaces  and

and  onto the subspaces

onto the subspaces  ,

,  of algebraic polynomials of degree at most

of algebraic polynomials of degree at most  .

.

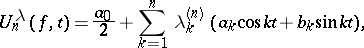

The divergence of the sequence (2) for some functions in  made it necessary to introduce and examine various averages of Fourier sums that do not have this disadvantage. Such are, for instance, the Fejér summation method and the Bernstein–Rogosinski summation method, which are particular cases (with a specific choice of the numerical coefficients

made it necessary to introduce and examine various averages of Fourier sums that do not have this disadvantage. Such are, for instance, the Fejér summation method and the Bernstein–Rogosinski summation method, which are particular cases (with a specific choice of the numerical coefficients  ) of polynomials of the form

) of polynomials of the form

| (3) |

|

Since

| (4) |

the averages (3) belong to the very wide class of linear approximation methods which can be represented in the form of a convolution of  with some (singular) kernel whose properties (in this case, the properties of the triangular matrix of numbers

with some (singular) kernel whose properties (in this case, the properties of the triangular matrix of numbers  ) determine the answer to the problem of convergence. If

) determine the answer to the problem of convergence. If

|

then the sums (3) converge uniformly when  is a trigonometric polynomial, and so 2) is satisfied. For the boundedness of the norm of

is a trigonometric polynomial, and so 2) is satisfied. For the boundedness of the norm of  as an operator from

as an operator from  into

into  , it is necessary that

, it is necessary that

|

and  , uniformly in

, uniformly in  and

and  . These conditions are sufficient as well for the boundedness of the sequence

. These conditions are sufficient as well for the boundedness of the sequence  if the matrix

if the matrix  is subject to some additional restrictions (for instance, convexity or concavity of the columns). Similar to (3), using the matrix of coefficients

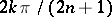

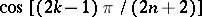

is subject to some additional restrictions (for instance, convexity or concavity of the columns). Similar to (3), using the matrix of coefficients  one can also construct averages by means of Fourier–Chebyshev sums (for

one can also construct averages by means of Fourier–Chebyshev sums (for  ) and also by means of the Lagrange interpolation polynomials with nodes

) and also by means of the Lagrange interpolation polynomials with nodes  (in the periodic case) or with nodes

(in the periodic case) or with nodes  on

on  (see, for instance, [6]).

(see, for instance, [6]).

The question of convergence of positive linear operators  acting from

acting from  into

into  or from

or from  into

into  (in particular, of operators of the form (4) with a positive kernel) is solved in terms of three test functions (see [1]): For the uniform convergence of the sequence

(in particular, of operators of the form (4) with a positive kernel) is solved in terms of three test functions (see [1]): For the uniform convergence of the sequence  to

to  or to

or to  it is necessary and sufficient that this occurs for the functions

it is necessary and sufficient that this occurs for the functions  ,

,  and

and  , or, respectively, for the functions

, or, respectively, for the functions  ,

,  ,

,  .

.

The investigation of the error of approximation by a linear approximation method leads, mostly, to studies on the rate of convergence of  to

to  , to estimates of the error in terms of the difference-differential properties of the approximated function, and to answering the question of how the linear approximation method behaves when the smoothness properties (of the approximated function) are improved.

, to estimates of the error in terms of the difference-differential properties of the approximated function, and to answering the question of how the linear approximation method behaves when the smoothness properties (of the approximated function) are improved.

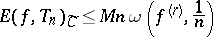

In the examination of approximating properties of the linear approximation method (1), a natural role is played by the best approximation  of

of  by elements of the subspace

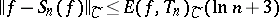

by elements of the subspace  . The Lebesgue inequality

. The Lebesgue inequality

| (5) |

shows, in connection with the Jackson inequality

|

(where  is the modulus of continuity of a function

is the modulus of continuity of a function  ), that, although the order of approximation by Fourier sums is slightly worse than the best approximation (

), that, although the order of approximation by Fourier sums is slightly worse than the best approximation ( in (5) cannot be replaced by a constant), these sums are affected by any increase of the order of differentiability of the function to be approximated. For some linear approximation methods the order of approximation cannot be higher than a certain quantity, no matter how many derivatives the function

in (5) cannot be replaced by a constant), these sums are affected by any increase of the order of differentiability of the function to be approximated. For some linear approximation methods the order of approximation cannot be higher than a certain quantity, no matter how many derivatives the function  may have (the phenomenon of saturation). Thus, the order of approximation by positive linear polynomial operators

may have (the phenomenon of saturation). Thus, the order of approximation by positive linear polynomial operators  cannot be higher then

cannot be higher then  ; for Fejér sums the saturation order is

; for Fejér sums the saturation order is  ; and for Bernstein–Rogosinski sums it is

; and for Bernstein–Rogosinski sums it is  . Interpolation splines with a specific choice of the knots (nodes, joints) ensure the best order of approximation not only for the function itself, but also for some of its derivatives, depending on the degree of the polynomials out of which the spline is composed (see [7], [8]).

. Interpolation splines with a specific choice of the knots (nodes, joints) ensure the best order of approximation not only for the function itself, but also for some of its derivatives, depending on the degree of the polynomials out of which the spline is composed (see [7], [8]).

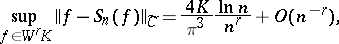

In some particular cases, for specific linear approximation methods exact or asymptotically-exact estimates for the errors of approximation were obtained for some classes of functions. Strictly speaking, the first non-trivial result of this kind was established by A.N. Kolmogorov, who proved in 1935 that

|

where  ,

,  is the class of functions

is the class of functions  for which

for which  is absolutely continuous on

is absolutely continuous on  , and for which almost everywhere

, and for which almost everywhere  . Subsequently, results of similar character were obtained for Fourier sums (and some of their averages) with respect to other important classes of functions, for instance, in terms of upper bounds on the modulus of continuity of the

. Subsequently, results of similar character were obtained for Fourier sums (and some of their averages) with respect to other important classes of functions, for instance, in terms of upper bounds on the modulus of continuity of the  -th derivative (see [2]), [6] and [9]). Special interest is given to linear approximation methods (1) yielding, on some class of functions, the least upper bound of the best approximation by the subspace

-th derivative (see [2]), [6] and [9]). Special interest is given to linear approximation methods (1) yielding, on some class of functions, the least upper bound of the best approximation by the subspace  . Such a property for the classes

. Such a property for the classes  ,

,  with a specific choice of the

with a specific choice of the  , applies to sums of the form (3). For instance, for

, applies to sums of the form (3). For instance, for  one should take

one should take

|

the property also applies to interpolation splines of order  and defect 1 with knots

and defect 1 with knots  ,

,  (see [4] and also Approximation of functions, extremal problems in function classes; Best linear method).

(see [4] and also Approximation of functions, extremal problems in function classes; Best linear method).

References

| [1] | P.P. Korovkin, "Linear operators and approximation theory" , Hindushtan Publ. Comp. (1960) (Translated from Russian) |

| [2] | V.K. Dzyadyk, "Introduction to the theory of uniform approximation of functions by polynomials" , Moscow (1977) (In Russian) |

| [3] | V.M. Tikhomirov, "Some problems in approximation theory" , Moscow (1976) (In Russian) |

| [4] | N.P. Korneichuk, "Extremal problems in approximation theory" , Moscow (1976) (In Russian) |

| [5] | V.L. Goncharov, "The theory of interpolation and approximation of functions" , Moscow (1954) (In Russian) |

| [6] | A.F. Timan, "Theory of approximation of functions of a real variable" , Pergamon (1963) (Translated from Russian) |

| [7] | J.H. Ahlberg, E.N. Nilson, J.F. Walsh, "The theory of splines and their applications" , Acad. Press (1967) |

| [8] | S.B. Stechkin, Yu.N. Subbotin, "Splines in numerical mathematics" , Moscow (1976) (In Russian) |

| [9] | A.I. Stepanets, "Uniform approximation by trigonometric polynomials. Linear methods" , Kiev (1981) (In Russian) |

| [10] | N.P. Korneichuk, "Splines in approximation theory" , Moscow (1976) (In Russian) |

| [11] | J.R. Rice, "The approximation of functions" , 1. Linear theory , Addison-Wesley (1964) |

| [12] | L.L. Schumaker, "Spline functions, basic theory" , Wiley (1981) |

| [13] | P.J. Davis, "Interpolation and approximation" , Dover, reprint (1963) |

Comments

The fact that three test functions are enough for uniform convergence of a sequence of positive linear operators from  into

into  (or from

(or from  into

into  ) can be found in, e.g., [a1], Chapt. 3, Sect. 3. The theorem is a generalization of the Bernstein theorem, and was obtained by H. Bohman [a2]. For integral operators it was obtained by P.P. Korovkin (see also [1]).

) can be found in, e.g., [a1], Chapt. 3, Sect. 3. The theorem is a generalization of the Bernstein theorem, and was obtained by H. Bohman [a2]. For integral operators it was obtained by P.P. Korovkin (see also [1]).

References

| [a1] | E.W. Cheney, "Introduction to approximation theory" , Chelsea, reprint (1982) |

| [a2] | H. Bohman, "On approximation of continuous and of analytic functions" Arkiv Mat. , 2 (1952) pp. 43–56 |

| [a3] | H. Kiesewetter, "Vorlesungen über lineare Approximation" , Deutsch. Verlag Wissenschaft. (1973) |

| [a4] | R.A. DeVore, "The approximation of continuous functions by positive linear operators" , Springer (1972) |

| [a5] | E.W. Cheney, G.R. Hobby, P.D. Morris, F. Schurer, D.E. Wubert, "On the minimal property of the Fourier projection" Trans. Amer. Math. Soc. , 143 (1969) pp. 249–258 |

Approximation of functions, linear methods. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Approximation_of_functions,_linear_methods&oldid=18726