Aitken Delta^2 process

One of the most famous methods for accelerating the convergence of a given sequence.

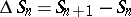

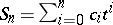

Let  be a sequence of numbers converging to

be a sequence of numbers converging to  . The Aitken

. The Aitken  process consists of transforming

process consists of transforming  into the new sequence

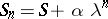

into the new sequence  defined, for

defined, for  , by

, by

|

where  and

and  .

.

The first formula above is numerically unstable since, when the terms are close to $S$, cancellation arises in both the numerator and the denominator. Such cancellation also occurs in the second formula, but only in the computation of a correcting term to $S_n$. Thus, cancellation appears as a second-order error, and the second formula is more stable than the first one, which is only used for theoretical purposes.
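The two algebraically equivalent forms can be compared with a minimal Python sketch. The function names `aitken_quotient` and `aitken_correction`, and the test sequence $S_n = 1 + 0.7^n$, are illustrative choices made here, not part of the original article.

```python
def aitken_quotient(s0, s1, s2):
    """First form: (S_n S_{n+2} - S_{n+1}^2) / (S_{n+2} - 2 S_{n+1} + S_n)."""
    return (s0 * s2 - s1 * s1) / (s2 - 2.0 * s1 + s0)


def aitken_correction(s0, s1, s2):
    """Second form: S_n - (Delta S_n)^2 / Delta^2 S_n."""
    d1 = s1 - s0            # Delta S_n
    d2 = (s2 - s1) - d1     # Delta^2 S_n
    return s0 - d1 * d1 / d2


if __name__ == "__main__":
    # Assumed example: three consecutive terms of S_n = 1 + 0.7**n, far along
    # the sequence, so that all three terms are very close to the limit 1.
    n = 40
    s0, s1, s2 = (1.0 + 0.7 ** (n + i) for i in range(3))
    print("quotient form error:  ", abs(aitken_quotient(s0, s1, s2) - 1.0))
    print("correction form error:", abs(aitken_correction(s0, s1, s2) - 1.0))
```

In the correction form, rounding errors only affect a term that is itself small, which is the reason it is the form usually chosen for actual computation.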

Such a process was proposed in 1926 by A.C. Aitken, but it can be traced back to Japanese mathematicians of the 17th century.

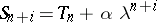

An important algebraic property of an extrapolation algorithm, such as Aitken's, is its kernel, that is the set of sequences which are transformed into a constant sequence. It is easy to see that the kernel of the Aitken  process is the set of sequences of the form

process is the set of sequences of the form  for

for  , with

, with  or, in other words, such that, for all

or, in other words, such that, for all  ,

,  with

with  . If

. If  ,

,  converges to

converges to  . However, it must be noted that this result is true even if

. However, it must be noted that this result is true even if  , that is, even if the sequence

, that is, even if the sequence  is diverging. In other words, the kernel is the set of sequences such that, for all

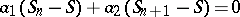

is diverging. In other words, the kernel is the set of sequences such that, for all  ,

,

| (a1) |

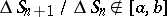

If the Aitken process is applied to such a sequence, then  for all

for all  .

.
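The kernel property can be checked exactly with rational arithmetic. The following is a minimal sketch; the helper names `aitken` and `kernel_sequence` and the particular values of $S$, $\alpha$ and $\lambda$ are assumptions made for illustration.

```python
from fractions import Fraction


def aitken(seq):
    """Return T_0, ..., T_{len(seq)-3} using T_n = S_n - (Delta S_n)^2 / Delta^2 S_n."""
    out = []
    for s0, s1, s2 in zip(seq, seq[1:], seq[2:]):
        d1 = s1 - s0
        d2 = (s2 - s1) - d1
        out.append(s0 - d1 * d1 / d2)
    return out


def kernel_sequence(limit, alpha, lam, count):
    """First `count` terms of S_n = limit + alpha * lam**n, as exact fractions."""
    return [limit + alpha * lam ** n for n in range(count)]


if __name__ == "__main__":
    S = Fraction(3)
    # lam = 1/2 gives a convergent sequence, lam = 2 a divergent one.
    for lam in (Fraction(1, 2), Fraction(2)):
        T = aitken(kernel_sequence(S, Fraction(5), lam, 8))
        print(lam, all(t == S for t in T))
```

Exact fractions are used so that the equality $T_n = S$ is not blurred by rounding; with floating-point numbers the same identity would only hold approximately.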

This result also shows that the Aitken process is an extrapolation algorithm. Indeed, it consists of computing $T_n$, $\alpha$ and $\lambda$ such that the interpolation conditions $S_{n+i} = T_n + \alpha \lambda^{n+i}$, $i = 0, 1, 2$, are satisfied.
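As a sketch of how the three interpolation conditions lead back to the defining formula (assuming $\Delta S_n \neq 0$ and $\Delta^2 S_n \neq 0$), subtract consecutive conditions to eliminate $T_n$:

$$\begin{aligned}
\Delta S_n &= \alpha \lambda^n (\lambda - 1), \qquad \Delta S_{n+1} = \alpha \lambda^{n+1} (\lambda - 1), \\
\lambda &= \frac{\Delta S_{n+1}}{\Delta S_n}, \qquad \alpha \lambda^n = \frac{\Delta S_n}{\lambda - 1} = \frac{(\Delta S_n)^2}{\Delta^2 S_n}, \\
T_n &= S_n - \alpha \lambda^n = S_n - \frac{(\Delta S_n)^2}{\Delta^2 S_n}.
\end{aligned}$$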

Convergence of the sequence $(T_n)$.

If $(S_n)$ is an arbitrary convergent sequence, the sequence $(T_n)$ obtained by the Aitken process can, in some cases, be non-convergent. Examples are known where $(T_n)$ has two cluster points. However, if the sequence $(T_n)$ converges, then its limit is also $S$, the limit of the sequence $(S_n)$. It can be proved that if there are $a$, $b$ and $N$, $a < 1 < b$, such that $\Delta S_{n+1} / \Delta S_n \notin [a, b]$ for all $n \geq N$, then $(T_n)$ converges to $S$.
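A brief sketch of why a condition of this kind suffices, assuming $\Delta S_n \neq 0$ and writing $r_n = \Delta S_{n+1} / \Delta S_n$ (a symbol introduced here for convenience):

$$T_n = S_n - \frac{(\Delta S_n)^2}{\Delta S_{n+1} - \Delta S_n} = S_n - \frac{\Delta S_n}{r_n - 1}.$$

If $r_n \notin [a, b]$ with $a < 1 < b$, then $|r_n - 1| \geq \delta = \min(1 - a,\, b - 1) > 0$, so that

$$|T_n - S_n| \leq \frac{|\Delta S_n|}{\delta} \to 0,$$

because $\Delta S_n \to 0$ for a convergent sequence. Hence $(T_n)$ has the same limit as $(S_n)$.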

Convergence acceleration properties of the Aitken process.

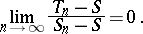

The problem here is to give conditions on $(S_n)$ such that

$$\lim_{n \to \infty} \frac{T_n - S}{S_n - S} = 0.$$

In that case, $(T_n)$ is said to converge faster than $(S_n)$ or, in other words, the Aitken process is said to accelerate the convergence of $(S_n)$.

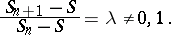

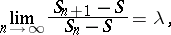

Intuitively, it is easy to understand that if the sequence $(S_n)$ is not too far away from a sequence satisfying (a1), then its convergence will be accelerated. Indeed, if there is a $\lambda \neq 1$ such that

$$\lim_{n \to \infty} \frac{S_{n+1} - S}{S_n - S} = \lambda, \tag{a2}$$

then $(T_n)$ will converge faster than $(S_n)$. Sequences satisfying (a2) are called linear. So, the Aitken process accelerates the convergence of the set of linear sequences. Moreover, if, in addition, $\lambda \neq 0$, then $(T_n)$ converges faster than $(S_{n+2})$. The condition $\lambda \neq 0$ is not a very restrictive one, since, when $\lambda = 0$, the sequence $(S_n)$ already converges sufficiently fast and does not need to be accelerated. Note that it is important to prove the acceleration with respect to $(S_{n+k})$ with $k$ as large as possible (in general, $k$ corresponds to the last index used in the expression of $T_n$, that is, $k = 2$ for the Aitken process, which uses $S_n$, $S_{n+1}$ and $S_{n+2}$), since in certain cases it is possible that $(T_n)$ converges faster than $(S_{n+k})$ but not faster than $(S_{n+k+1})$ for some values of $k$.

The Aitken process is optimal for accelerating linear sequences, which means that it is not possible to accelerate the convergence of all linear sequences by a process using fewer than three successive terms of the sequence, and that the Aitken process is the only process using three terms that is able to do so [a2]. It is the preceding acceleration result which makes the Aitken $\Delta^2$ process so popular, since many sequences coming out of well-known numerical algorithms satisfy (a2). This is, in particular, the case for the Rayleigh quotient method for computing the dominant eigenvalue of a matrix, for the Bernoulli method for obtaining the dominant zero of a polynomial, and for fixed-point iterations with linear convergence.
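To illustrate the acceleration of a linear sequence, the sketch below applies the process to the fixed-point iteration $x_{n+1} = \cos x_n$ and evaluates the ratio $(T_n - S)/(S_{n+2} - S)$. The choice of $\cos$, the starting value $1.0$ and the helper names are assumptions made here for illustration; the limit is approximated by simply iterating much longer.

```python
import math


def aitken(seq):
    """Return T_0, ..., T_{len(seq)-3} using T_n = S_n - (Delta S_n)^2 / Delta^2 S_n."""
    out = []
    for s0, s1, s2 in zip(seq, seq[1:], seq[2:]):
        d1 = s1 - s0
        d2 = (s2 - s1) - d1
        out.append(s0 - d1 * d1 / d2)
    return out


def fixed_point_iterates(F, x0, count):
    """Return the first `count` iterates x_0, F(x_0), F(F(x_0)), ..."""
    xs = [x0]
    for _ in range(count - 1):
        xs.append(F(xs[-1]))
    return xs


S_seq = fixed_point_iterates(math.cos, 1.0, 30)
# Reference value for the limit: the same iteration run far past convergence.
limit = fixed_point_iterates(math.cos, 1.0, 300)[-1]
T_seq = aitken(S_seq)


def acceleration_ratio(n):
    """(T_n - S) / (S_{n+2} - S), which should tend to 0 for a linear sequence."""
    return (T_seq[n] - limit) / (S_seq[n + 2] - limit)


if __name__ == "__main__":
    for n in (0, 5, 10, 15, 20):
        print(n, acceleration_ratio(n))
```

The printed ratios shrink in magnitude as $n$ grows, which is the behaviour described by the acceleration result for sequences satisfying (a2).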

The Aitken process is also able to accelerate the convergence of some sequences for which $\lambda = 1$ in (a2). Such sequences are called logarithmic. They converge more slowly than the linear ones and they are the most difficult sequences to accelerate. Note that an algorithm able to accelerate the convergence of all logarithmic sequences cannot exist.
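A simple case where the process does not accelerate a logarithmic sequence is $S_n = 1/n$ (limit $S = 0$), an example chosen here for illustration and not taken from the article. A direct computation gives $T_n = 1/(2(n+1))$, so $(T_n - S)/(S_n - S) \to 1/2$ rather than $0$. The sketch below confirms this with exact rational arithmetic.

```python
from fractions import Fraction


def aitken(seq):
    """Return the Aitken Delta^2 transform of consecutive triples of `seq`."""
    out = []
    for s0, s1, s2 in zip(seq, seq[1:], seq[2:]):
        d1 = s1 - s0
        d2 = (s2 - s1) - d1
        out.append(s0 - d1 * d1 / d2)
    return out


# S_n = 1/n for n = 1, ..., 12; list index i corresponds to n = i + 1.
S_seq = [Fraction(1, n) for n in range(1, 13)]
T_seq = aitken(S_seq)

# Ratio (T_n - S) / (S_n - S) with S = 0; it equals n / (2 (n + 1)).
ratios = [t / s for t, s in zip(T_seq, S_seq)]

if __name__ == "__main__":
    for n, r in enumerate(ratios, start=1):
        print(n, r, float(r))
```

The error is only roughly halved at each index, so the transformed sequence converges at the same slow rate as the original one.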

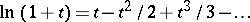

If the Aitken process is applied to the sequence $S_n = \sum_{i=0}^{n} c_i x^i$ of partial sums of a series $f(x) = \sum_{i=0}^{\infty} c_i x^i$, then $T_n$ is identical to the Padé approximant $[n+1/1]_f(x)$ (cf. also Padé approximation). For example, apply the Aitken process to the sequence $(S_n)$ of partial sums of the series $\ln(1+x) = x - x^2/2 + x^3/3 - \cdots$. It is well known that it converges for $-1 < x \leq 1$. So, for $x = 1$, on the order of $10^5$ terms of the series are needed to obtain $\ln 2$ with a precision of $10^{-5}$. Applying the Aitken process to $(S_n)$, then again to $(T_n)$ and so on (a procedure called the iterated $\Delta^2$ process), this precision is achieved with only about $10$ terms. Quite similar results can be obtained for $x = 2$, in which case the sequence $(S_n)$ is diverging.
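The iterated $\Delta^2$ process can be sketched as follows for the alternating series $1 - 1/2 + 1/3 - \cdots = \ln 2$. The helper names and the stopping rule (keep transforming until fewer than three values remain) are assumptions of this sketch, not a prescription from the article.

```python
import math


def aitken(seq):
    """Return the Aitken Delta^2 transform of consecutive triples of `seq`."""
    out = []
    for s0, s1, s2 in zip(seq, seq[1:], seq[2:]):
        d1 = s1 - s0
        d2 = (s2 - s1) - d1
        out.append(s0 - d1 * d1 / d2)
    return out


def iterated_aitken(seq):
    """Apply the Delta^2 process repeatedly while at least three values remain.

    Returns the last value of the final transformed sequence.
    """
    current = list(seq)
    while len(current) >= 3:
        current = aitken(current)
    return current[-1]


def log2_partial_sums(count):
    """Partial sums S_1, ..., S_count of the series sum_{k>=1} (-1)**(k+1) / k."""
    sums, total = [], 0.0
    for k in range(1, count + 1):
        total += (-1) ** (k + 1) / k
        sums.append(total)
    return sums


if __name__ == "__main__":
    for count in (3, 5, 7):
        sums = log2_partial_sums(count)
        print(count,
              abs(sums[-1] - math.log(2)),               # error of the raw partial sum
              abs(iterated_aitken(sums) - math.log(2)))  # error after iterated Delta^2
```

Each pass shortens the sequence by two terms, so an odd number of partial sums reduces to a single extrapolated value.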

There exist several generalizations of the Aitken $\Delta^2$ process for scalar sequences, the best known being the Shanks transformation, which is usually implemented via the $\varepsilon$-algorithm of P. Wynn. There are also vector generalizations of the Aitken process, adapted more specifically to the acceleration of vector sequences.

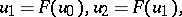

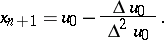

The Aitken process also leads to new methods in numerical analysis. For example, for solving the fixed-point problem $x = F(x)$, consider the following method. It consists in applying the Aitken process to $u_0$, $u_1$ and $u_2$:

$$u_0 = x_n,$$

$$u_1 = F(u_0), \qquad u_2 = F(u_1),$$

$$x_{n+1} = u_0 - \frac{(\Delta u_0)^2}{\Delta^2 u_0}.$$

This is a method due to J.F. Steffensen and its convergence is quadratic (as in the Newton method) under the assumption that $F'(x) \neq 1$ at the fixed point $x$ (same assumption as in Newton's method).
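A minimal sketch of Steffensen's method follows. The function name `steffensen`, the tolerance, the iteration cap and the fallback used when the second difference vanishes are all choices made here for illustration; the test problem $x = \cos x$ is likewise an assumed example.

```python
import math


def steffensen(F, x0, tol=1e-14, max_iter=50):
    """Approximate a fixed point of F by applying one Aitken step per iteration."""
    x = x0
    for _ in range(max_iter):
        u0 = x
        u1 = F(u0)
        u2 = F(u1)
        d1 = u1 - u0            # Delta u_0
        d2 = (u2 - u1) - d1     # Delta^2 u_0
        if d2 == 0.0:
            # The Aitken step is undefined; fall back to the plain iterate u2.
            return u2
        x = u0 - d1 * d1 / d2
        if abs(x - u0) <= tol * max(1.0, abs(x)):
            return x
    return x


if __name__ == "__main__":
    root = steffensen(math.cos, 1.0)
    print(root, abs(root - math.cos(root)))
```

Each iteration costs two evaluations of $F$ and needs no derivative, which is the practical difference from Newton's method applied to $F(x) - x = 0$.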

For all these convergence acceleration methods, see [a1], [a2], [a3], [a4].

References

[a1] C. Brezinski, M. Redivo Zaglia, "Extrapolation methods. Theory and practice", North-Holland (1991)

[a2] J.P. Delahaye, "Sequence transformations", Springer (1988)

[a3] G. Walz, "Asymptotics and extrapolation", Akad. Verlag (1996)

[a4] J. Wimp, "Sequence transformations and their applications", Acad. Press (1981)

Aitken Delta^2 process. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Aitken_Delta%5E2_process&oldid=15250